Google DeepMind officially released Gemma 4 on April 2, 2026, marking a major milestone in open-source AI. This family of models delivers frontier-level intelligence per parameter, built from the same research and technology powering Gemini 3. Unlike earlier Gemma versions with custom licenses, Gemma 4 ships under a fully permissive Apache 2.0 license, enabling unrestricted commercial use, modification, and redistribution.

Gemma 4 stands out for its multimodal capabilities (text + image inputs across all sizes, plus audio on edge models), native support for advanced reasoning and agentic workflows, long context windows up to 256K tokens, and optimization for everything from smartphones and Raspberry Pi to high-end GPUs. It supports over 140 languages and emphasizes efficiency, making powerful AI accessible on consumer and edge hardware without cloud dependency.

CometAPI provides excellent open-source and closed-source model APIs.

What Is Gemma 4?

Gemma 4 is Google DeepMind’s latest family of open multimodal large language models (LLMs), purpose-built for advanced reasoning, agentic AI workflows, and efficient on-device deployment. It maximizes “intelligence-per-parameter” by leveraging insights from proprietary Gemini 3 research while remaining fully open-weight and open-source.

Key advancements over prior Gemma models include:

- Native multimodality: Text + image understanding (all models), with audio support on smaller edge variants.

- Configurable thinking mode: Step-by-step reasoning with structured <|think|> output.

- Native function calling and tool use: Ideal for autonomous agents.

- Extended context: Up to 256K tokens on larger models.

- Hybrid attention architecture: Combines local sliding-window and global attention for efficiency and long-context performance.

- Per-Layer Embeddings (PLE) in smaller models and shared KV cache for memory savings.

- Broad multilingual support: Pre-trained on data covering 140+ languages with cultural nuance awareness.
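The hybrid attention item above can be made concrete with attention masks. The following is a minimal sketch, assuming a causal decoder; the function names, the sequence length, and the window size are illustrative choices and do not reflect Gemma 4's actual configuration.

```python
def global_causal_mask(seq_len):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len, window):
    """Causal mask limited to the `window` most recent positions (position i included)."""
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]


def attended_pairs(mask):
    """Count the allowed (query, key) pairs; a rough proxy for attention cost."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    seq_len, window = 8, 3  # toy sizes, chosen only for illustration
    print(attended_pairs(global_causal_mask(seq_len)))           # 36
    print(attended_pairs(sliding_window_mask(seq_len, window)))  # 21
```

Global layers grow quadratically with sequence length, while sliding-window layers grow linearly, which is why mixing the two can keep long contexts affordable.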

Released under Apache 2.0, Gemma 4 removes previous license restrictions that limited enterprise adoption. Developers can now fine-tune, deploy, and commercialize without friction—positioning it as a direct competitor to fully open ecosystems like Llama and Qwen.

Gemma 4 targets diverse hardware: edge devices (phones, IoT, Raspberry Pi, Jetson Nano) for low-latency offline AI, and workstations/GPUs for high-performance local servers. This “local-first” design prioritizes privacy, cost savings, and inference with no network round trip.

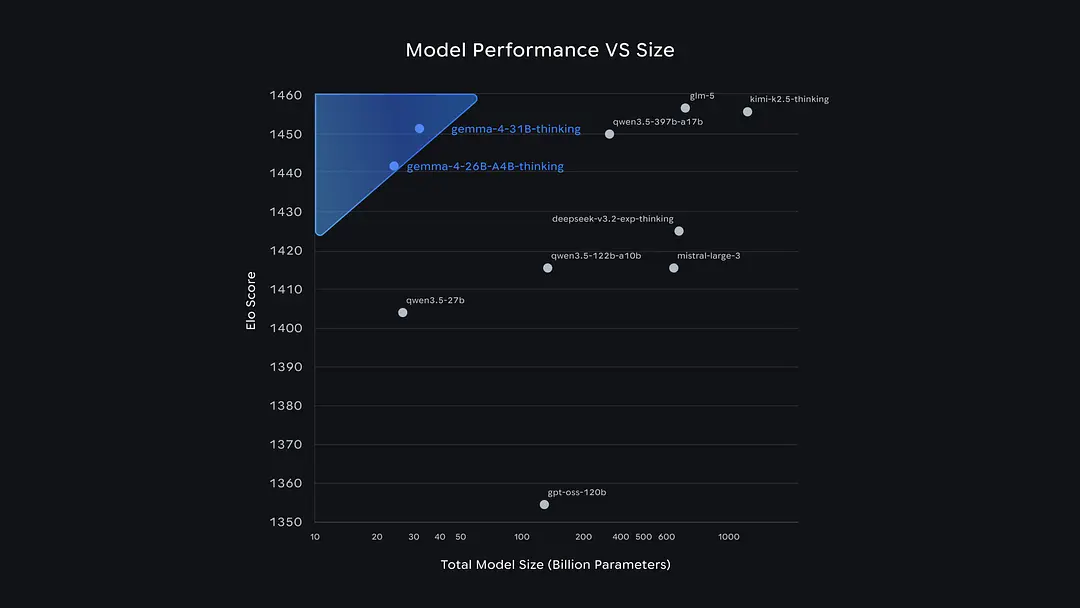

The open-source models ranked ahead of it on the Arena leaderboard are mainly from Chinese teams. Gemma 4's score is close to those of Qwen 3.5 and GLM-5, while the gap between it and OpenAI's GPT-OSS-120B is large.

Developers can now find GLM-5, Qwen 3.5, etc. on CometAPI.

The Four Versions of Gemma 4

Google released Gemma 4 in four carefully optimized sizes, each balancing performance, efficiency, and deployment scenarios. Two use dense architectures with innovative Per-Layer Embeddings (PLE) for edge efficiency; one is a Mixture-of-Experts (MoE) for high performance at low active-parameter cost; and one is a dense flagship.

| Model | Architecture | Total Params | Active Params (MoE) | Effective Params | Context Length | Modalities | Target Hardware |
|---|---|---|---|---|---|---|---|
| Gemma 4 E2B | Dense + PLE | ~5.1B (incl. embeddings) | N/A | 2.3B | 128K | Text, Image, Audio | Smartphones, Raspberry Pi, edge IoT |
| Gemma 4 E4B | Dense + PLE | ~8B (incl. embeddings) | N/A | 4.5B | 128K | Text, Image, Audio | Mobile devices, lightweight GPUs, Jetson |
| Gemma 4 26B A4B | MoE (8 active / 128 total + 1 shared) | 25.2B | 3.8B–4B | N/A | 256K | Text, Image | Workstations, consumer GPUs, local servers |
| Gemma 4 31B | Dense | 30.7B | N/A | N/A | 256K | Text, Image | High-end GPUs (fits on single H100/A100 in FP16) |

Gemma 4 E2B and E4B (edge-optimized): Use PLE to add per-layer specialization with minimal parameter overhead. Ideal for battery-powered or memory-constrained devices. An audio encoder (USM-style Conformer, ~300M params) enables speech-to-text and translation.

Gemma 4 26B A4B (MoE): Activates only ~4B parameters during inference despite 25B+ total size. Delivers near-31B performance at dramatically lower compute cost—perfect for cost-efficient scaling.
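To show why only a fraction of the parameters is active per token, here is a minimal sketch of generic top-k expert routing with one always-on shared expert, matching the "8 active / 128 total + 1 shared" layout in the table. It is a textbook-style illustration, not Gemma 4's implementation; `route_token`, `moe_layer`, and the scalar toy experts are hypothetical.

```python
import math


def route_token(router_logits, top_k=8):
    """Pick the top_k experts for one token and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda e: router_logits[e], reverse=True)
    chosen = ranked[:top_k]
    peak = max(router_logits[e] for e in chosen)  # subtract max for numerical stability
    exps = {e: math.exp(router_logits[e] - peak) for e in chosen}
    total = sum(exps.values())
    return {e: w / total for e, w in exps.items()}


def moe_layer(x, experts, shared_expert, router_logits, top_k=8):
    """Shared expert output plus the weighted outputs of the routed experts only."""
    weights = route_token(router_logits, top_k)
    routed = sum(w * experts[e](x) for e, w in weights.items())
    return shared_expert(x) + routed


if __name__ == "__main__":
    # Toy setup: 128 scalar "experts"; expert s simply multiplies its input by s.
    experts = [lambda x, s=s: s * x for s in range(128)]
    logits = [0.0] * 120 + [1.0] * 8  # the router prefers the last 8 experts
    print(sorted(route_token(logits)))  # indices of the 8 experts that actually run
    print(moe_layer(2.0, experts, lambda x: x, logits))
```

Only the selected experts are evaluated, so compute scales with the active parameters even though all expert weights must still be held in memory.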

Gemma 4 31B (Dense): The flagship for maximum capability. Fits on a single 80GB GPU in 16-bit precision (about 61 GB of weights in FP16/BF16) and ranks among the top open models on leaderboards.
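The hardware targets follow from simple arithmetic on parameter counts. The sketch below estimates weight memory only, using the total parameter counts from the table; it ignores the KV cache, activations, and runtime overhead, and the helper name `weight_memory_gb` and the dtype labels are illustrative.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}

# Total parameter counts in billions, taken from the table above.
TOTAL_PARAMS_B = {
    "Gemma 4 E2B": 5.1,
    "Gemma 4 E4B": 8.0,
    "Gemma 4 26B A4B": 25.2,
    "Gemma 4 31B": 30.7,
}


def weight_memory_gb(params_billion, dtype):
    """Approximate weight footprint in GB (1 GB = 1e9 bytes), weights only."""
    return params_billion * BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    for model, params in TOTAL_PARAMS_B.items():
        row = ", ".join(
            f"{dtype}: {weight_memory_gb(params, dtype):.1f} GB"
            for dtype in BYTES_PER_PARAM
        )
        print(f"{model}: {row}")
```

Under these assumptions the 31B model needs roughly 61 GB at 16 bits, which fits an 80GB GPU, and roughly 123 GB at 32 bits, which does not. The MoE model must also keep all 25.2B parameters resident, even though only about 3.8B are used per token.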

All models include instruction-tuned (“-it”) variants optimized for chat, reasoning, and tool use, plus pre-trained base versions for fine-tuning. The two large models take different approaches. The 31B Dense model targets maximum quality and serves as the best foundation for fine-tuning. The 26B MoE model prioritizes speed: it activates only about 3.8 billion parameters during inference, so it generates tokens much faster, at the cost of slightly lower overall quality.

The two smaller models, E2B and E4B, are specifically designed for mobile phones and IoT devices: they can run completely offline, saving memory and power. Moreover, these smaller models possess a capability that the larger models lack: native audio input, enabling direct speech recognition.

Core Capabilities of Gemma 4

Gemma 4 excels in areas that matter most for real-world AI applications:

1. Advanced Reasoning & Thinking Mode

Configurable step-by-step reasoning via system prompts or enable_thinking=True. Outputs structured <|think|> tags followed by final answers. Dramatically improves performance on complex tasks without extra fine-tuning.

2. Multimodal Understanding

- Vision: Object detection (JSON bounding boxes), OCR (multilingual), document/PDF parsing, chart comprehension, UI understanding, handwriting recognition, and variable-resolution image handling (token budgets: 70–1120 tokens).

- Video: Up to 60 seconds (1 fps frame processing).

- Audio (E2B/E4B only): Automatic speech recognition (ASR) and speech-to-text translation (max 30s).

- Interleaved inputs: Mix text, images, and audio in any order.
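The video limits above translate into a frame count and a rough token budget. This is a minimal sketch, assuming frames are sampled uniformly at 1 fps and that each frame consumes the same 70–1120 token budget quoted for images; that per-frame assumption and both function names are illustrative, not documented behavior.

```python
def sample_frame_indices(duration_s, native_fps, sample_fps=1.0, max_seconds=60.0):
    """Indices of native frames to keep when sampling at sample_fps, capped at max_seconds."""
    usable = min(duration_s, max_seconds)
    n_samples = int(usable * sample_fps)
    step = native_fps / sample_fps
    return [int(round(k * step)) for k in range(n_samples)]


def visual_token_range(n_frames, min_tokens=70, max_tokens=1120):
    """Lower and upper bound on visual tokens if every frame used the image token budget."""
    return n_frames * min_tokens, n_frames * max_tokens


if __name__ == "__main__":
    frames = sample_frame_indices(duration_s=90, native_fps=30)  # 90 s clip at 30 fps
    print(len(frames), frames[:3], frames[-1])  # 60 [0, 30, 60] 1770
    print(visual_token_range(len(frames)))      # (4200, 67200)
```

Under these assumptions a full 60-second clip costs between 4,200 and 67,200 visual tokens, so the chosen per-frame budget largely decides how much of a 128K context remains for text.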

3. Agentic Workflows & Function Calling

Native tool-use support enables autonomous agents for multi-step planning, API calls, app navigation, and task completion. Strong on τ2-bench (agentic tool use).
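On the application side, function calling needs a dispatcher that runs the tool the model asked for. The sketch below assumes the model's call has already been extracted as a JSON object with `name` and `arguments` keys; that shape, the `get_weather` tool, and `dispatch_tool_call` are hypothetical, and Gemma 4's actual tool-call format may differ.

```python
import json


def get_weather(city):
    """Hypothetical tool; a real one would call an external API."""
    return {"city": city, "forecast": "sunny"}


# Registry mapping tool names the model may emit to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(raw_call):
    """Parse one JSON tool call, run the matching function, return a JSON result string."""
    call = json.loads(raw_call)
    name = call.get("name")
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # missing or unexpected arguments
        return json.dumps({"error": str(exc)})
    return json.dumps({"name": name, "result": result})


if __name__ == "__main__":
    print(dispatch_tool_call('{"name": "get_weather", "arguments": {"city": "Paris"}}'))
```

Returning errors as JSON instead of raising lets an agent loop feed the failure back to the model so it can retry with corrected arguments.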

4. Coding & Developer Tools

Exceptional code generation, completion, debugging, and repository-level understanding. Supports JSON-structured outputs for seamless integration. The 31B model scores 80.0% on LiveCodeBench v6, which positions it as a local-first AI programming assistant suitable for offline development scenarios.

5. Long-Context & Multilingual

Supports 128K–256K-token contexts (evaluated on the MRCR needle-in-a-haystack benchmark). Pre-training data runs up to a January 2025 cutoff, with strong cross-lingual performance. Multilingual ability goes beyond translation: the models are natively trained on data covering more than 140 languages.

Benchmark Data: Gemma 4 Performance Breakdown

Gemma 4 sets new standards for open models. The 31B and 26B variants deliver scores once reserved for much larger proprietary systems, while the edge models outperform the much larger Gemma 3 27B on most of the benchmarks below.

Full Benchmark Results (Instruction-Tuned Models)

| Benchmark | Category | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B | Gemma 3 27B (no think) |
|---|---|---|---|---|---|---|
| MMLU Pro | Reasoning & Knowledge | 85.2% | 82.6% | 69.4% | 60.0% | 67.6% |
| AIME 2026 (no tools) | Math | 89.2% | 88.3% | 42.5% | 37.5% | 20.8% |
| GPQA Diamond | Graduate-level Science | 84.3% | 82.3% | 58.6% | 43.4% | 42.4% |
| Tau2 (avg) | Agentic Tool Use | 76.9% | 68.2% | 42.2% | 24.5% | 16.2% |
| LiveCodeBench v6 | Coding | 80.0% | 77.1% | 52.0% | 44.0% | 29.1% |
| Codeforces ELO | Competitive Coding | 2150 | 1718 | 940 | 633 | 110 |
| MMMU Pro | Multimodal Reasoning | 76.9% | 73.8% | 52.6% | 44.2% | 49.7% |
| MATH-Vision | Math + Vision | 85.6% | 82.4% | 59.5% | 52.4% | 46.0% |
| MRCR v2 (8-needle, 128K) | Long Context | 66.4% | 44.1% | 25.4% | 19.1% | 13.5% |

Key Insights:

- Massive leap from Gemma 3: The 31B model improves AIME math from 20.8% to 89.2% and LiveCodeBench from 29.1% to 80.0%.

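- MoE efficiency: 26B A4B stays within about 3 points of the 31B on MMLU Pro, AIME, GPQA Diamond, LiveCodeBench, MMMU Pro, and MATH-Vision while using far less compute during inference. It trails more clearly on Tau2, Codeforces, and long-context MRCR.

- Edge dominance: E4B beats Gemma 3 27B on every benchmark in the table, and E2B beats it on all but MMLU Pro and MMMU Pro, despite both being roughly 6–10x smaller by effective parameter count.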

- Leaderboard rankings: 31B scores ~1452 on Arena AI (text); 26B A4B ~1441. The 26B variant reportedly outperforms much larger models like Qwen 3.5 397B in user preference and coding.
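The comparisons in this list can be checked directly against the table. The sketch below copies the scores from the benchmark table and computes point gains and win counts over Gemma 3 27B; the helper names `delta` and `wins` are illustrative.

```python
# Scores copied from the benchmark table above (Codeforces is an ELO rating,
# every other row is a percentage).
MODELS = ["31B", "26B A4B", "E4B", "E2B", "Gemma 3 27B"]
SCORES = {
    "MMLU Pro": [85.2, 82.6, 69.4, 60.0, 67.6],
    "AIME 2026": [89.2, 88.3, 42.5, 37.5, 20.8],
    "GPQA Diamond": [84.3, 82.3, 58.6, 43.4, 42.4],
    "Tau2 (avg)": [76.9, 68.2, 42.2, 24.5, 16.2],
    "LiveCodeBench v6": [80.0, 77.1, 52.0, 44.0, 29.1],
    "Codeforces ELO": [2150, 1718, 940, 633, 110],
    "MMMU Pro": [76.9, 73.8, 52.6, 44.2, 49.7],
    "MATH-Vision": [85.6, 82.4, 59.5, 52.4, 46.0],
    "MRCR v2 (8-needle, 128K)": [66.4, 44.1, 25.4, 19.1, 13.5],
}


def delta(benchmark, model, baseline="Gemma 3 27B"):
    """Score difference (model minus baseline) on one benchmark, rounded to 0.1."""
    row = SCORES[benchmark]
    return round(row[MODELS.index(model)] - row[MODELS.index(baseline)], 1)


def wins(model, baseline="Gemma 3 27B"):
    """Benchmarks on which `model` scores strictly higher than `baseline`."""
    return [b for b in SCORES if delta(b, model, baseline) > 0]


if __name__ == "__main__":
    print(delta("AIME 2026", "31B"), delta("LiveCodeBench v6", "31B"))
    for model in ("E4B", "E2B"):
        print(model, f"{len(wins(model))}/{len(SCORES)} benchmarks ahead of Gemma 3 27B")
```

Passing `baseline="31B"` to `delta` gives the gap between the MoE and dense models on each benchmark in the same way.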

Vision and audio benchmarks confirm strong out-of-the-box multimodal performance without specialized fine-tuning.

Ecosystem and Tool Support

Gemma 4 enjoys immediate, broad ecosystem integration:

- Hugging Face: Day-one support with transformers, pipeline("any-to-any"), GGUF, ONNX, and multimodal processors.

- Local Runtimes: Ollama, Llama.cpp (LM Studio, Jan), MLX (Apple Silicon with TurboQuant), Mistral.rs (Rust), Transformers.js (WebGPU browser inference).

- Fine-Tuning: TRL, Unsloth, PEFT, Vertex AI, and full multimodal dataset support.

- Hardware Optimization: NVIDIA RTX/DGX Spark/Jetson (via TensorRT-LLM), Google AI Edge tools, and Android/iOS on-device deployment.

- Agent Frameworks: OpenClaw, Hermes, Pi, and CARLA simulation testing.

- Cloud/Studio: Google AI Studio for quick testing; Kaggle Models for download.

This ecosystem makes Gemma 4 deployable in minutes on laptops, servers, or edge devices.

Limitations & Safety:

- Training data cutoff: January 2025 (no real-time knowledge without tools).

- Audio limited to speech (not music); video capped at 60s.

- Hallucination risk remains—use thinking mode and verification.

- Safety: Rigorous filtering and evaluations per Google AI Principles; developers should add application-specific guards.

Why Gemma 4 Matters in 2026

Gemma 4 democratizes frontier AI. By combining multimodal intelligence, agentic capabilities, and Apache 2.0 freedom with hardware-agnostic efficiency, it empowers developers and enterprises to build secure, private, cost-effective AI solutions at scale. The intelligence-per-parameter breakthrough—especially evident in edge models outperforming yesterday’s flagship open models—signals a shift toward truly ubiquitous AI.

Whether you run a 2B model on a phone or the 31B model on a local workstation, Gemma 4 shows that open-source AI has caught up to (and in many cases surpassed) closed alternatives in practical utility.