On Feb 5, 2026, Anthropic unveiled Claude Opus 4.6, the newest flagship in the Claude family. Opus 4.6 doubles down on long-horizon knowledge work and agentic software workflows: it ships with a 1,000,000-token context window (beta), refined multi-agent coordination called Agent Teams, and an adaptive reasoning system (Adaptive Thinking) governed by an effort control. The model is available through the Claude Developer Platform and third-party aggregator APIs (for example, CometAPI) and is pitched as a drop-in upgrade for many Claude use cases.

What is Claude Opus 4.6

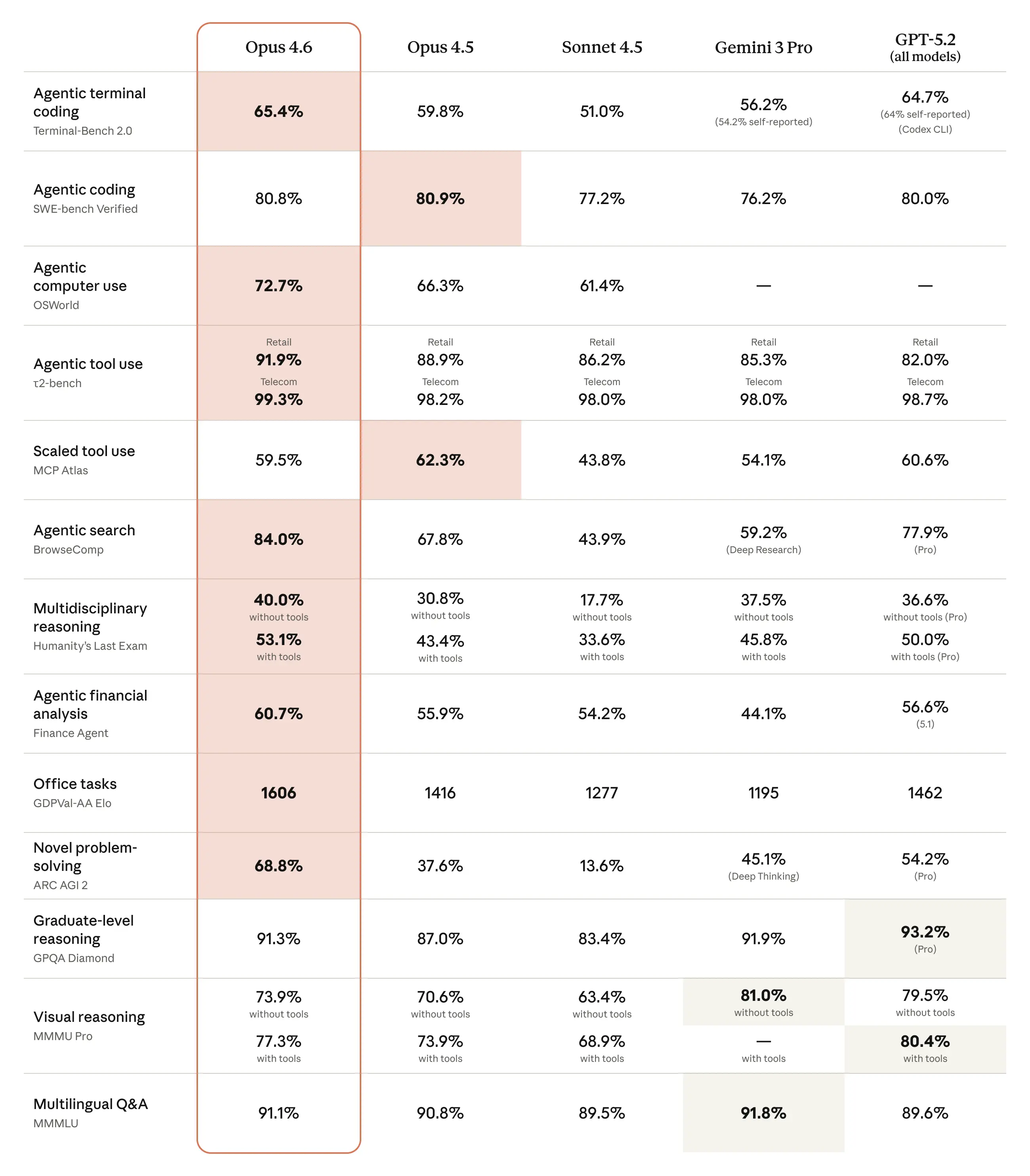

Claude Opus 4.6 is the latest Opus-class model from Anthropic, positioned as their most capable model to date for coding, agentic workflows, and long-context reasoning. The release prioritizes long-lived “agentic” tasks (think staged code migrations, multi-file refactors, or coordinated research agents), heavy document processing, and enterprise integrations. Anthropic describes Opus 4.6 as a near drop-in upgrade from 4.5, but with several behaviour and capability changes that are important for implementers.

Key capabilities of Claude Opus 4.6 to know right away

- 1M token context window (beta): Opus 4.6 introduces a very large context window, offered in beta, that lets the model see and reason over extremely large documents or entire codebases in a single session. That makes tasks such as whole-repository refactors, long legal reviews, and multi-document synthesis much more practical.

- Agent Teams: Opus 4.6 expands on agent capabilities by enabling coordinated groups of agents (Agent Teams) — multiple Claude agents working in parallel on different subtasks and sharing state. This is designed to let systems decompose hard problems (e.g., one agent focusing on test creation, another on refactoring, a third on QA) and coordinate their outputs.

- Adaptive Thinking (effort levels): Instead of a binary “thinking” toggle, Opus 4.6 exposes multiple effort levels (e.g., low/medium/high/max) which trade latency and cost for deeper chain-of-thought and more deliberative reasoning. Anthropic also exposes control mechanisms like context compaction to manage long conversations efficiently.

- 128K Output Token Budget: Opus 4.6 doubles the previous maximum output budget (64K → 128K) so the model can deliver longer, sustained outputs without truncation — useful for multi-part reports or code generation spanning many files. Streaming is recommended for such large outputs.

Other practical improvements include stronger coding and debugging skills, plus mode/priority options designed for enterprise and integrated workflows (the model is already rolling out in integrations such as GitHub Copilot).

Why these features matter (quick take)

- The 1M token window reduces the need for repeated retrieval cycles or stitching many documents into multiple calls — you can keep more context in a single call, which simplifies application logic for many knowledge-intensive workflows.

- Agent Teams change architecture: instead of a single monolithic assistant, you design small specialist agents that collaborate — easier parallelization, clearer responsibility, and potentially better reliability on complex tasks.

- Adaptive Thinking gives you predictable knobs for time vs. quality tradeoffs. That’s essential for production systems where latency, determinism, and cost are constraints.

How to call Claude Opus 4.6 via CometAPI — step-by-step

Using CometAPI to call Opus 4.6

Many teams prefer a unified multi-model gateway when they want to normalize client code across vendors. CometAPI is one such provider: it exposes many vendor models through a single OpenAI-compatible surface, and it also serves Anthropic's native Messages format for cases where you need Anthropic-specific capabilities such as context compression (compaction), or want to use Claude Code via CometAPI. The examples below show the basic patterns: authentication, choosing a model, and passing Anthropic-specific settings; streaming, long-context features, and cost controls build on the same calls. (Adjust names and headers to match the provider’s model registry if CometAPI changes model identifiers.)

Getting started (developer checklist)

- Register at CometAPI, obtain an API key (stored here as `COMETAPI_KEY`), and set the client `base_url` to `https://api.cometapi.com/v1` for OpenAI-compatible clients (CometAPI offers OpenAI-compatible clients and examples). The Anthropic SDK appends `/v1/messages` itself, so it takes the bare host `https://api.cometapi.com`. CometAPI’s console lists available models and any provider-specific flags you can pass through.

- Decide capability settings up front: `thinking: {type: "adaptive"}`, the `output_config.effort` level, `max_tokens` (output budget), streaming for big outputs, and whether context compaction is desired.
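The settings above can be collected into one object and turned into keyword arguments for `client.messages.create`. This is a minimal sketch under assumptions: the field names `thinking` and `output_config.effort` follow the checklist above and are sent through the SDK's generic `extra_body` escape hatch, so the code does not depend on the installed SDK version exposing them as named parameters; `RequestSettings`, `to_kwargs`, and the 32K streaming threshold are hypothetical choices, not CometAPI or Anthropic requirements.

```python
from dataclasses import dataclass

# Effort levels named in this article; whether a given gateway accepts all of them is an assumption.
EFFORT_LEVELS = ("low", "medium", "high", "max")

# Hypothetical threshold: above this output budget we plan to stream the response.
STREAM_THRESHOLD_TOKENS = 32_000


@dataclass(frozen=True)
class RequestSettings:
    """Hypothetical container for the capability settings chosen up front."""
    model: str = "claude-opus-4-6"
    effort: str = "medium"
    max_tokens: int = 4096
    adaptive_thinking: bool = True

    def __post_init__(self) -> None:
        # Fail fast on typos instead of sending an invalid request.
        if self.effort not in EFFORT_LEVELS:
            raise ValueError(f"unknown effort level: {self.effort!r}")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")

    @property
    def should_stream(self) -> bool:
        # Large output budgets are better consumed as a stream.
        return self.max_tokens > STREAM_THRESHOLD_TOKENS

    def to_kwargs(self) -> dict:
        """Keyword arguments for client.messages.create(**kwargs, messages=...)."""
        extra_body: dict = {"output_config": {"effort": self.effort}}
        if self.adaptive_thinking:
            extra_body["thinking"] = {"type": "adaptive"}
        return {
            "model": self.model,
            "max_tokens": self.max_tokens,
            "extra_body": extra_body,
        }


settings = RequestSettings(effort="high", max_tokens=64_000)
print(settings.to_kwargs())
print("stream:", settings.should_stream)
```

With the client from the Anthropic SDK example, a call would look like `client.messages.create(**settings.to_kwargs(), messages=[...])`, or `client.messages.stream(...)` with the same arguments when `should_stream` is true. Keeping the settings in one frozen object makes it easy to log exactly which effort level and budget each request used.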

Anthropic Messages API via CometAPI (Python, official `anthropic` SDK):

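```python
import os

import anthropic

# Get your CometAPI key from https://api.cometapi.com/console/token and export it
# as COMETAPI_KEY (or paste it in place of the placeholder).
COMETAPI_KEY = os.environ.get("COMETAPI_KEY") or "<YOUR_COMETAPI_KEY>"

# The Anthropic SDK appends /v1/messages itself, so the base URL has no /v1 suffix.
BASE_URL = "https://api.cometapi.com"

client = anthropic.Anthropic(
    base_url=BASE_URL,
    api_key=COMETAPI_KEY,
)

message = client.messages.create(
    model="claude-opus-4-6",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Hello, Claude"}],
)

# The response content is a list of blocks; here the first one is a text block.
print(message.content[0].text)
```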

Via CometAPI's OpenAI-compatible endpoint (OpenAI Python SDK):

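```python
# Example using the OpenAI Python SDK pointed at CometAPI's OpenAI-compatible endpoint
import os

from openai import OpenAI

client = OpenAI(
    api_key=os.environ.get("COMETAPI_KEY") or "<YOUR_COMETAPI_KEY>",
    base_url="https://api.cometapi.com/v1",
)

resp = client.chat.completions.create(
    model="claude-opus-4-6",
    max_tokens=4096,  # Claude models require an explicit output budget
    messages=[{"role": "user", "content": "Generate a migration plan for this monorepo."}],
    # Anthropic-specific fields are forwarded as raw JSON via extra_body; whether the
    # OpenAI-compatible route honors these names is an assumption, check CometAPI docs.
    extra_body={"thinking": {"type": "adaptive"}, "output_config": {"effort": "medium"}},
)

print(resp.choices[0].message.content)
```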

Note: parameter names in CometAPI wrappers vary by SDK. CometAPI documents a simple integration model and commonly supports `model="claude-opus-4-6"`; check the CometAPI docs for the exact field mapping and any required request-shape adjustments.

Best Practices and Usage

Agent Teams: design patterns and a short recipe

When to use Agent Teams: large codebase refactors, multi-stage document processing, and workflows that map naturally to separate specialist agents (e.g., architect, implementer, reviewer).

Simple Agent Teams pattern:

- Orchestrator agent receives the overall task and splits it into sub-tasks.

- Worker agents (each a Claude instance) are launched with focused prompts and explicit success criteria.

- Parallel work: workers run in parallel using independent contexts; results are returned to the orchestrator.

- Merge & review: the orchestrator compacts outputs, runs a synthesis pass, and runs a final safety/review check (using `effort=max` for the final pass if needed).
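The pattern above can be sketched as a small client-side orchestrator. This is not Anthropic's Agent Teams feature itself, only an illustration of the orchestrator/worker split under stated assumptions: `Subtask`, `run_team`, and the injected `call_model(system, prompt, effort, max_tokens)` function are hypothetical names, and the effort strings are passed through unchanged to whatever client you wire in. Workers run in parallel threads with independent prompts, and a single synthesis pass runs at `max` effort.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable

# Hypothetical model-call signature: (system_prompt, user_prompt, effort, max_tokens) -> text.
ModelFn = Callable[[str, str, str, int], str]


@dataclass(frozen=True)
class Subtask:
    name: str               # e.g. "tests", "refactor", "qa"
    system_prompt: str      # strict, role-specific instructions
    instructions: str       # focused prompt with explicit success criteria
    max_tokens: int = 2048  # bounded per-worker output budget


def run_team(task: str, subtasks: list[Subtask], call_model: ModelFn,
             max_workers: int = 4) -> str:
    """Fan subtasks out to parallel workers, then merge with one synthesis pass."""

    def work(st: Subtask) -> tuple[str, str]:
        # Each worker sees only the overall goal and its own subtask (independent context).
        prompt = f"Overall task: {task}\n\nYour subtask: {st.instructions}"
        return st.name, call_model(st.system_prompt, prompt, "medium", st.max_tokens)

    # Parallel work: pool.map returns results in the same order as the subtasks.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(work, subtasks))

    # Merge & review: one synthesis/review pass at the highest effort level.
    merged = "\n\n".join(f"[{name}]\n{output}" for name, output in results)
    return call_model(
        "You are the orchestrator. Merge the worker outputs, resolve conflicts, "
        "and flag anything that fails its success criteria.",
        f"Task: {task}\n\nWorker outputs:\n{merged}",
        "max",
        8192,
    )


# Usage with a stub model so the control flow can be run offline.
def fake_model(system: str, prompt: str, effort: str, max_tokens: int) -> str:
    return f"{effort} answer ({max_tokens} tokens max)"


team = [
    Subtask("tests", "You write tests only.", "Add regression tests for the parser."),
    Subtask("refactor", "You refactor code only.", "Split parser.py into modules."),
    Subtask("qa", "You review changes only.", "List risky changes and missing tests."),
]
print(run_team("Modernize the parser package", team, fake_model))
```

Injecting `call_model` keeps the orchestration logic testable offline; in production it would wrap a real client call (for example, the Anthropic SDK client shown earlier) and map the effort labels to whatever field the provider expects.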

Practical tips:

- Give each subagent a strict system prompt and a bounded `max_tokens` to avoid runaway costs.

- Use CometAPI or an orchestration framework to manage parallel calls and retries.

- Use context compaction for orchestrator history so you can preserve decisions without paying for full verbatim history.
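Where platform-side compaction is not available (for example, behind a gateway that does not pass it through), a client-side approximation keeps recent turns verbatim and folds older ones into a summary. A minimal sketch, assuming a hypothetical `summarize(text) -> str` callable (for instance, a cheap low-effort model call) and a rough 4-characters-per-token estimate instead of a real tokenizer; `compact_history` is likewise a hypothetical helper, not Anthropic's compaction feature.

```python
from typing import Callable

Message = dict[str, str]  # {"role": "user" | "assistant", "content": "..."}


def estimate_tokens(messages: list[Message]) -> int:
    # Rough heuristic (~4 characters per token); use a real token counter in production.
    return sum(len(m["content"]) for m in messages) // 4


def compact_history(system: str, messages: list[Message], summarize: Callable[[str], str],
                    budget_tokens: int, keep_recent: int = 4) -> tuple[str, list[Message]]:
    """Return (system_prompt, messages), folding older turns into a summary if over budget."""
    if estimate_tokens(messages) <= budget_tokens:
        return system, messages
    cut = max(len(messages) - max(keep_recent, 1), 0)
    # Move the cut back so the kept turns still start with a user message.
    while cut > 0 and messages[cut]["role"] != "user":
        cut -= 1
    if cut == 0:
        return system, messages  # nothing old enough to compact
    older, recent = messages[:cut], messages[cut:]
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in older)
    summary = summarize(transcript)
    # Carry the summary in the system prompt so the remaining turns keep their roles.
    return f"{system}\n\nSummary of earlier conversation:\n{summary}", recent


history = [{"role": "user" if i % 2 == 0 else "assistant", "content": f"turn {i} " * 50}
           for i in range(10)]
system, kept = compact_history("You are the orchestrator.", history,
                               summarize=lambda text: f"{text.count(chr(10)) + 1} older turns",
                               budget_tokens=500)
print(len(kept))
print(system)
```

Putting the summary in the system prompt rather than as an extra message avoids breaking user/assistant alternation; the trade-off is that the summary itself should stay short, since it is re-sent on every request.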

Context management: handling large inputs and the 1M token window

- Prefer structured ingestion: feed documents as segmented pieces (document metadata + content blocks). Keep anchor points (document titles, indexes) and ask the model to cite sources by index. This is more robust than pasting raw files.

- Use context compaction (where available) for long interactive sessions: let the model summarize older turns so you don’t exhaust the token budget while retaining salient facts. Anthropic provides compaction as a beta capability.

- If you need deterministic recall, store canonical artifacts in your own DB and reference them by ID rather than re-pushing entire files each request. Use the model to summarize or extract only the portions you need for a given step.
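Structured ingestion can be sketched as one text content block per document, each wrapped with an index, a stable ID from your own store, and a title, so the model can cite `[doc N]`; the question goes last. As a prompt-caching illustration, the last document block carries `cache_control: {"type": "ephemeral"}`, the Anthropic Messages API marker that makes the prompt prefix up to that block cacheable (caching only applies above a model-specific minimum prompt length). Assumptions: `Doc` and `build_messages` are hypothetical helpers, the XML-style tags are a prompting convention rather than an API requirement, and whether a gateway such as CometAPI forwards `cache_control` should be verified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str  # stable ID from your own artifact store
    title: str
    text: str


def build_messages(docs: list[Doc], question: str, cache: bool = True) -> list[dict]:
    """Build one user turn: indexed document blocks first, the question last."""
    blocks: list[dict] = []
    for i, doc in enumerate(docs, start=1):
        blocks.append({
            "type": "text",
            "text": (f'<document index="{i}" id="{doc.doc_id}" title="{doc.title}">\n'
                     f"{doc.text}\n</document>"),
        })
    if cache and blocks:
        # Mark the end of the stable document prefix as cacheable (Anthropic prompt caching).
        blocks[-1]["cache_control"] = {"type": "ephemeral"}
    blocks.append({
        "type": "text",
        "text": f"{question}\n\nCite sources as [doc N] using the document index.",
    })
    return [{"role": "user", "content": blocks}]


docs = [
    Doc("contract-17", "Master Services Agreement", "Full text of the agreement."),
    Doc("amend-03", "Amendment 3", "Full text of the amendment."),
]
messages = build_messages(docs, "Which termination clauses changed in Amendment 3?")
print(len(messages[0]["content"]))
```

The resulting list can be passed as `messages=` to `client.messages.create`. Placing the changing question after the cached documents keeps the reusable prefix identical across follow-up questions about the same corpus.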

Cost, latency and quality tradeoffs — using effort and other knobs

- Effort: the single most effective control for balancing cost against capability. Start with `medium` for production systems that require efficiency; use `high` or `max` for critical audits, final reviews, or complex synthesis tasks; `low` is useful for routine retrieval or short Q&A. Defaulting to `medium` and raising `effort` only when required is a common way to cut costs.

- Batch and cache: use prompt caching for repeated questions and batch processing for many small, similar tasks to reduce token re-ingestion costs. Anthropic’s platform and third-party providers support prompt caching and batch modes.

- Streaming & chunked outputs: when requesting very large outputs (long code generation, book drafts), use streaming to reduce memory pressure and enable early acceptance/abort behavior.
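Early acceptance or abort can be handled by consuming the stream incrementally. A minimal sketch: `consume_stream` (a hypothetical helper) works on any iterable of text chunks, writes them to a sink as they arrive, and stops at a character budget or a stop marker, even when the marker is split across chunks. With the official Anthropic Python SDK the chunks would typically come from `stream.text_stream` inside `with client.messages.stream(...) as stream:`; exiting that `with` block early closes the underlying response.

```python
import io
from typing import Iterable, Optional, TextIO


def consume_stream(chunks: Iterable[str], sink: TextIO, max_chars: Optional[int] = None,
                   stop_marker: Optional[str] = None) -> tuple[int, bool]:
    """Write streamed text to sink; return (chars_written, stopped_early)."""
    written = 0
    tail = ""  # recent text kept so a stop marker split across chunks is still found
    for chunk in chunks:
        if max_chars is not None and written + len(chunk) > max_chars:
            # Budget reached: keep only what fits, then stop reading.
            sink.write(chunk[: max_chars - written])
            return max_chars, True
        sink.write(chunk)
        written += len(chunk)
        if stop_marker:
            window = tail + chunk
            if stop_marker in window:
                return written, True
            tail = window[-len(stop_marker):]
    return written, False


# Hypothetical wiring with the Anthropic SDK (not executed here):
# with client.messages.stream(model="claude-opus-4-6", max_tokens=128_000,
#                             messages=[...]) as stream, open("out.md", "w") as f:
#     n, stopped = consume_stream(stream.text_stream, f, stop_marker="<END>")

# Offline demo with a fake stream.
buf = io.StringIO()
print(consume_stream(["def a():\n", "    pass\n", "<END>", "ignored"], buf, stop_marker="<END>"))
```

Writing to a file-like sink instead of accumulating one large string keeps memory flat for outputs near the 128K-token budget, and the stop marker gives the model a way to signal "done" that your client can act on immediately.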

Final thoughts — where Opus 4.6 changes the developer calculus

Opus 4.6 is a clear step toward building large, durable, agentic workflows without stitching many short requests together. The 1M token window and Agent Teams unlock new classes of applications (large codebase automation, long legal/financial reviews, multi-document research assistants), but they also shift the design emphasis from prompt-engineering micro-optimizations to system design: how you store artifacts, orchestrate specialists, measure and contain cost, and monitor agent behavior.

Developers can access Opus 4.6 via CometAPI now. To begin, explore the model’s capabilities in the Playground and consult the API guide for detailed instructions. Before accessing it, make sure you have logged in to CometAPI and obtained an API key. CometAPI offers a price far lower than the official price to help you integrate.


