Technical Specifications of GPT-5.4-Pro

| Item | GPT-5.4-Pro |
|---|---|
| Provider | OpenAI |
| Model family | GPT-5.4 |
| Model tier | Pro (high-compute reasoning variant) |
| Input types | Text, Image |
| Output types | Text |
| Context window | 1,050,000 tokens |
| Max output tokens | 128,000 tokens |
| Knowledge cutoff | Aug 31, 2025 |
| Reasoning levels | medium, high, xhigh |
| Tool support | Web search, file search, code interpreter, image generation |
| API support | Responses API (recommended) |
| Release | March 2026 |
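As a rough planning aid, the limits in the table above can be encoded in a small Python check. This is a sketch under one stated assumption: that the 1,050,000-token context window is shared by the input and the requested output, so both together must fit. The helper name fits_budget is hypothetical, and it takes token counts you have measured yourself; it does not count tokens.

```python
# Limits copied from the specification table above.
CONTEXT_WINDOW_TOKENS = 1_050_000
MAX_OUTPUT_TOKENS = 128_000


def fits_budget(input_tokens: int, max_output_tokens: int) -> bool:
    """Return True if a request stays within the documented limits.

    Assumption: the context window is shared by input and output tokens,
    so input + requested output must not exceed CONTEXT_WINDOW_TOKENS.
    """
    if input_tokens < 0 or max_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if max_output_tokens > MAX_OUTPUT_TOKENS:
        return False  # output cap alone is exceeded
    return input_tokens + max_output_tokens <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    print(fits_budget(900_000, 128_000))  # 1,028,000 total: within the window
    print(fits_budget(950_000, 128_000))  # 1,078,000 total: over the window
```

For example, a 900,000-token input with the full 128,000-token output budget fits (1,028,000 tokens in total), while a 950,000-token input with the same output budget does not.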

What is GPT-5.4-Pro?

GPT-5.4-Pro is the highest-capability API variant of the GPT-5.4 model family, designed for extremely complex reasoning, research, coding, and enterprise automation tasks.

Compared with the standard GPT-5.4 model, GPT-5.4-Pro uses significantly more internal compute to “think harder” before producing responses, which leads to more accurate and reliable outputs for difficult problems.

The model is optimized for professional workloads such as financial analysis, software engineering, scientific research, and large-scale document reasoning.

Main Features of GPT-5.4-Pro

- Extreme reasoning performance: Uses additional compute to produce more precise answers on complex tasks.

- 1.05M token context window: Enables analysis of extremely large documents, datasets, or entire repositories.

- Configurable reasoning depth: Developers can control the reasoning effort level (medium, high, or xhigh).

- Advanced tool orchestration: Works with web search, file retrieval, and other tools through the Responses API.

- Long-running reasoning support: Complex tasks may take minutes to complete due to deeper compute allocation.

- Enterprise reliability: Designed for high-stakes workflows requiring maximum answer accuracy.
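The configurable reasoning depth is set in the request body. The sketch below assumes CometAPI passes OpenAI's Responses API fields through unchanged (model, input, reasoning.effort, max_output_tokens); build_request and ALLOWED_EFFORTS are hypothetical names. It only builds the JSON body and rejects effort values outside the three levels listed above.

```python
import json
from typing import Optional

# Reasoning effort levels listed for GPT-5.4-Pro in the specification table.
ALLOWED_EFFORTS = ("medium", "high", "xhigh")


def build_request(prompt: str, effort: str = "high",
                  max_output_tokens: Optional[int] = None) -> dict:
    """Build a Responses API request body for gpt-5.4-pro (hypothetical helper)."""
    if effort not in ALLOWED_EFFORTS:
        raise ValueError(f"unsupported reasoning effort: {effort!r}")
    body = {
        "model": "gpt-5.4-pro",
        "input": prompt,                   # plain-text prompt
        "reasoning": {"effort": effort},  # medium, high, or xhigh
    }
    if max_output_tokens is not None:
        body["max_output_tokens"] = max_output_tokens  # optional cap on output length
    return body


if __name__ == "__main__":
    print(json.dumps(build_request("Review this merger model.", effort="xhigh"), indent=2))
```

Validating the effort level locally gives a clear error before any request is sent, rather than relying on the server to reject an unsupported value.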

Benchmark Performance

OpenAI reports the following gains for the standard GPT-5.4 model over GPT-5.2 on professional reasoning benchmarks:

| Benchmark | GPT-5.4 | GPT-5.2 |
|---|---|---|
| GDPval (knowledge work) | 83.0% | 70.9% |
| OfficeQA | 68.1% | 63.1% |
| Investment Banking Modeling | 87.3% | 71.7% |

These improvements highlight GPT-5.4’s stronger performance on complex professional knowledge tasks and analytical reasoning workflows.

GPT-5.4-Pro is designed to further improve reliability by allocating more reasoning compute than the standard GPT-5.4 model.
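The size of each gain in the benchmark table is easier to compare in absolute percentage points. The short script below only recomputes differences from the published GPT-5.4 and GPT-5.2 scores; it adds no new measurements, and point_gains is a hypothetical helper name.

```python
# Scores (%) copied from the benchmark table: (GPT-5.4, GPT-5.2).
SCORES = {
    "GDPval (knowledge work)": (83.0, 70.9),
    "OfficeQA": (68.1, 63.1),
    "Investment Banking Modeling": (87.3, 71.7),
}


def point_gains(scores: dict) -> dict:
    """Return GPT-5.4's absolute gain over GPT-5.2, in percentage points."""
    # Rounding to one decimal removes floating-point noise from the subtraction.
    return {name: round(new - old, 1) for name, (new, old) in scores.items()}


if __name__ == "__main__":
    for name, gain in point_gains(SCORES).items():
        print(f"{name}: +{gain} points")
```

This yields gains of 12.1 points on GDPval, 5.0 on OfficeQA, and 15.6 on Investment Banking Modeling.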

GPT-5.4-Pro vs Comparable Models

| Model | Context Window | Key Strength |
|---|---|---|
| GPT-5.4-Pro | 1.05M tokens | Maximum reasoning accuracy |
| GPT-5.4 | 1.05M tokens | Balanced speed and capability |
| o3-pro | 200K tokens | Efficient reasoning |
| Gemini 3 Pro | ~1M tokens | Strong multimodal capabilities |

Key takeaway:

Use GPT-5.4-Pro when maximum reasoning accuracy matters more than latency or cost.

Limitations

- Higher latency due to deeper reasoning compute

- More expensive than standard GPT-5.4

- No audio or video generation

- Some long tasks may take minutes to complete

How to Access the GPT-5.4-Pro API

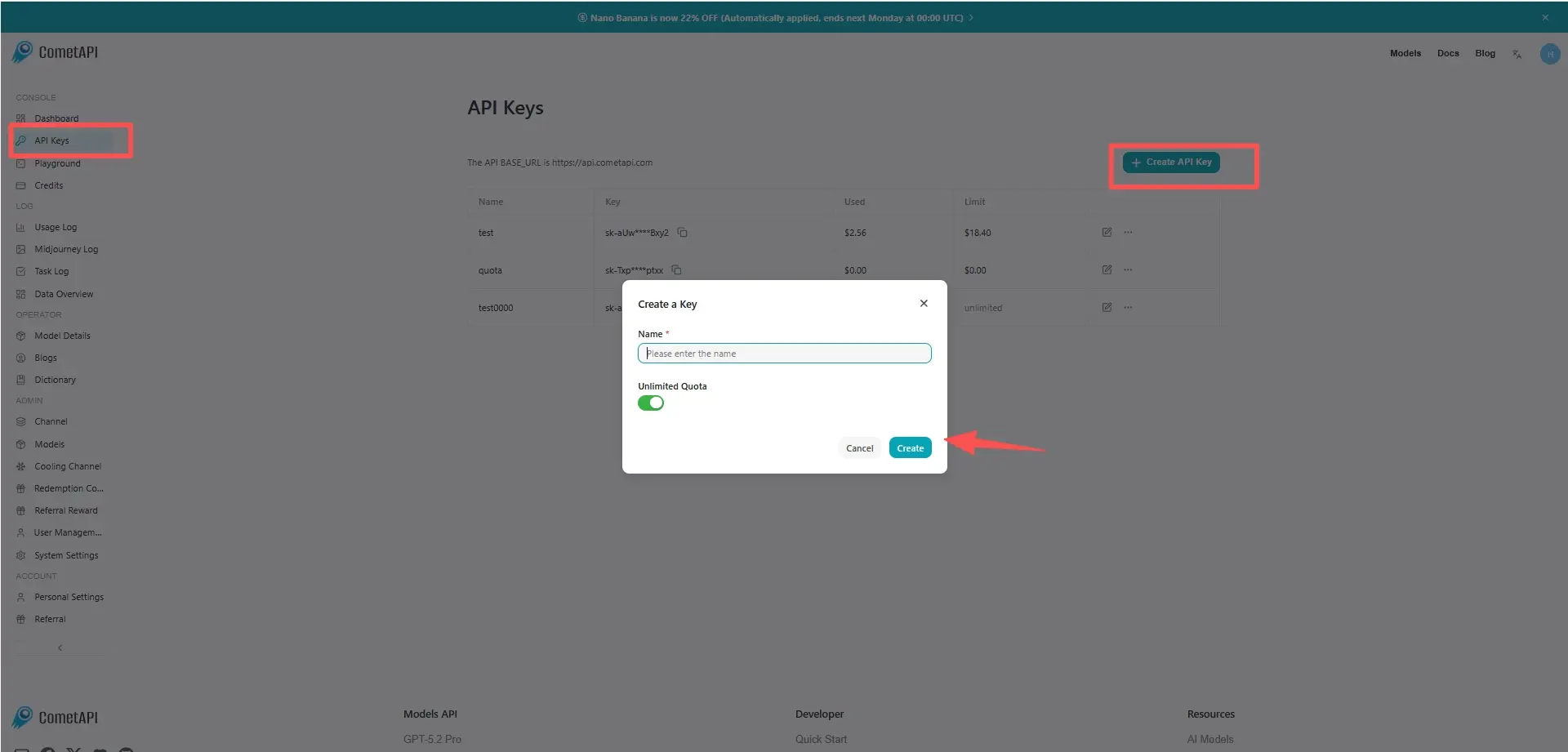

Step 1: Sign Up for an API Key

Log in to cometapi.com; if you do not have an account yet, register first. In your CometAPI console, open the API token section of the personal center, click “Add Token”, and submit to get your API key (a token of the form sk-xxxxx).
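Once you have the sk-xxxxx token, it is safer to keep it out of source code. The sketch below reads it from an environment variable and builds the request headers. The variable name COMETAPI_KEY and the helper name auth_headers are hypothetical, and the Bearer authorization scheme is an assumption that CometAPI follows the OpenAI-style convention; confirm both against the API documentation.

```python
import os


def auth_headers(env_var: str = "COMETAPI_KEY") -> dict:
    """Build HTTP headers for CometAPI requests from an environment variable.

    COMETAPI_KEY is a hypothetical name; the Bearer scheme is an assumption.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"set {env_var} to the sk-... token from your console")
    return {
        "Authorization": f"Bearer {key}",
        "Content-Type": "application/json",
    }
```

For example, after `export COMETAPI_KEY=sk-xxxxx` in your shell, `auth_headers()` returns headers ready to attach to each request.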

Step 2: Send Requests to the GPT-5.4-Pro API

Select the “gpt-5.4-pro” endpoint to send the API request, and set the request body. The request method and request body format are described in the API documentation on our website, which also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. Requests use the Responses API format.

Insert your question or request into the content field; this is what the model will respond to.
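A request like this can be sketched with Python's standard library alone. Two parts are assumptions rather than documented facts: the endpoint URL https://api.cometapi.com/v1/responses, and the body shape, which follows OpenAI's Responses API with the question placed in the content field of a user message. The helpers make_request and send are hypothetical names, and the long default timeout reflects the note above that Pro requests can take minutes.

```python
import json
import os
import urllib.request

# Assumption: an OpenAI-compatible Responses endpoint at this URL.
# Check the API documentation in your console before relying on it.
RESPONSES_URL = "https://api.cometapi.com/v1/responses"


def make_request(prompt: str, api_key: str, effort: str = "high") -> urllib.request.Request:
    """Prepare (but do not send) a POST request to the Responses endpoint."""
    body = {
        "model": "gpt-5.4-pro",
        "input": [{"role": "user", "content": prompt}],  # your question goes in "content"
        "reasoning": {"effort": effort},
    }
    return urllib.request.Request(
        RESPONSES_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(req: urllib.request.Request, timeout_s: float = 900.0) -> dict:
    """Send the request and decode the JSON reply.

    The generous timeout allows for high-effort requests that run for minutes.
    """
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    key = os.environ.get("COMETAPI_KEY")  # hypothetical variable name
    if key:
        reply = send(make_request("Explain the CAP theorem in two sentences.", key))
        print(reply.get("status"))
```

Separating request construction from sending keeps the body easy to inspect or log before any network call is made.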

Step 3: Retrieve and Verify Results

After processing the request, the API returns the task status and the output data. Process the API response to extract the generated answer.
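This step can be sketched as two helpers. output_text checks the status field and joins the text parts of the assistant message, assuming the reply follows OpenAI's Responses API layout (an output list whose message items hold output_text parts). wait_for polls a caller-supplied fetch function until the status is terminal; it only matters if the request was started asynchronously (OpenAI's background mode), and whether CometAPI supports that mode is an assumption to verify. Both helper names are hypothetical.

```python
import time
from typing import Callable

# Terminal statuses in OpenAI's Responses API; assumed to pass through unchanged.
TERMINAL = {"completed", "failed", "cancelled", "incomplete"}


def output_text(response: dict) -> str:
    """Concatenate the text parts of all assistant messages in a reply."""
    status = response.get("status")
    if status != "completed":
        raise RuntimeError(f"response not completed (status={status!r})")
    parts = []
    for item in response.get("output", []):
        if item.get("type") != "message":
            continue  # skip reasoning items, tool calls, and similar entries
        for content in item.get("content", []):
            if content.get("type") == "output_text":
                parts.append(content.get("text", ""))
    return "".join(parts)


def wait_for(fetch: Callable[[], dict], interval_s: float = 10.0,
             max_wait_s: float = 1800.0) -> dict:
    """Call fetch() until the response reaches a terminal status or time runs out."""
    deadline = time.monotonic() + max_wait_s
    while True:
        response = fetch()
        if response.get("status") in TERMINAL:
            return response
        if time.monotonic() >= deadline:
            raise TimeoutError("response is still queued or in progress")
        time.sleep(interval_s)
```

Injecting fetch keeps the polling loop independent of the HTTP client; in practice it would wrap whatever call retrieves the stored response by its id.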