Technical Specifications of GPT-5.4-2026-03-05

| Item | GPT-5.4-2026-03-05 |
|---|---|
| Model family | GPT-5 |
| Provider | OpenAI |
| Release date | March 5, 2026 |
| Context window | 1,050,000 tokens |
| Max output tokens | 128,000 |
| Input types | Text, Image |
| Output types | Text |
| Audio | Not supported |
| Reasoning controls | none, low, medium, high, xhigh |
| Tool support | Web search, File search, Code interpreter, Image generation |
| Knowledge cutoff | Aug 31, 2025 |
| Snapshot stability | Locked model behavior |
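
The context-window and max-output figures in the table can be combined into a rough input budget. The sketch below is only illustrative: it assumes that input and output tokens share a single context window (the table does not state this), and the function names `max_input_tokens` and `fits` are hypothetical helpers, not part of any SDK.

```python
# Figures taken from the specification table above.
CONTEXT_WINDOW_TOKENS = 1_050_000
MAX_OUTPUT_TOKENS = 128_000


def max_input_tokens(reserved_output: int = MAX_OUTPUT_TOKENS,
                     context_window: int = CONTEXT_WINDOW_TOKENS) -> int:
    """Return the input budget left after reserving room for the response.

    Assumption: prompt and completion tokens count against the same window.
    """
    if not 0 <= reserved_output <= MAX_OUTPUT_TOKENS:
        raise ValueError("reserved_output must be between 0 and MAX_OUTPUT_TOKENS")
    return context_window - reserved_output


def fits(input_tokens: int, reserved_output: int = MAX_OUTPUT_TOKENS) -> bool:
    """Check whether a prompt of input_tokens leaves room for the reserved output."""
    return input_tokens <= max_input_tokens(reserved_output)
```

Under that assumption, reserving the full 128,000 output tokens leaves 922,000 tokens for input. Token counts for a real prompt would have to come from a tokenizer, which this sketch does not include.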

What is GPT-5.4?

GPT-5.4 is a unifying frontier release that merges improvements from recent reasoning and coding lines (including the GPT-5.3-Codex work) into a single model targeted at professional knowledge work. It is positioned as a “Thinking” model for deeper, steerable reasoning, with a “Pro” variant for customers who need the highest performance and throughput. The key themes of the release are: (1) longer context and document-scale understanding, (2) improved tool and “computer use” capabilities (controlling apps, spreadsheet and presentation editing), and (3) fewer factual errors and stronger multi-step planning.

Main features of GPT-5.4

- Huge long-context capability (1M+ tokens, experimental): GPT-5.4 supports experimental 1.05M-token sessions (subject to pricing and limits), enabling whole-book and whole-codebase reasoning and multi-document synthesis. For general availability, the standard window remains ≈272K tokens.

- Improved multi-step tool use & native “computer use”: better desktop/browser control for agentic workflows (keyboard/mouse via a computer-use interface), web search that persists across rounds, and a new Tool Search mechanism to find connectors/tools efficiently. OpenAI reports state-of-the-art success on multiple computer-use and web-agent benchmarks.

- Spreadsheet, document, and presentation generation/editing: specific tuning for office workflows; internal benchmarks show major gains on spreadsheet modelling and presentation quality. OpenAI also launched a ChatGPT for Excel add-in alongside the release.

- Steerability & reasoning modes: “Thinking” mode produces an explicit plan/preamble for long tasks and supports mid-response steering (adjusting instructions during generation). Reasoning effort levels let users trade latency for deeper chain-of-thought reasoning.

- Enhanced multimodal understanding: better interpretation of high-resolution images and charts (image input), used for document understanding and presentations.

- Safety posture: OpenAI treats GPT-5.4 as a high-cyber-capability model and deploys enhanced safeguards similar to the GPT-5.3-Codex mitigations.
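
The reasoning effort levels mentioned above can be selected per request. The following is a minimal sketch, assuming the endpoint accepts an OpenAI-style Chat Completions body with a `reasoning_effort` field; whether a given gateway forwards that field for this model is an assumption to check against its API doc. The helper name `build_body` is hypothetical.

```python
# Effort levels as listed in the specification table of this page.
ALLOWED_EFFORTS = ("none", "low", "medium", "high", "xhigh")


def build_body(question: str, effort: str = "medium", model: str = "gpt-5.4") -> dict:
    """Build a Chat Completions-style request body with a reasoning effort level.

    Assumption: the server reads the effort from a top-level
    "reasoning_effort" field, as in OpenAI's Chat Completions format.
    """
    if effort not in ALLOWED_EFFORTS:
        raise ValueError(f"effort must be one of {ALLOWED_EFFORTS}, got {effort!r}")
    return {
        "model": model,
        "reasoning_effort": effort,
        "messages": [{"role": "user", "content": question}],
    }
```

Lower levels favor latency and higher levels favor deeper reasoning, so a caller might use `low` for quick lookups and reserve `high` or `xhigh` for long multi-step tasks.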

Benchmark performance

| Benchmark | GPT-5.4 | GPT-5.3-Codex | GPT-5.2 |
|---|---|---|---|
| GDPval (wins or ties) | 83.0% | 70.9% | 70.9% |
| SWE-Bench Pro (Public) | 57.7% | 56.8% | 55.6% |
| OSWorld-Verified | 75.0% | 74.0%* | 47.3% |
| Toolathlon | 54.6% | 51.9% | 46.3% |
| BrowseComp | 82.7% | 77.3% | 65.8% |

GPT-5.4 vs Comparable Models

| Model | Context Window | Key Strength |
|---|---|---|
| GPT-5.4-2026-03-05 | 1,050,000 tokens | Frontier reasoning + agent workflows |
| GPT-5.3 Instant | Smaller | Faster everyday tasks |
| Claude Opus / Sonnet | ~200k tokens | Long-form reasoning |
| Gemini 3 Pro | ~1M tokens | Multimodal reasoning |

Key difference: GPT-5.4 focuses heavily on professional productivity workflows and agent capabilities, particularly when integrated with external tools.

Representative production use cases

- Enterprise document & compliance workflows: processing long contracts, extracting obligations, and drafting commentaries across multi-document corpora (benefits from the 272K→1M context options for single-session synthesis).

- Spreadsheet automation & financial modelling: generating formulas, building multi-sheet models from a plain-English spec, and reconciling inputs. OpenAI reports large gains on junior investment-banking-style tasks.

- Agentic automation & “computer use”: automated browser and desktop workflows (installation, QA, tool orchestration) and multi-step tool chains (Zapier integrations are cited as a partner use case).

- Software engineering & code maintenance: code generation, refactoring, and terminal/CLI agent tasks (Terminal-Bench gains reported). For large codebases, the long context window helps, but its benefit should be validated on the specific task.

- Knowledge worker augmentation: research synthesis (BrowseComp improvements), slide generation and visual design for presentations.

How to access the GPT-5.4 API

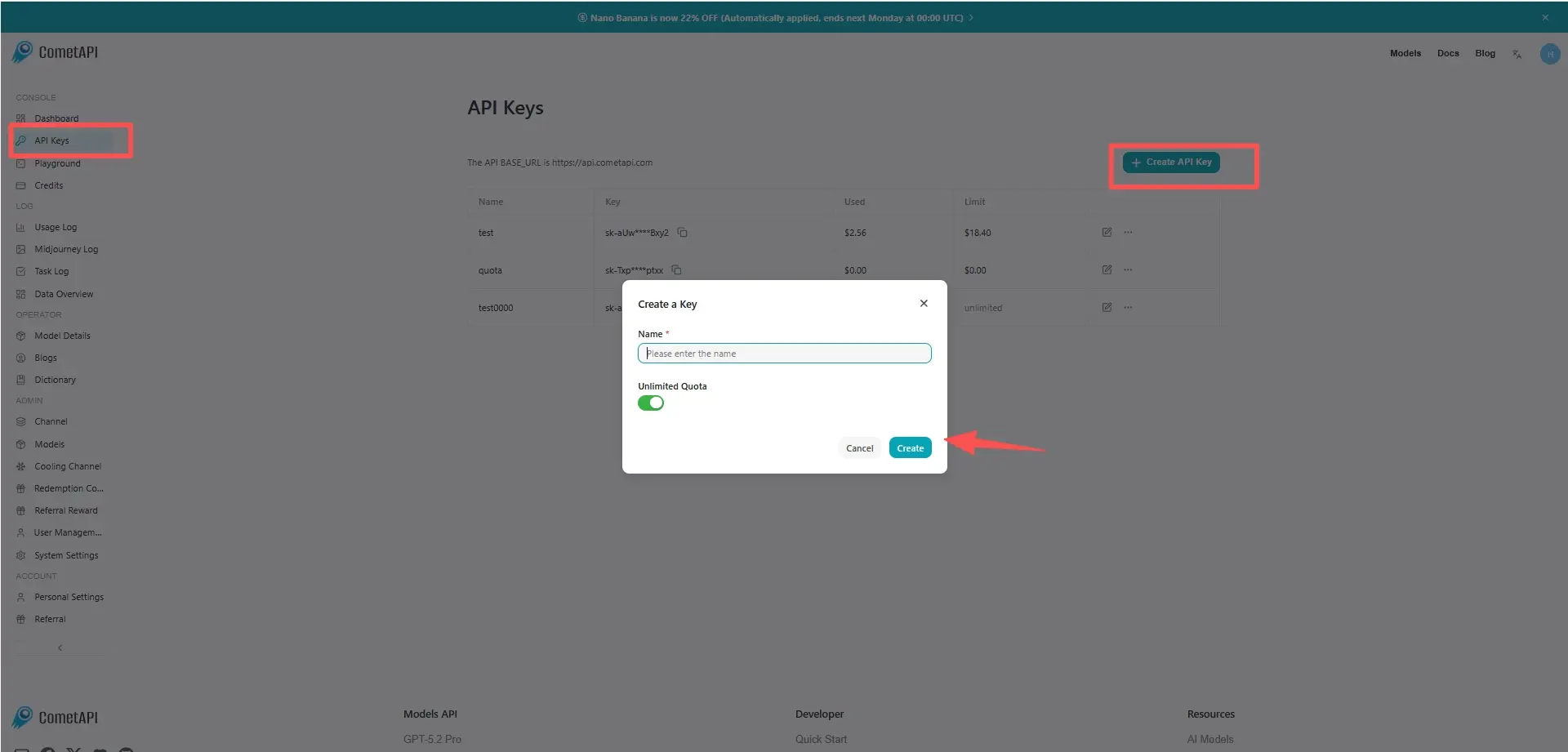

Step 1: Sign Up for API Key

Log in to cometapi.com. If you do not have an account yet, register first. In your CometAPI console, open the API token section of the personal center, click “Add Token”, and submit. The generated token key (of the form sk-xxxxx) is your API key.

Step 2: Send Requests to GPT-5.4 API

Select the “gpt-5.4” endpoint and set the request body. The request method and request body are documented in the API doc on our website, which also provides an Apifox test page for your convenience. Replace <YOUR_API_KEY> with the actual CometAPI key from your account. The model is served through the Chat Completions and Responses API formats.

Insert your question or request into the content field; this is what the model will respond to.
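
As a rough illustration of this step, the sketch below builds a Chat Completions-style request using only the Python standard library. The URL `https://api.cometapi.com/v1/chat/completions` is an assumption based on the usual OpenAI-compatible layout; take the real method, URL, and body from the API doc. The helper names `build_request` and `send` are hypothetical.

```python
import json
import urllib.request

# Assumption: OpenAI-compatible Chat Completions URL; confirm it in the API doc.
API_URL = "https://api.cometapi.com/v1/chat/completions"


def build_request(api_key: str, question: str,
                  model: str = "gpt-5.4") -> urllib.request.Request:
    """Build a POST request whose content field carries the user's question."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # your CometAPI key (sk-xxxxx)
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

A call would then look like `send(build_request("<YOUR_API_KEY>", "Summarize this contract."))`. Building and sending are kept separate so the request can be inspected or logged before any network traffic occurs.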

Step 3: Retrieve and Verify Results

After processing the request, the API responds with the task status and output data. Parse the response to extract the generated answer.
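
Extracting the answer might look like the sketch below. It assumes the response follows the standard Chat Completions shape, with the text under `choices[0].message.content` and failures reported under an `error` key; a Responses-format reply is structured differently and would need its own parser. The function name `extract_answer` is hypothetical.

```python
def extract_answer(response: dict) -> str:
    """Return the generated text from a Chat Completions-style response dict.

    Assumption: the answer is at choices[0].message.content and errors,
    if any, are reported under a top-level "error" key.
    """
    if "error" in response:
        raise RuntimeError(f"API returned an error: {response['error']}")
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    content = choices[0].get("message", {}).get("content")
    if content is None:
        raise ValueError("first choice has no message content")
    return content
```

Raising on an error payload or an empty `choices` list keeps a failed call from being mistaken for an empty answer.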