Claude Opus 4.7, released April 16, 2026, is a significant upgrade over Opus 4.6 in coding, agentic workflows, vision, and instruction-following. It gains 6.8 pp on SWE-bench Verified (87.6% vs 80.8%), 10.9 pp on SWE-bench Pro (64.3% vs 53.4%), and 12 pp on CursorBench (70% vs 58%). It also accepts images with roughly 3.3× more pixels and adds self-verification loops that reduce hallucinations on long tasks. Official pricing is unchanged ($5 input / $25 output per million tokens), but low-effort 4.7 matches medium-effort 4.6 quality, which cuts real-world costs.

On CometAPI, both models (Claude Opus 4.7 and Opus 4.6) are available at $4 input / $20 output per million tokens, with OpenAI-compatible endpoints and zero vendor lock-in. Upgrade if you run production coding agents, complex document analysis, or multi-session workflows: 4.7 is the new default for frontier work.

Claude Opus 4.7 vs Opus 4.6: Quick comparison

Bottom line: Opus 4.7 feels like “Opus 4.6 but unthrottled and refined.” It removes limitations that occasionally appeared in 4.6 (e.g., premature task abandonment, lower visual acuity) while adding efficiency through adaptive reasoning. Users report it is more “opinionated” and collaborative—like working with a senior engineer who double-checks their own work.

Why Claude Opus 4.7 Matters in 2026

On April 16, 2026, Anthropic quietly dropped its most capable generally available model yet: Claude Opus 4.7. Just weeks after the restricted Mythos Preview (a cyber-focused powerhouse), Opus 4.7 reclaims the crown for production workloads while keeping the exact same pricing as Opus 4.6.

Developers and enterprises no longer need to babysit the hardest coding tasks. Users report handing off “the kind that previously needed close supervision” to 4.7 with confidence. The model now self-verifies outputs, follows instructions literally, and sustains multi-hour agentic runs with fewer tool errors and better error recovery.

The model excels at:

- Rigorous long-running tasks with built-in self-verification (Plan → Execute → Verify → Report).

- Literal instruction following—no more loose interpretations of “consider” or “you might.”

- Substantially better vision (up to 2,576 px long edge ≈ 3.75 MP, more than 3× prior resolution).

- Higher taste and creativity in professional outputs like interfaces, slides, and docs.

- Improved file-system memory for true multi-session autonomy.

New features include an xhigh effort level (between high and max), task budgets on the Platform API, and Claude Design tool integration. The model ID is now claude-opus-4-7. Pricing is unchanged officially, but token efficiency improvements often lower effective cost per task.

Core Capability Improvements – What Actually Changed

Advanced Software Engineering & Agentic Coding

Opus 4.7 shines on the hardest problems. On a 93-task internal coding benchmark, it achieved a 13% resolution lift over 4.6, solving four tasks neither 4.6 nor Sonnet 4.6 could crack. Rakuten-SWE-Bench showed 3× more production-grade tasks resolved without human intervention. CursorBench (real IDE workflows) jumped +12 points to 70%.

In agentic workflows, Box reported roughly 2.3× fewer LLM calls (7.1 vs 16.3) and 30% lower AI-unit usage for the same output, which translates directly into cost and latency wins.

Why it matters for developers: You can now trust Opus 4.7 with “the hardest coding work” that previously needed supervision. It pays precise attention to instructions, verifies its own outputs, and reuses file-system memory across sessions—perfect for autonomous refactoring over days.

Real-world wins include:

- Autonomous Rust text-to-speech engine from a single prompt.

- Fixed race conditions and concurrency bugs that stumped prior models on Terminal-Bench 2.0 (+4.0 pp).

- 10–15% lift in Factory Droids task success with ⅓ fewer tool errors.

- Double-digit improvements in code quality, test quality, and review accuracy (CodeRabbit, Qodo).

Low-effort 4.7 now matches medium-effort 4.6 quality, so you get more done for the same (or lower) token spend.

Vision & Multimodal Leap

This is the biggest single upgrade. Maximum image resolution jumps from 1.15 MP (1568 px) to 3.75 MP (2576 px on the long edge) — a 3.3× pixel increase with 1:1 coordinate mapping. No more scale-factor math for screenshots or diagrams.
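As a rough illustration of what the higher limit means in practice, the sketch below checks whether an image fits under a given long-edge limit and, if not, what scale factor would be needed. It assumes the long edge is the only constraint (any separate megapixel cap is not modeled), and the function names are hypothetical helpers, not part of any SDK.

```python
def fit_to_long_edge(width: int, height: int, max_long_edge: int) -> tuple[int, int, float]:
    """Return (new_width, new_height, scale) so the longer side fits the limit."""
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height, 1.0  # already fits: coordinates map 1:1
    scale = max_long_edge / long_edge
    return round(width * scale), round(height * scale), scale


def to_original_coords(x: float, y: float, scale: float) -> tuple[float, float]:
    """Map a point reported on the resized image back to the original image."""
    return x / scale, y / scale


# A 1920x1080 screenshot fits under the 2576 px limit but not under 1568 px.
print(fit_to_long_edge(1920, 1080, 2576))
print(fit_to_long_edge(1920, 1080, 1568))
```

When the scale is 1.0, coordinates returned by the model can be used directly. Images larger than the limit still need the division in `to_original_coords`.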

Results:

- Visual-acuity benchmark: 98.5% vs 54.5% on 4.6.

- CharXiv-R (no tools): +13.4 pp; with tools: +13.6 pp.

- Unlocks pixel-perfect computer-use agents, dense screenshot analysis, chemical-structure parsing, and UI/UX design review.

Agentic Workflows, Reliability & Instruction Following

Opus 4.7 introduces native self-verification—the model plans, executes, verifies, then reports. This dramatically reduces confident-but-wrong answers on long-horizon tasks. File-system memory improvements enable true multi-day autonomy.

Instruction following is stricter and more literal. Prompts tuned for 4.6’s looser style may need auditing—phrases like “consider” are now treated as hard requirements. This is a feature for precision-critical work but requires prompt migration.
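One way to start a prompt audit is to scan existing prompts for soft phrasing that a more literal model may now treat as a requirement. The sketch below is a minimal example. The phrase list is a hypothetical starting point rather than anything published by Anthropic, so extend it with the wording your own prompts use.

```python
import re

# Hypothetical starter list; adjust to match your own prompt style.
SOFT_PHRASES = ["consider", "you might", "if possible", "ideally", "feel free to"]

_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(p) for p in SOFT_PHRASES) + r")\b",
    re.IGNORECASE,
)


def find_soft_phrases(prompt: str) -> list[tuple[int, str]]:
    """Return (line_number, phrase) pairs for each soft phrase in the prompt."""
    hits = []
    for line_no, line in enumerate(prompt.splitlines(), start=1):
        for match in _PATTERN.finditer(line):
            hits.append((line_no, match.group(1).lower()))
    return hits


prompt = "Consider adding type hints.\nYou might also add tests.\nReturn JSON only."
for line_no, phrase in find_soft_phrases(prompt):
    print(f"line {line_no}: '{phrase}' may now be read as a requirement")
```

Each hit is a candidate for rewriting as either an explicit requirement or an explicit option.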

Note on regressions: Long-context needle retrieval (MRCR) dropped notably (e.g., 91.9% → 59.2% at 256K). Anthropic notes they are phasing out such synthetic tests in favor of applied GraphWalks metrics, where real code comprehension remains strong.

New xhigh Effort Level + Task Budgets

Opus 4.7 adds xhigh between high and max for granular control. Claude Code now defaults to xhigh across plans. The new task_budget (public beta) lets the model track total tokens across an entire agentic loop and finish gracefully.
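The request shape of task_budget is not shown here, so the sketch below is only a client-side analogue of the same idea: keep a running total of tokens across an agentic loop and signal when it is time to wrap up. The class and its fields are hypothetical. In a real loop the counts would come from each response's usage data.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Client-side running total of tokens spent across one agentic loop."""

    limit: int
    used: int = 0

    def record(self, input_tokens: int, output_tokens: int) -> None:
        """Add one call's token usage to the running total."""
        self.used += input_tokens + output_tokens

    @property
    def remaining(self) -> int:
        return max(self.limit - self.used, 0)

    def should_wrap_up(self, reserve: int) -> bool:
        """True once fewer than `reserve` tokens remain."""
        return self.remaining < reserve


budget = TokenBudget(limit=200_000)
budget.record(input_tokens=60_000, output_tokens=15_000)
print(budget.remaining, budget.should_wrap_up(reserve=20_000))
```

Checking `should_wrap_up` before each new call leaves room for a final summary step instead of stopping mid-task.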

Instruction Following, Self-Verification & Memory

Opus 4.7 interprets prompts more literally — great for precision, but old vague prompts may need tightening. It now devises its own verification steps (Plan → Execute → Verify → Report) and reuses file-system memory across multi-session work far better than 4.6. For teams building persistent agents, this is one of the most useful upgrades because it reduces re-explaining, reloading, and re-planning.

Tokenizer Update

The new tokenizer improves quality but can produce 1.0–1.35× as many tokens for the same text (up to +35%). The token counting endpoint now returns different numbers than it did for 4.6. Net effect: higher quality per task often offsets the increase, especially at lower effort levels.

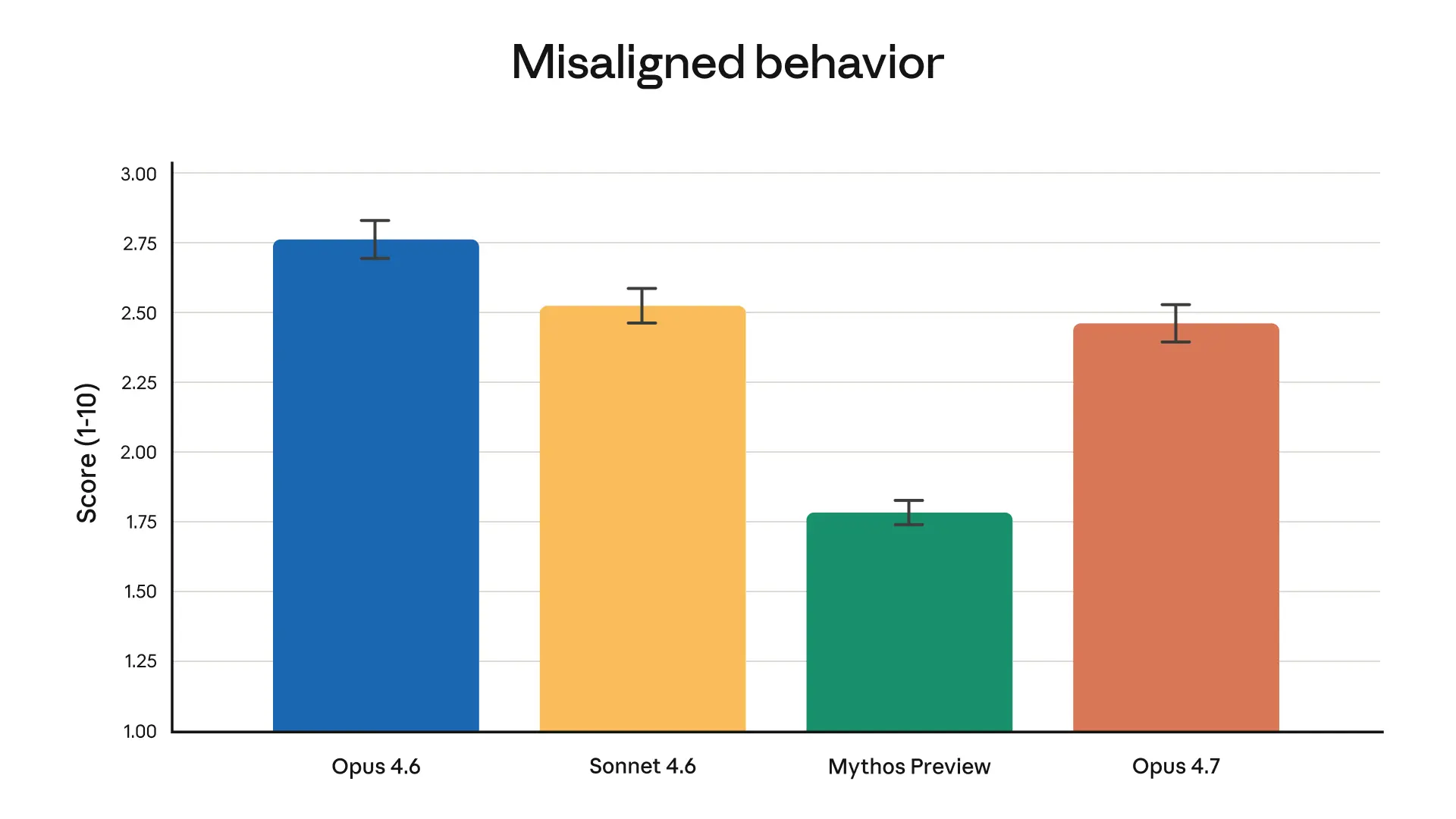

Safety, Alignment & Cybersecurity

Safety profile is similar to 4.6 (low misalignment), with modest improvements in honesty and prompt-injection resistance.

Opus 4.7 ships with Project Glasswing safeguards: real-time blocking of prohibited and high-risk cyber uses. Its CyberGym score is intentionally flat. Rates of misaligned behavior are modestly lower than in 4.6. The full system card is available on Anthropic’s site.

Pricing, Token Efficiency & CometAPI Savings

Official pricing is identical, but effective cost per task drops because low-effort 4.7 ≈ medium-effort 4.6 quality, and higher success rates mean fewer retries. The new tokenizer increases input tokens 0–35% for identical text, but net usage is often favorable at matched quality.

CometAPI advantage: Access both models at $4 input / $20 output per million tokens—20% cheaper than official—plus seamless switching between 500+ models (GPT-5.4, Gemini 3.1, etc.) via a single OpenAI-compatible or Anthropic Messages endpoint. No downtime if providers change pricing. Zero vendor lock-in. Playground testing and unified billing make migration effortless.
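The pricing math above can be sketched as a small calculator. It uses the per-million-token prices quoted in this article and, as an assumption, applies the tokenizer multiplier to input tokens only. The function name and the example workload are hypothetical.

```python
def cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price: float,
    output_price: float,
    input_token_factor: float = 1.0,
) -> float:
    """Cost in USD, with prices given per million tokens."""
    billed_input = input_tokens * input_token_factor
    return (billed_input * input_price + output_tokens * output_price) / 1_000_000


# Example workload: 1M input tokens and 200K output tokens (as counted on 4.6).
official = cost_usd(1_000_000, 200_000, 5, 25)
official_worst_case = cost_usd(1_000_000, 200_000, 5, 25, input_token_factor=1.35)
cometapi = cost_usd(1_000_000, 200_000, 4, 20)

print(f"official: ${official:.2f}")
print(f"official, +35% input tokens: ${official_worst_case:.2f}")
print(f"CometAPI: ${cometapi:.2f}")
```

Plugging in your own token counts shows whether the tokenizer overhead or the per-token discount dominates for your workload.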

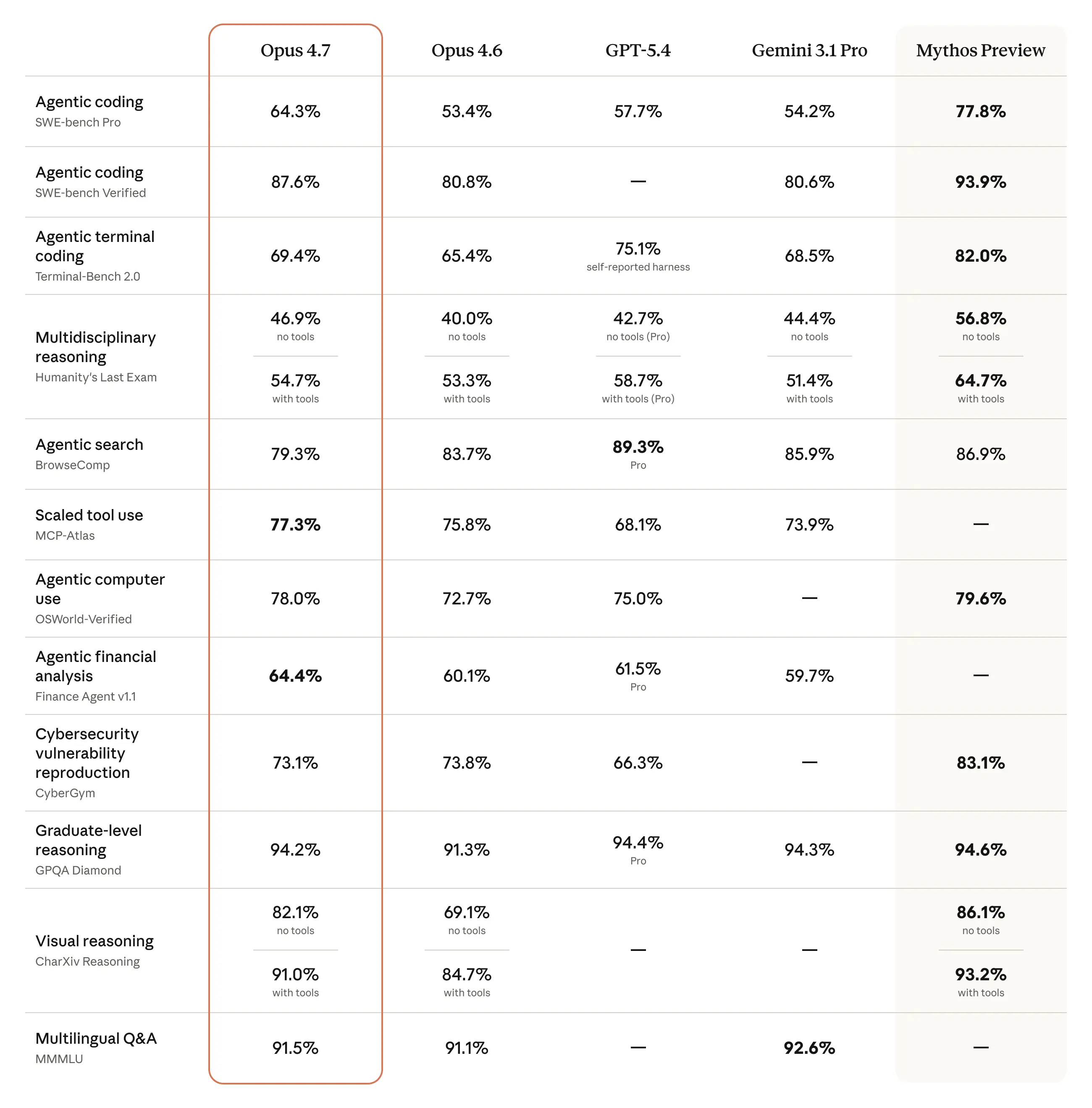

Side-by-Side Benchmark Deep Dive

Here is the complete 14-benchmark head-to-head from Anthropic’s launch data (verified by partners):

Coding Benchmarks

- SWE-bench Verified: 80.8% → 87.6% (+6.8 pp)

- SWE-bench Pro: 53.4% → 64.3% (+10.9 pp)

- Terminal-Bench 2.0: 65.4% → 69.4% (+4.0 pp)

Agentic & Tool-Use

- MCP-Atlas: 62.7% → 77.3% (+14.6 pp) — largest single jump

- OSWorld-Verified: 72.7% → 78.0% (+5.3 pp)

- Finance Agent: 60.7% → 64.4% (+3.7 pp)

Reasoning & Knowledge

- GPQA Diamond: 91.3% → 94.2% (+2.9 pp)

- HLE (no tools): 40.0% → 46.9% (+6.9 pp)

- MMMLU: 91.1% → 91.5% (+0.4 pp)

Vision

- CharXiv-R (no tools): 68.7% → 82.1% (+13.4 pp)

- CharXiv-R (tools): 77.4% → 91.0% (+13.6 pp)

Regressions (transparent)

- BrowseComp: 84.0% → 79.3% (–4.7 pp) — harness-sensitive

- CyberGym: 73.8% → 73.1% (–0.7 pp) — intentional for safety

Internal Research-Agent Benchmark: 0.715 overall (tied top score), with Finance module jumping from 0.767 to 0.813.
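To double-check the deltas and see the ranking at a glance, the percentage scores listed above can be loaded into a small script. The numbers are copied from this article. The Research-Agent score is left out because it is not reported as a before/after percentage pair.

```python
# (Opus 4.6 score, Opus 4.7 score) in percent, as listed above.
SCORES = {
    "SWE-bench Verified": (80.8, 87.6),
    "SWE-bench Pro": (53.4, 64.3),
    "Terminal-Bench 2.0": (65.4, 69.4),
    "MCP-Atlas": (62.7, 77.3),
    "OSWorld-Verified": (72.7, 78.0),
    "Finance Agent": (60.7, 64.4),
    "GPQA Diamond": (91.3, 94.2),
    "HLE (no tools)": (40.0, 46.9),
    "MMMLU": (91.1, 91.5),
    "CharXiv-R (no tools)": (68.7, 82.1),
    "CharXiv-R (tools)": (77.4, 91.0),
    "BrowseComp": (84.0, 79.3),
    "CyberGym": (73.8, 73.1),
}


def rank_by_delta(scores: dict[str, tuple[float, float]]) -> list[tuple[str, float]]:
    """Return (benchmark, delta in pp) pairs sorted from largest gain to largest drop."""
    deltas = [(name, round(new - old, 1)) for name, (old, new) in scores.items()]
    return sorted(deltas, key=lambda item: item[1], reverse=True)


for name, delta in rank_by_delta(SCORES):
    print(f"{name:22s} {delta:+.1f} pp")
```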

Real-World Performance & Use Cases

Box’s agentic workflow tests showed Opus 4.7 completing tasks with 7.1 LLM calls vs 16.3 for 4.6 (2.3× fewer) and 30% lower AI Unit usage. Latency dropped from 242 s to 183 s median.
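The ratios quoted from Box's numbers can be reproduced with two small helpers. The function names are illustrative, and the inputs are the figures quoted above.

```python
def fold_reduction(before: float, after: float) -> float:
    """How many times smaller `after` is than `before`."""
    return before / after


def percent_reduction(before: float, after: float) -> float:
    """Relative drop from `before` to `after`, in percent."""
    return 100 * (before - after) / before


calls = fold_reduction(16.3, 7.1)       # LLM calls per task, 4.6 vs 4.7
latency = percent_reduction(242, 183)   # median latency in seconds

print(f"LLM calls: {calls:.1f}x fewer")
print(f"Median latency: {latency:.1f}% lower")
```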

Enterprise partners (Harvey, Databricks, Hebbia, Ramp, Genspark) report:

- 21% fewer errors in document reasoning.

- Better multi-agent coordination over hours.

- Tighter integration of slide decks, spreadsheets, and code.

Who Should Upgrade Immediately?

- Software engineering teams using Cursor/Claude Code.

- AI agent builders needing reliable long-horizon autonomy.

- Vision-heavy workflows (screenshots, diagrams, UI review).

- Finance, legal, and knowledge-work automation.

API Changes, Migration Guide & Code Examples

Breaking Changes (Messages API)

- Extended thinking budgets removed → use thinking: {"type": "adaptive"}.

- Sampling params (temperature, etc.) no longer accepted → use prompting.

- Thinking content omitted by default.

- New tokenizer requires headroom in max_tokens.
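The breaking changes above can be applied mechanically to an existing request payload. The sketch below is a hypothetical helper, not an official migration tool. It drops the sampling parameters, swaps a budgeted thinking config for adaptive thinking, updates the model ID, and pads max_tokens by the worst-case 1.35× tokenizer factor quoted in this article, which is an assumption you may want to tune.

```python
import math

SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def migrate_request(params: dict, token_factor: float = 1.35) -> dict:
    """Return a copy of a 4.6-style request dict adjusted for Opus 4.7."""
    # Drop sampling parameters, which are no longer accepted.
    migrated = {k: v for k, v in params.items() if k not in SAMPLING_PARAMS}
    migrated["model"] = "claude-opus-4-7"

    # Replace budgeted extended thinking with adaptive thinking.
    thinking = migrated.get("thinking")
    if isinstance(thinking, dict) and thinking.get("type") == "enabled":
        migrated["thinking"] = {"type": "adaptive"}

    # Leave headroom for the new tokenizer (assumed worst-case factor).
    if "max_tokens" in migrated:
        migrated["max_tokens"] = math.ceil(migrated["max_tokens"] * token_factor)
    return migrated


old_request = {
    "model": "claude-opus-4-6",
    "max_tokens": 4096,
    "temperature": 0.7,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [{"role": "user", "content": "Summarize this diff."}],
}
print(migrate_request(old_request))
```

The helper returns a new dict and leaves the original untouched, so both versions can be sent side by side during testing.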

Migration Guide + Code Examples (CometAPI)

Step 1: Update model name to claude-opus-4-7 (or CometAPI alias).

Step 2: Audit prompts for literal interpretation.

Step 3: Test effort levels (start with xhigh for coding).

Step 4: Use task budgets to cap spend.

Here’s a ready-to-run Python example using CometAPI’s Anthropic-compatible endpoint (works with official SDK too):

(Python)

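import os

import anthropic

client = anthropic.Anthropic(
    api_key=os.getenv("COMETAPI_KEY"),  # Your CometAPI sk- key
    # CometAPI base as given here; confirm the exact API base URL in your CometAPI console.
    base_url="https://www.cometapi.com/console/",
)

message = client.messages.create(
    model="claude-opus-4-7",  # or "claude-opus-4-6" for comparison
    max_tokens=4096,
    # No temperature/top_p/top_k: sampling params are no longer accepted on 4.7.
    # Effort goes through extra_body because the SDK rejects unknown keyword
    # arguments. The output_config.effort field name is an assumption, so check
    # the current API reference before relying on it.
    extra_body={"output_config": {"effort": "xhigh"}},  # New level for deep reasoning
    messages=[
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Refactor this legacy Python module into clean, type-hinted, testable code. Follow instructions literally: use Pydantic v2, add comprehensive tests, no external deps beyond stdlib + pydantic. Verify your changes before responding."},
                # High-res screenshot support
                {"type": "image", "source": {"type": "base64", "media_type": "image/png", "data": "iVBORw0KGgoAAAANSUhEUg..."}},
            ],
        }
    ],
)

# Print every text block in the response.
for block in message.content:
    if block.type == "text":
        print(block.text)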

Self-verification demo prompt (works far better on 4.7):

(text):

Plan → Execute → Verify → Report:

1. Analyze the attached codebase.

2. Propose refactors.

3. Implement changes in a new file.

4. Run mental unit tests and edge cases.

5. Only output final verified code if all checks pass.

Run A/B tests on your own workloads—most teams see 20-40% fewer iterations.
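A minimal harness for that kind of A/B comparison might look like the sketch below. Here `run_task` is a hypothetical callable you supply: it runs one task against one model and returns how many iterations were needed. The stub in the example returns fixed numbers purely to show the mechanics, not real measurements.

```python
from statistics import mean
from typing import Callable


def mean_iterations(run_task: Callable[[str, str], int], tasks: list[str], model: str) -> float:
    """Average number of iterations `model` needs across `tasks`."""
    if not tasks:
        raise ValueError("tasks must not be empty")
    return mean(run_task(model, task) for task in tasks)


def relative_reduction(baseline: float, candidate: float) -> float:
    """Percent fewer iterations for the candidate compared with the baseline."""
    return 100 * (baseline - candidate) / baseline


def stub_run_task(model: str, task: str) -> int:
    """Stand-in for a real agent run; replace with your own workload."""
    return 3 if model == "claude-opus-4-7" else 5


tasks = ["fix flaky test", "refactor parser", "add pagination"]
old = mean_iterations(stub_run_task, tasks, "claude-opus-4-6")
new = mean_iterations(stub_run_task, tasks, "claude-opus-4-7")
print(f"{relative_reduction(old, new):.0f}% fewer iterations (stub data)")
```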

Note:

First, the new tokenizer generates more tokens from the same text. Opus 4.7 introduced a new tokenizer that improves how the model processes text. The trade-off is that the same input maps to more tokens; the exact number depends on the content type, but is roughly 1.0 to 1.35 times the previous count.

Second, higher effort levels allow for more thorough reasoning, especially in multi-turn agent scenarios. This leads to better reliability, but also more output tokens.

The official guidance offers three approaches:

- Adjusting the effort level using the effort parameter.

- Limiting the budget using task budgets.

- Telling the model to "be more concise" in the prompt.

Known limitations and migration notes

- Extended thinking budgets removed: thinking: {type: "enabled", budget_tokens: N} is no longer supported; use thinking: {"type": "adaptive"} instead.

- Sampling params no longer accepted: temperature, top_p, and top_k should be removed from requests when migrating to Opus 4.7; use prompting instead.

- The model is described as more literal and more direct than Opus 4.6, which is useful for precision but may require sharper prompts.

- New tokenizer requires headroom in max_tokens: Anthropic recommends re-checking max_tokens headroom because Opus 4.7 can produce higher token counts for the same text.

- Thinking content omitted by default.

Final Verdict & Recommendation

Claude Opus 4.7 is the clear winner for any serious coding, agentic, or vision workload in 2026. The gains are not incremental — they are production-transforming. If you’re on Opus 4.6, migrate this week. The combination of higher quality, fewer calls, and identical (or lower via CometAPI) pricing makes it a no-brainer.

Action steps:

- Test 4.7 on CometAPI’s playground with your real workloads.

- Update one service first (Cursor or your agent framework).

- Monitor token usage for the first week.

- Scale confidently knowing you have unified, cheaper access across 500+ models.