📊 Technical Specifications

| Specification | Details |
|---|---|
| Model family | Gemini 3 (Flash-Lite) |
| Context window | Up to 1 million tokens (multimodal: text, images, audio, video) |
| Output token limit | Up to 64K tokens |
| Input types | Text, images, audio, video |
| Core architecture basis | Based on Gemini 3 Pro |
| Deployment channels | Gemini API (Google AI Studio), Vertex AI |
| Pricing (preview) | ~$0.25 per 1M input tokens, ~$1.50 per 1M output tokens |
| Reasoning controls | Adjustable “thinking levels” (e.g., minimal to high) |
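
To make the preview pricing concrete, here is a minimal cost-estimate sketch. It assumes the approximate rates from the table apply linearly per token; the workload figures (10,000 requests of 2,000 input and 500 output tokens) are hypothetical and only there for illustration.

```python
# Approximate preview prices from the table above (USD per 1M tokens).
INPUT_USD_PER_MILLION = 0.25
OUTPUT_USD_PER_MILLION = 1.50


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the approximate cost in USD at the preview rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 10,000 requests, each 2,000 input and 500 output tokens.
total = estimate_cost_usd(10_000 * 2_000, 10_000 * 500)
print(f"Estimated cost: ${total:.2f}")  # prints "Estimated cost: $12.50"
```

Output tokens cost roughly six times as much as input tokens at these rates, so response length tends to dominate the bill for short prompts.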

🔍 What Is Gemini 3.1 Flash-Lite?

Gemini 3.1 Flash-Lite is the cost-efficient variant of Google’s Gemini 3 series, optimized for high-volume AI workloads where low latency, low per-token cost, and high throughput are priorities. It preserves the core multimodal reasoning backbone of Gemini 3 Pro while targeting bulk processing use cases such as translation, classification, content moderation, UI generation, and structured data synthesis.

✨ Main Features

- Ultra-Large Context Window: Handles up to 1 M tokens of multimodal input, enabling long-document reasoning and video/audio context processing.

- Cost-Efficient Execution: Significantly lower per-token costs compared to earlier Flash-Lite models and competitors, enabling high-volume usage.

- High Throughput & Low Latency: ~2.5× faster time-to-first-token and ~45 % faster output throughput over Gemini 2.5 Flash.

- Dynamic Reasoning Controls: “Thinking levels” let developers trade response speed against reasoning depth on a per-request basis.

- Multimodal Support: Native processing of images, audio, video, and text within a unified context space.

- Flexible API Access: Available via Gemini API in Google AI Studio and enterprise Vertex AI workflows.
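
As a rough illustration of per-request thinking levels, the sketch below builds a request body with a chosen level. The field names (`generationConfig`, `thinkingConfig`, `thinkingLevel`) are assumed to follow the Gemini API's generateContent JSON shape, and the level names other than "minimal" and "high" are assumptions; check the current API reference before relying on either.

```python
import json

# Assumed level names; the page only states that levels range from minimal to high.
ALLOWED_LEVELS = ("minimal", "low", "medium", "high")


def build_request_body(prompt: str, thinking_level: str = "minimal") -> dict:
    """Build a generateContent-style body with a per-request thinking level."""
    if thinking_level not in ALLOWED_LEVELS:
        raise ValueError(f"unknown thinking level: {thinking_level!r}")
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        # Assumed field names, modeled on the Gemini API JSON format.
        "generationConfig": {
            "thinkingConfig": {"thinkingLevel": thinking_level},
        },
    }


# A bulk classification call can stay at the fastest level,
# while a harder request opts into deeper reasoning.
fast = build_request_body("Classify this review as positive or negative: ...")
deep = build_request_body("Find the bug in this function: ...", "high")
print(json.dumps(deep["generationConfig"]))
```

Keeping the level as a per-call argument, rather than a global setting, matches the per-request tuning described above.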

📈 Benchmark Performance

The following metrics showcase Gemini 3.1 Flash-Lite’s efficiency and capability compared with earlier Flash/Lite variants and other models (reported March 2026):

| Benchmark | Gemini 3.1 Flash-Lite | Gemini 2.5 Flash Dynamic | GPT-5 Mini |
|---|---|---|---|
| GPQA Diamond (scientific knowledge) | 86.9 % | 66.7 % | 82.3 % |
| MMMU-Pro (multimodal reasoning) | 76.8 % | 51.0 % | 74.1 % |
| CharXiv (complex chart reasoning) | 73.2 % | 55.5 % | 75.5 % (+python) |
| Video-MMMU | 84.8 % | 60.7 % | 82.5 % |
| LiveCodeBench (code reasoning) | 72.0 % | 34.3 % | 80.4 % |
| 1M Long-Context | 12.3 % | 5.4 % | Not supported |

These scores indicate that Flash-Lite maintains competitive reasoning and multimodal understanding even with its efficiency-oriented design, often outperforming older Flash variants across key benchmarks.
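
The comparison can be made explicit with a few lines of arithmetic. This sketch only restates the figures from the table; it adds no new measurements, and the helper names are illustrative.

```python
# Scores (%) copied from the table above, in the order
# (Gemini 3.1 Flash-Lite, Gemini 2.5 Flash Dynamic, GPT-5 Mini).
# None marks "Not supported"; the GPT-5 Mini CharXiv score is the "+python" figure.
SCORES = {
    "GPQA Diamond": (86.9, 66.7, 82.3),
    "MMMU-Pro": (76.8, 51.0, 74.1),
    "CharXiv": (73.2, 55.5, 75.5),
    "Video-MMMU": (84.8, 60.7, 82.5),
    "LiveCodeBench": (72.0, 34.3, 80.4),
    "1M Long-Context": (12.3, 5.4, None),
}


def gains_over_previous_flash() -> dict:
    """Percentage-point gain of 3.1 Flash-Lite over Gemini 2.5 Flash Dynamic."""
    return {name: round(new - old, 1) for name, (new, old, _) in SCORES.items()}


def leads_over_gpt5_mini() -> list:
    """Benchmarks where 3.1 Flash-Lite scores higher than GPT-5 Mini."""
    return [
        name
        for name, (new, _, mini) in SCORES.items()
        if mini is not None and new > mini
    ]


for name, gain in gains_over_previous_flash().items():
    print(f"{name}: +{gain} points")
print("Ahead of GPT-5 Mini on:", ", ".join(leads_over_gpt5_mini()))
```

On these numbers, Flash-Lite improves on Gemini 2.5 Flash Dynamic in all six rows and leads GPT-5 Mini on three of the five benchmarks where both have a score (GPQA Diamond, MMMU-Pro, Video-MMMU).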

⚖️ Comparison to Related Models

| Feature | Gemini 3.1 Flash-Lite | Gemini 3.1 Pro |
|---|---|---|
| Cost per token | Lower (entry tier) | Higher (premium) |
| Latency / throughput | Optimized for speed | Balanced with depth |
| Reasoning depth | Adjustable, but shallower | Stronger deep reasoning |
| Use case focus | Bulk pipelines, moderation, translation | Mission-critical reasoning tasks |
| Context window | 1M tokens | 1M tokens (same) |

Flash-Lite is tailored for scale and cost; Pro is for high-precision, deep reasoning.

🧠 Enterprise Use Cases

- High-Volume Translation & Moderation: Real-time language and content pipelines with low latency.

- Bulk Data Extraction & Classification: Large corpora processing with efficient token economics.

- UI/UX Generation: Structured JSON, dashboard templates, and front-end scaffolding.

- Simulation Prompting: Logical state tracking across extended interactions.

- Multimodal Applications: Video-, audio-, and image-informed reasoning within unified contexts.

🧪 Limitations

- Depth of reasoning and analytical precision may lag behind Gemini 3.1 Pro in complex, mission-critical tasks.

- Some benchmark results, such as the 1M long-context score, show room for improvement relative to flagship models.

- Dynamic reasoning controls trade off speed for thoroughness; not all levels guarantee the same output quality.

GPT-5.3 Chat (Alias: gpt-5.3-chat-latest) — Overview

GPT-5.3 Chat is the latest production chat model from OpenAI, offered as the gpt-5.3-chat-latest endpoint in the official API and powering ChatGPT’s day-to-day conversational experience. It focuses on improving everyday interaction quality, making responses smoother, more accurate, and better contextualized, while maintaining strong technical capabilities inherited from the broader GPT-5 family.

📊 Technical Specifications

| Specification | Details |
|---|---|
| Model name/alias | GPT-5.3 Chat / gpt-5.3-chat-latest |
| Provider | OpenAI |
| Context window | 128,000 tokens |
| Max output tokens per request | 16,384 tokens |
| Knowledge cutoff | August 31, 2025 |
| Input modalities | Text and image inputs (vision only) |
| Output modalities | Text |
| Function calling | Supported |
| Structured outputs | Supported |
| Streaming responses | Supported |
| Fine-tuning | Not supported |
| Distillation / embeddings | Distillation not supported; embeddings supported |
| Typical use endpoints | Chat completions, Responses, Assistants, Batch, Realtime |
| Function calling & tools | Function calling enabled; supports web & file search via Responses API |
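
The context window and output limit interact when you budget a long conversation. The sketch below assumes that prompt and completion tokens share the 128,000-token window, which is the usual convention but is not stated on this page; the function names are illustrative.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # from the table above
MAX_OUTPUT_TOKENS = 16_384       # from the table above


def max_prompt_tokens(reserved_output_tokens: int = MAX_OUTPUT_TOKENS) -> int:
    """Largest prompt that still leaves room for the reserved output.

    Assumes input and output tokens are counted against one shared window.
    """
    if not 0 <= reserved_output_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError("reserved output must be between 0 and the output limit")
    return CONTEXT_WINDOW_TOKENS - reserved_output_tokens


def fits(prompt_tokens: int, reserved_output_tokens: int = MAX_OUTPUT_TOKENS) -> bool:
    """Return True if the prompt leaves enough room for the reserved output."""
    return 0 <= prompt_tokens <= max_prompt_tokens(reserved_output_tokens)


print(max_prompt_tokens())  # 111616 when the full output limit is reserved
print(fits(120_000))        # False: too little room left for a full-length reply
print(fits(120_000, 4_000)) # True once the reply is capped at 4,000 tokens
```

Reserving less than the full output limit is a simple way to fit longer histories when replies are known to be short.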

🧠 What Makes GPT-5.3 Chat Unique

GPT-5.3 Chat is an incremental refinement of the chat-oriented capabilities in the GPT-5 lineage. Its core goal is to provide more natural, contextually coherent, and user-friendly conversational responses than earlier models such as GPT-5.2 Instant. Improvements target:

- Dynamic, natural tone with fewer unhelpful disclaimers and more direct answers.

- Better context understanding and relevance in common chat scenarios.

- Smoother integration with rich chat use cases including multi-turn dialogue, summarization, and conversational assistance.

GPT-5.3 Chat is recommended for developers and interactive applications that need the latest conversational improvements without the specialized reasoning depth of the forthcoming “Thinking” or “Pro” GPT-5.3 variants.

🚀 Key Features

- Large Chat Context Window: 128K tokens enables rich conversation histories and long context tracking.

- Improved Response Quality: Refined conversational flow with fewer unnecessary caveats or overly cautious refusals.

- Official API Support: Fully supported endpoints for chat, batch processing, structured outputs, and real-time workflows.

- Versatile Input Support: Accepts and contextualizes text and image inputs, suitable for multimodal chat use cases.

- Function Calling & Structured Output: Enables structured and interactive application patterns via the API.

- Broad Ecosystem Compatibility: Works with v1/chat/completions, v1/responses, Assistants, and other modern OpenAI API interfaces.
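
To show what function calling looks like in practice, here is a minimal sketch of a v1/chat/completions request body that declares one tool. The tool `get_order_status`, its parameter, and the system prompt are hypothetical; only the general payload shape (messages plus a `tools` array of function definitions with a JSON Schema) follows the Chat Completions format. Nothing is sent over the network.

```python
import json


def build_chat_payload(user_message: str) -> dict:
    """Build a chat completions body that offers the model one callable tool."""
    return {
        "model": "gpt-5.3-chat-latest",
        "messages": [
            {"role": "system", "content": "You are a concise support assistant."},
            {"role": "user", "content": user_message},
        ],
        "tools": [
            {
                "type": "function",
                "function": {
                    # Hypothetical tool, defined by the application, not by the API.
                    "name": "get_order_status",
                    "description": "Look up the shipping status of an order.",
                    "parameters": {
                        "type": "object",
                        "properties": {"order_id": {"type": "string"}},
                        "required": ["order_id"],
                    },
                },
            }
        ],
    }


payload = build_chat_payload("Where is order 1234?")
print(json.dumps(payload, indent=2))
```

When the model decides to use the tool, the application runs the function itself and returns the result in a follow-up message; the model never executes code on its own.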

📈 Typical Benchmarks & Behavior

OpenAI and independent reports show improved real-world performance:

| Metric | GPT-5.3 Instant vs GPT-5.2 Instant |
|---|---|
| Hallucination rate with web search | −26.8% |
| Hallucination rate without search | −19.7% |
| User-flagged factual errors (web) | ~−22.5% |
| User-flagged factual errors (internal) | ~−9.6% |

Notably, because GPT-5.3 focuses on real-world conversational quality, standardized benchmark scores are less of a release highlight; improvements show up most clearly in user-experience metrics rather than raw test scores.

In industry comparisons, GPT-5-family chat variants are known to outperform earlier GPT-4 models on everyday chat relevance and contextual tracking, though specialized reasoning tasks may still favor dedicated “Pro” variants or reasoning-optimized endpoints.

🤖 Use Cases

GPT-5.3 Chat is well-suited for:

- Customer support bots and conversational assistants

- Interactive tutorial or educational agents

- Summarization and conversational search

- Internal knowledge agents and team chat helpers

- Multimodal Q&A (text + images)

Its balance of conversational quality and API versatility makes it ideal for interactive applications that combine natural dialogue with structured data outputs.

🔍 Limitations

- Not the deepest reasoning variant: For mission-critical, high-stakes analytical depth, forthcoming GPT-5.3 Thinking or Pro models may be more appropriate.

- Multimodal outputs limited: While input images are supported, full image/video generation or rich multimodal output workflows are not the primary focus of this variant.

- Fine-tuning is not supported: You cannot fine-tune this model, though you can steer behavior via system prompts.

How to Access the Gemini 3.1 Flash-Lite API

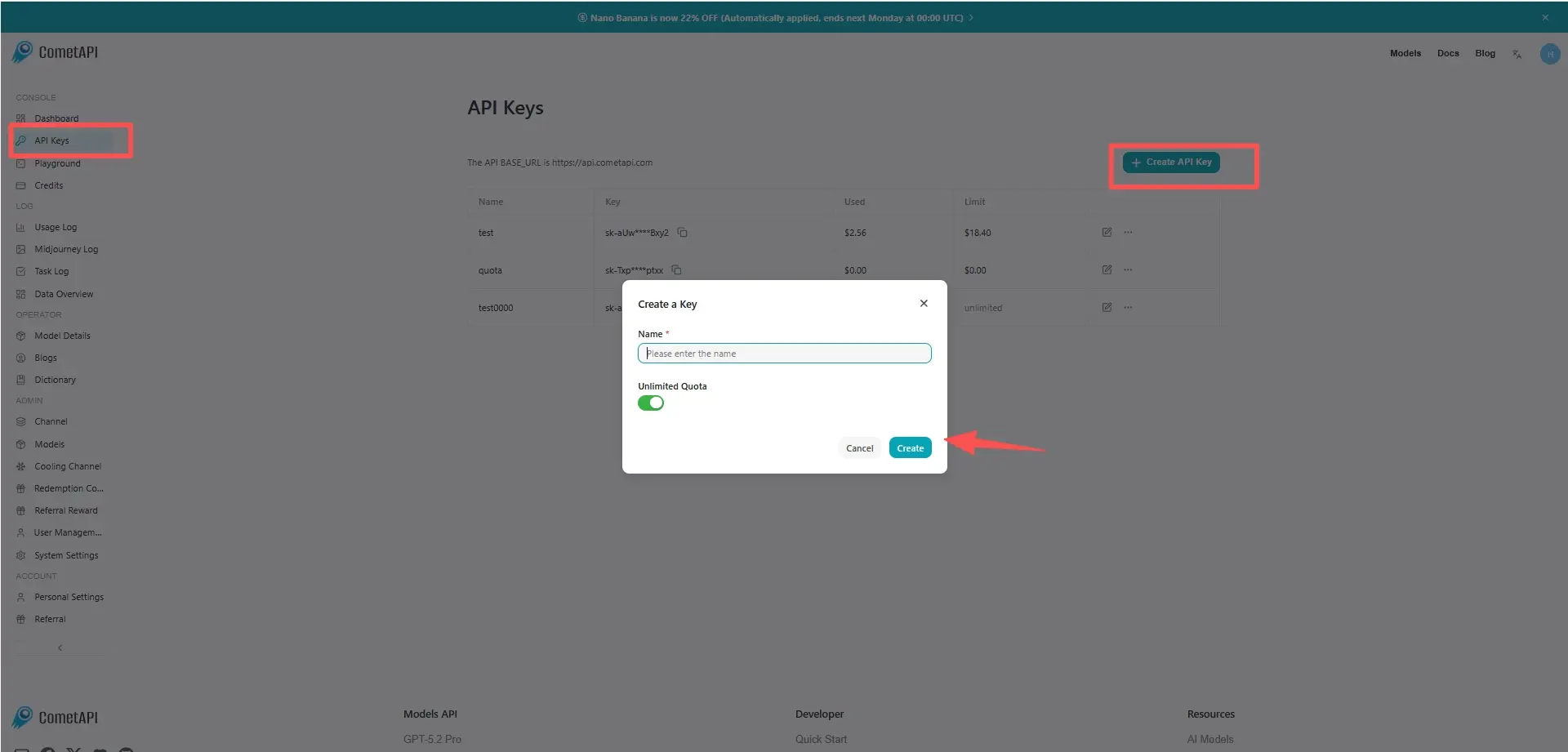

Step 1: Sign Up for API Key

Log in to cometapi.com. If you do not have an account yet, register first. In your CometAPI console, open the API token section of the personal center, click “Add Token”, and submit to obtain your API key (of the form sk-xxxxx).

Step 2: Send Requests to the Gemini 3.1 Flash-Lite API

Select the “gemini-3.1-flash-lite” endpoint to send the API request and set the request body. The request method and request body are documented in our website API doc, and our website also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. The base URL is listed under Gemini Generating Content.

Insert your question or request into the content field; this is what the model will respond to.
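
As an illustration of this step, the sketch below assembles a request without sending it. The base URL is a placeholder, and the URL path, the `Authorization: Bearer` header, and the body layout are assumptions modeled on the Gemini generateContent format; take the real values from the API doc.

```python
import json
import urllib.request

# Placeholder only: take the real base URL from the API doc.
BASE_URL = "https://api.example.invalid"
MODEL = "gemini-3.1-flash-lite"


def build_generate_request(api_key: str, question: str) -> urllib.request.Request:
    """Assemble (but do not send) a generateContent-style POST request."""
    # Assumed path, modeled on the Gemini API's generateContent route.
    url = f"{BASE_URL}/v1beta/models/{MODEL}:generateContent"
    # The question goes into the content field of the request body.
    body = {"contents": [{"role": "user", "parts": [{"text": question}]}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumed auth scheme; confirm the header name in the API doc.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_generate_request("<YOUR_API_KEY>", "Summarize this paragraph: ...")
print(request.get_method(), request.full_url)
# To send it once the real base URL is set: urllib.request.urlopen(request)
```

Building the request separately from sending it makes it easy to inspect the URL and body before spending any tokens.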

Step 3: Retrieve and Verify Results

After processing the request, the API responds with the task status and output data. Parse the response to get the generated answer.
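
A minimal parsing sketch follows. It assumes the response uses the Gemini generateContent layout (`candidates`, `content`, `parts`, `finishReason`); the sample response is hand-written for illustration and is not real model output.

```python
def extract_answer(response: dict) -> tuple:
    """Return (finish_reason, text) from a generateContent-style response.

    Assumes the Gemini response layout; adjust the keys if the API doc differs.
    """
    candidates = response.get("candidates") or []
    if not candidates:
        raise ValueError("response contains no candidates")
    first = candidates[0]
    parts = first.get("content", {}).get("parts", [])
    # A reply may be split across several parts; join the text pieces in order.
    text = "".join(part.get("text", "") for part in parts)
    return first.get("finishReason", "UNKNOWN"), text


# Hand-written sample in the assumed layout, for illustration only.
sample = {
    "candidates": [
        {
            "content": {"role": "model", "parts": [{"text": "Hello, "}, {"text": "world."}]},
            "finishReason": "STOP",
        }
    ]
}
status, answer = extract_answer(sample)
print(status, "->", answer)
```

Checking the finish status before using the text helps catch truncated or blocked responses early.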