Xiaomi expanded MiMo from a single model release into a three-model lineup aimed at different product needs. Flash arrived on December 16, 2025 as an open-sourced MoE model for reasoning, coding, and agentic tasks, while Pro and Omni were officially unveiled on March 18, 2026 as the flagship reasoning model and the full multimodal model, respectively.

What Is MiMo V2 and Why Does It Matter?

Xiaomi’s MiMo V2 series represents the Chinese tech giant’s push into frontier AI foundation models optimized for real-world agentic workloads. Released in phases (Flash in December 2025, followed by Pro and Omni on March 18, 2026), the lineup relies on a Mixture-of-Experts (MoE) architecture for efficiency: a very large total parameter count, with only a small fraction of those parameters active for each token during inference.
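To make the “large total, few active” idea concrete, here is a toy sketch of generic top-k expert routing. It illustrates the MoE technique in general, not Xiaomi’s router: the expert count (8), k = 2, the random gate, and the scaling “experts” are all illustrative assumptions. The point it shows is that only the selected experts run for a given token.

```python
import math
import random

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(token, experts, gate, k=2):
    """Send one token through only the k highest-scoring experts."""
    # Router: one score per expert (dot product with that expert's gate row).
    scores = [sum(t * w for t, w in zip(token, row)) for row in gate]
    # Keep the top-k experts; all others are skipped entirely for this token.
    top_k = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Renormalize the selected scores into mixing weights that sum to 1.
    weights = softmax([scores[i] for i in top_k])
    out = [0.0] * len(token)
    for w, i in zip(weights, top_k):
        y = experts[i](token)
        out = [o + w * v for o, v in zip(out, y)]
    return out, top_k

calls = []  # records which experts actually ran

def make_expert(idx, scale):
    """Toy expert that scales its input (a real expert would be a feed-forward block)."""
    def expert(x):
        calls.append(idx)
        return [scale * v for v in x]
    return expert

random.seed(0)
NUM_EXPERTS, DIM = 8, 4
experts = [make_expert(i, i + 1) for i in range(NUM_EXPERTS)]
gate = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
token = [0.5, -0.2, 0.9, 0.1]

output, chosen = moe_layer(token, experts, gate, k=2)
print(f"ran experts {chosen}: {len(calls)} of {NUM_EXPERTS} evaluated")
```

Production MoE models apply the same select-then-mix pattern per token inside each MoE layer, which is how total and active parameter counts can differ by an order of magnitude or more.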

- MiMo-V2-Omni (the “eyes and ears”): a unified multimodal model fusing text, vision, video, and extended audio.

- MiMo-V2-Flash (the “fast worker”): lightweight, open-source, and ultra-affordable.

- MiMo-V2-Pro (the “reasoning flagship”): a trillion-parameter brain for complex, multi-step tasks.

All models emphasize tool calling, long-context reasoning, and integration with agent frameworks like OpenClaw, OpenCode, and KiloCode. They do so at prices often 5–10x lower than comparable models from OpenAI, Anthropic, or Google, while ranking among the global and Chinese leaders on key benchmarks.

MiMo V2-Omni vs MiMo V2-Pro vs MiMo V2-Flash: Quick Comparison

| Feature / Metric | MiMo-V2-Flash | MiMo-V2-Pro | MiMo-V2-Omni |
|---|---|---|---|
| Release | Dec 16, 2025 | Mar 18, 2026 | Mar 18, 2026 |
| Parameters | 309B total / 15B active (MoE) | ~1T total / 42B active (MoE) | Multimodal (exact parameter count undisclosed) |
| Context Window | 256K tokens | 1M tokens (tiered pricing) | 256K tokens |
| Primary Strength | Speed & cost (coding/agents) | Reasoning & complex agents | Multimodal perception (vision/audio) |
| Benchmarks (Key Examples) | SWE-bench Verified: 73.4% (#1 open-source); Artificial Analysis Intelligence Index: ~41 | ClawEval: 61.5 (#3 global); PinchBench: 81.0; global rank #7–8 | Strong in vision/audio tasks (e.g., browser shopping, hazard detection) |
| Official Pricing (per 1M tokens) | $0.09 input / $0.29 output | ≤256K: $1 input / $3 output; >256K: $2 / $6 | $0.40 input / $2 output |
| Open-Source | Yes (MIT license, on Hugging Face) | No (API only) | No (API only) |
| Best For | High-volume, fast tasks | Production agents & long workflows | Vision/audio + text agents |
| Inference Speed | ~150 tokens/s | High (Multi-Token Prediction optimized) | Multimodal latency ~2–5 s |

What Are MiMo-V2-Omni, MiMo-V2-Pro, and MiMo-V2-Flash?

What Is MiMo-V2-Flash? The Efficiency-First Model

MiMo-V2-Flash is the earliest and best-known member of the family. On its Hugging Face model card, Xiaomi describes it as a Mixture-of-Experts model with 309B total parameters and 15B active parameters that uses hybrid attention and Multi-Token Prediction (MTP) to raise output speed and cut inference cost. It was trained on 27T tokens with FP8 mixed precision, supports up to 256K tokens of context, and is optimized for high-speed reasoning and agentic workflows.
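A quick calculation puts the total-versus-active split in perspective. The sketch below uses the published counts (309B/15B for Flash and 1T/42B for Pro, treating Pro’s “more than 1T” as exactly 1,000B, which is an assumption) to show what share of each model’s weights participates in any single token.

```python
# Published parameter counts in billions; Pro's ">1T" is approximated as 1,000B.
MODELS = {
    "MiMo-V2-Flash": {"total_b": 309, "active_b": 15},
    "MiMo-V2-Pro": {"total_b": 1000, "active_b": 42},
}

def active_share(total_b, active_b):
    """Fraction of the weights used to compute each token."""
    return active_b / total_b

for name, p in MODELS.items():
    share = active_share(p["total_b"], p["active_b"])
    print(f"{name}: {p['active_b']}B of {p['total_b']}B active per token ({share:.1%})")
```

Both models touch under 5% of their weights per token (about 4.9% for Flash and 4.2% for Pro). Per-token compute tracks the active count, while the memory needed to host the weights still scales with the total.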

In practice, Flash is the most balanced “everyday” MiMo model for text-heavy use cases. It is strong at long-context reasoning, coding help, and agent workflows, and it ranks #1 among open-source models globally on SWE-bench Verified and SWE-bench Multilingual while costing only about 3.5% as much as Claude Sonnet 4.5. That combination makes Flash the natural starting point if you want to test the family without burning through your budget.

What Is MiMo-V2-Pro? The Flagship Agent Brain

MiMo-V2-Pro is the flagship text-first model in the family. Xiaomi says it has more than 1T total parameters, 42B active parameters, an expanded Hybrid Attention ratio of 7:1, and a 1M-token context window. According to Xiaomi, its coding ability surpasses Claude Sonnet 4.6, while its general agent performance on ClawEval approaches Claude Opus 4.6. Importantly, Xiaomi says tool-call stability and accuracy have been significantly improved, which is exactly the kind of signal developers look for when moving from demos to production.
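Xiaomi does not spell out here what the 7:1 Hybrid Attention ratio counts. A common reading for hybrid designs is seven sliding-window (local) attention layers for every global (full) attention layer; the sketch below assumes exactly that, with global layers placed periodically. The 16-layer depth and 128-token window are hypothetical values chosen for illustration, not Pro’s actual configuration.

```python
def layer_schedule(num_layers, local_per_global):
    """Mark every (local_per_global + 1)-th layer as global, the rest as local."""
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local" for i in range(num_layers)]

def attends(query_pos, key_pos, kind, window):
    """Causal mask: global layers see all earlier tokens, local layers only the last `window`."""
    if key_pos > query_pos:
        return False  # never attend to future tokens
    if kind == "global":
        return True
    return query_pos - key_pos < window

def kv_cache_entries(schedule, context_len, window):
    """Cached key/value positions for one sequence, summed over all layers."""
    return sum(context_len if kind == "global" else min(context_len, window) for kind in schedule)

WINDOW = 128  # hypothetical sliding-window size
schedule = layer_schedule(num_layers=16, local_per_global=7)  # hypothetical depth, 7:1 ratio
hybrid = kv_cache_entries(schedule, 1_000_000, WINDOW)
full = kv_cache_entries(["global"] * len(schedule), 1_000_000, WINDOW)
print(schedule.count("local"), ":", schedule.count("global"), "local:global layers")
print(f"KV entries at 1M context: hybrid {hybrid:,} vs all-global {full:,}")
```

Under these assumptions, local layers cache at most 128 positions however long the prompt is, so only the global layers grow with context. At 1M tokens that is about 2.0M cached positions instead of 16M for an all-global stack, which illustrates why a higher local-to-global ratio helps make a 1M-token window affordable.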

What Is MiMo-V2-Omni? The Multimodal Agent Model

MiMo-V2-Omni is Xiaomi’s multimodal answer to the agent problem. It fuses image, video, and audio encoders into a single shared backbone, so the model can see, hear, and read as one perceptual stream. Xiaomi also says it natively supports structured tool calling, function execution, and UI grounding, which is why Omni is positioned as an agent model rather than a general-purpose multimodal chatbot.
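What “structured tool calling” looks like on the wire depends on the provider. The sketch below assumes an OpenAI-style chat-completions schema (a `tools` array of JSON-Schema function definitions, `image_url` content parts, and `tool_calls` in the response), which many aggregators expose; whether a given MiMo endpoint accepts exactly this shape is an assumption to verify. The model ID `mimo-v2-omni`, the `click_element` tool, and the sample response are hypothetical. The code builds a screenshot-plus-instruction request and dispatches returned tool calls to local handlers, without making any network call.

```python
import json

def build_omni_request(screenshot_url, instruction):
    """Pair a screenshot with an instruction and one UI-action tool (OpenAI-style schema assumed)."""
    return {
        "model": "mimo-v2-omni",  # hypothetical model ID; check your provider's catalog
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": instruction},
                    {"type": "image_url", "image_url": {"url": screenshot_url}},
                ],
            }
        ],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "click_element",  # hypothetical tool provided by your agent harness
                    "description": "Click the UI element at the given screen coordinates.",
                    "parameters": {
                        "type": "object",
                        "properties": {"x": {"type": "integer"}, "y": {"type": "integer"}},
                        "required": ["x", "y"],
                    },
                },
            }
        ],
    }

def dispatch_tool_calls(response, handlers):
    """Execute every tool call in an OpenAI-style response with a local handler."""
    results = []
    for call in response["choices"][0]["message"].get("tool_calls") or []:
        fn = call["function"]
        args = json.loads(fn["arguments"])  # arguments arrive as a JSON-encoded string
        results.append(handlers[fn["name"]](**args))
    return results

payload = build_omni_request("https://example.com/checkout.png", "Click the 'Place order' button.")
body = json.dumps(payload)  # what you would POST to the provider's chat endpoint

# Hypothetical model reply requesting one click.
fake_response = {
    "choices": [{"message": {"tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {"name": "click_element", "arguments": '{"x": 412, "y": 688}'},
    }]}}]
}
handlers = {"click_element": lambda x, y: f"clicked ({x}, {y})"}
print(dispatch_tool_calls(fake_response, handlers))
```

Keeping tool execution in local handlers means the model only proposes actions; your harness (OpenClaw or your own) decides whether to run them.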

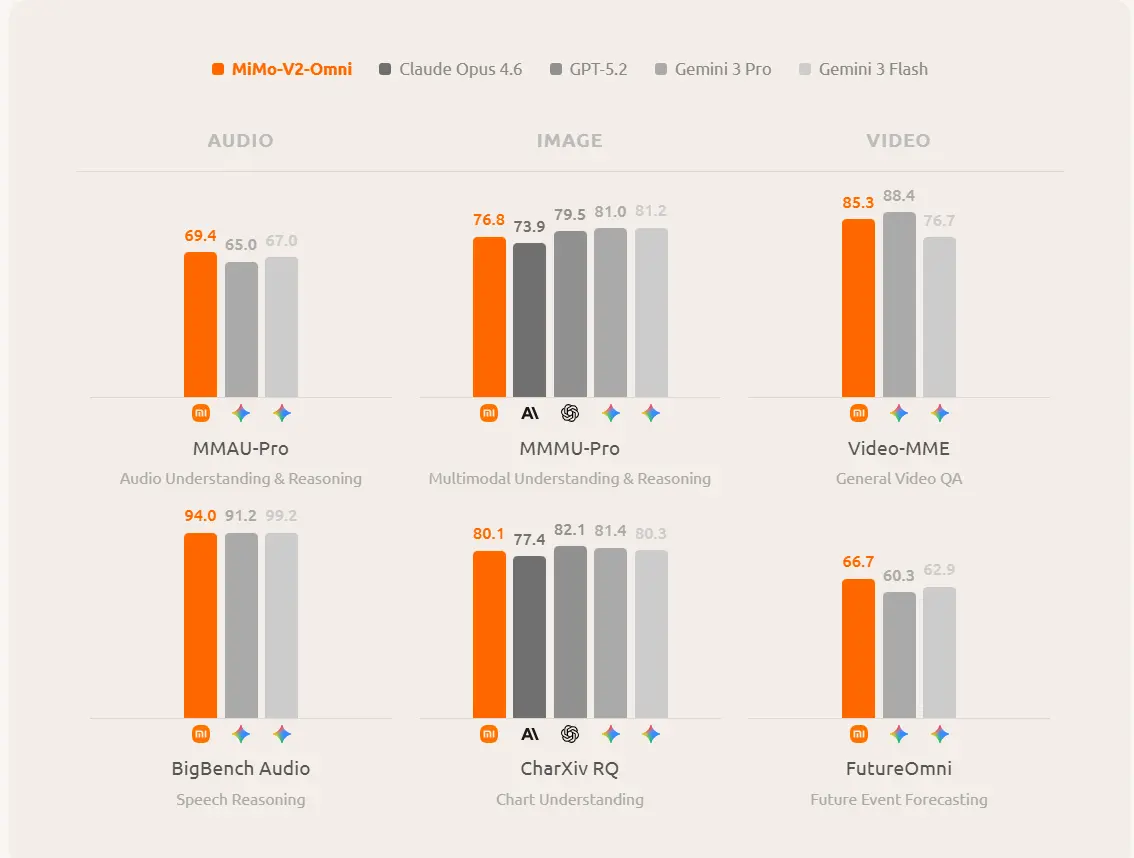

In audio, Omni goes beyond transcription: Xiaomi says it handles continuous audio longer than 10 hours and outperforms Gemini 3 Pro on audio tasks. On image understanding, it reportedly exceeds Claude Opus 4.6 and reaches the level of top closed-source models such as Gemini 3. Omni also performs strongly on browser and mobile workflows, and its agent demos were run with OpenClaw handling browser control, file system access, and terminal interaction.

For agentic coding specifically, a common split is Flash for speed and cost, and Pro for reliability in production.

MiMo V2 API Pricing 2026

Pricing Comparison (per 1M tokens)

| Model | Input Price | Output Price | Context Tiering Notes | Blended Cost Example (100K Input + 10K Output) |
|---|---|---|---|---|
| Flash | $0.09 – $0.10 | $0.29 – $0.30 | Flat rate | ~$0.012 – $0.013 |
| Pro | $1.00 (≤256K); $2.00 (256K–1M) | $3.00 (≤256K); $6.00 (256K–1M) | Tiered by context length; cache pricing available | ~$0.13 at ≤256K rates; ~$0.26 at >256K rates |
| Omni | $0.40 | $2.00 | Flat rate (multimodal tokens billed accordingly) | ~$0.06 |

Examples:

- Flash wins for high-volume simple tasks (e.g., 1M tokens a day costs between $0.09 and $0.29, depending on the input/output mix).

- Omni offers strong value for multimodal workloads (cheaper than Gemini 3.1 equivalents).

- Pro is ~1/5–1/6 the price of Claude Sonnet 4.6 while matching or exceeding it in many agentic/coding benchmarks. Cache pricing further reduces long-context costs.
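The blended-cost column can be reproduced with a few lines of arithmetic. This sketch uses the official list prices ($0.09/$0.29 for Flash, $0.40/$2.00 for Omni, and Pro’s two tiers) and assumes Pro’s tier is selected by the request’s input length; cache discounts are not modeled. Note that a 100K-token prompt falls in Pro’s ≤256K tier, so the ~$0.26 figure reflects the >256K rates, which only kick in for longer requests.

```python
# Official list prices in USD per 1M tokens: (input, output).
PRICES = {
    "flash": (0.09, 0.29),
    "omni": (0.40, 2.00),
    "pro_le_256k": (1.00, 3.00),
    "pro_gt_256k": (2.00, 6.00),
}
PRO_TIER_LIMIT = 256_000  # assumption: tier chosen by input (prompt) length

def request_cost(model, input_tokens, output_tokens):
    """USD cost of one request; cache discounts are ignored."""
    key = model
    if model == "pro":
        key = "pro_le_256k" if input_tokens <= PRO_TIER_LIMIT else "pro_gt_256k"
    in_price, out_price = PRICES[key]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000

# Reproduce the table's example: 100K input + 10K output tokens.
for name in ("flash", "pro", "omni"):
    print(f"{name}: ${request_cost(name, 100_000, 10_000):.4f}")
```

Under this assumption, a request with 300K input tokens and the same 10K-token response costs about $0.66 on Pro, because the whole request moves to the higher tier.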

What Does the MiMo V2 API Cost on CometAPI?

On CometAPI, MiMo API access is priced about 20% below the official rates, and MiMo-V2-Pro, MiMo-V2-Omni, and MiMo-V2-Flash can also be used in OpenClaw. For example, at MiMo-V2-Pro’s ≤256K tier:

| Comet Price (USD / 1M tokens) | Official Price (USD / 1M tokens) | Discount |
|---|---|---|
| Input: $0.80; Output: $2.40 | Input: $1.00; Output: $3.00 | 20% |

The important caveat is that “cheapest” does not always mean “best value.” Pro can be the most cost-effective choice when a single model call replaces several retries, tool calls, or human interventions. Omni can be the better bargain when multimodal grounding avoids building separate OCR, audio, and vision pipelines. Flash is the value leader when you need high volume and predictable spending.

Performance Benchmark Comparison

General Intelligence & Reasoning Benchmarks

| Benchmark | MiMo-V2-Flash | MiMo-V2-Pro | MiMo-V2-Omni | Notes / Comparison Context |
|---|---|---|---|---|
| Artificial Analysis Intelligence Index | 39–41 | 49 (Global #8, Chinese #2) | Not primary focus | Pro shows significant leap over Flash |
| AIME 2025 (Math) | 94.1% | ~94.0% | N/A | Flash highly competitive for its size |
| Hallucination Rate | ~48% | ~30% | N/A | Pro demonstrates improved reliability |
| LongBench V2 (Long Context) | 60.6 | Strong (1M context advantage) | N/A | Pro excels in ultra-long tasks |

Coding & Agentic Benchmarks

| Benchmark | MiMo-V2-Flash | MiMo-V2-Pro | MiMo-V2-Omni | Comparison Highlights |
|---|---|---|---|---|
| SWE-Bench Verified | 73.4% (Top open-source) | 78.0% | ~74.8% | Pro leads; Flash #1 among open models |
| SWE-Bench Multilingual | 71.7% | 57.1% (multilingual variant) | N/A | Flash particularly strong here |
| ClawEval (Agentic Tool Use) | 48.1 – 62.1 | 61.5 – 81.0 | 52.0 – 54.8 | Pro often matches/exceeds Claude Sonnet 4.6 in coding scenarios |
| GDPVal-AA / PinchBench | 1040 – 1426 range | 1426 | 81.2 (variant) | Pro strong in real-world agent tasks |
| OmniGAIA / Multi-Modal Agent | N/A | N/A | 54.8 | Omni competitive in multimodal agents |

Multimodal Benchmarks (Omni-Focused)

| Benchmark | MiMo-V2-Omni Score | Notable Competitors | Highlights |
|---|---|---|---|
| MMAU-Pro (Audio) | 76.8 | Claude Opus 4.6 (73.9) | Omni leads |
| BigBench Audio / Speech Reasoning | Up to 80.1 – 94.0 | Varies | Strong long-audio capability (10+ hours) |
| MMMU-Pro (Image) | 85.3 | Varies (edges some leaders) | Excellent chart & visual understanding |
| Video-MME | 94.0 | Strong vs. Gemini 3 Pro in select areas | High video event forecasting |
| CharXiv (Charts) | 66.7 | Beats Gemini 3 Pro in some reports | Solid structured visual reasoning |

Performance Comparison: Which is Better?

For reasoning and coding, MiMo-V2-Flash looks extremely strong on paper. It posts top-tier results on AIME 2025, GPQA-Diamond, SWE-bench Verified, and SWE-bench Multilingual, and Xiaomi positions it as the top open-source model globally on SWE-bench Verified, comparable to Claude Sonnet 4.5 at about 3.5% of the cost. That makes Flash the standout for developers who care about throughput and cost efficiency.

For pure agentic control, Pro is the flagship. Xiaomi emphasizes tool-call stability, long-horizon task planning, and production engineering workflows, with a 1M-token context window that is especially useful in large codebases, multi-document analysis, and long-running browser or tool chains.

For multimodal perception, Omni is the one that clearly changes the shape of the product. Its differentiator is not “being a little better at chat”; it is native image, video, and audio understanding combined with tool use and UI grounding. If your product needs to look at screenshots, parse charts, inspect video, listen to audio, or drive an interface, Omni is the only model in the trio that is purpose-built for that stack.

Across intelligence, coding, agentic, and multimodal metrics, the models carve distinct niches:

- Reasoning/Intelligence: Pro leads (AA Index 49); Flash is competitive for its size; Omni is strong in cross-modal reasoning.

- Coding/Agentic: Pro often surpasses Claude Sonnet 4.6 (SWE-Bench, ClawEval); Omni close behind in multimodal agents; Flash tops open-source.

- Speed: Flash is fastest thanks to its small active parameter count (15B).

- Context: Pro dominates at 1M tokens.

- Multimodal: Omni is unmatched in the family.

Pro and Omni deliver 5–10x cost savings versus U.S. frontier models while ranking top-10 globally. Flash provides near-equivalent open-source performance at 1/10th the price of many closed models.

How Should You Choose?

Choose MiMo V2 Pro if…

you need the best shot at long-horizon, high-stakes agent work: large software tasks, deep workflow orchestration, big context windows, and robust tool use. Pro is the right pick when performance matters more than per-token cost and when the task is mostly text or structured tool interaction rather than images and audio.

Choose MiMo V2 Omni if…

your product needs multimodal perception as a first-class feature: screenshots, dashboards, photos, videos, audio, browser state, or cross-device action. Omni is the sweet spot for “see, hear, act” applications and is easier to justify than Pro if you do not need the 1M-token flagship context.

Choose MiMo V2 Flash if…

you want the best value. Flash is the best candidate for coding copilots, batch agents, high-volume support, internal automation, and experiments where open-source weights, speed, and low cost matter. It is also the easiest model in the lineup to defend in a budget review, because the published token prices are dramatically lower than the other two.

Key Differences & When Each Model Shines

| Factor | Flash (Best For) | Pro (Best For) | Omni (Best For) |
|---|---|---|---|
| Budget | Extreme low-cost / high volume | High-value reasoning | Multimodal value |
| Task Type | Simple queries, local deployment | Complex agents, coding, planning | Vision/video/audio + agents |
| Context | Medium (256K) | Longest (1M) | Medium (256K) |
| Open-Source | Yes | No | No |
| Speed | Fastest | Balanced | Balanced (multimodal overhead) |

Decision Framework

Step 1: Do you need multimodal (images/video/audio)? → Omni ($0.40/$2.00).

Step 2: Pure text + maximum reasoning/agentic power? → Pro ($1–2/$3–6).

Step 3: Budget, speed, or self-hosting critical? → Flash ($0.09/$0.29, open-source).
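The three steps translate directly into a routing function. This is a minimal sketch, not an official tool: the `Task` fields are hypothetical, and two extra rules come from the comparison table (only Flash has open weights, and prompts over 256K tokens need Pro’s 1M window). The returned names are labels, not API model IDs.

```python
from dataclasses import dataclass

FLASH_CONTEXT_LIMIT = 256_000  # from the comparison table

@dataclass
class Task:
    multimodal: bool = False           # images, video, or audio in the input
    needs_max_reasoning: bool = False  # long-horizon, high-stakes agentic work
    must_self_host: bool = False       # weights must run on your own hardware
    context_tokens: int = 0            # expected prompt size

def pick_model(task: Task) -> str:
    """Literal encoding of the three-step framework plus two table constraints."""
    if task.multimodal:  # Step 1
        return "omni"
    if task.must_self_host:  # only Flash has open weights
        return "flash"
    if task.needs_max_reasoning or task.context_tokens > FLASH_CONTEXT_LIMIT:
        return "pro"  # Step 2, or a prompt too large for a 256K window
    return "flash"  # Step 3: default to budget and speed

for t in (Task(multimodal=True), Task(needs_max_reasoning=True), Task(context_tokens=600_000), Task()):
    print(t, "->", pick_model(t))
```

Checking the steps in order keeps precedence explicit: multimodal input always wins, and self-hosting overrides the reasoning preference because Flash is the only open-weight option.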

Hybrid Strategy (recommended by API providers): Use Flash for routine tasks (roughly 80% of traffic), route complex reasoning to Pro, and send multimodal work to Omni, all through a single API key (e.g., via CometAPI). This keeps costs low while giving you access to the full family.
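To estimate what the hybrid strategy saves, weight each model’s per-request cost by its share of traffic. The 80/15/5 split below is a hypothetical reading of “80% Flash” (the text does not say how the remaining 20% divides between Pro and Omni), the request size reuses the 100K-input/10K-output example from the pricing table, and Pro is priced at its ≤256K tier.

```python
# Official per-1M-token prices (USD): (input, output). Pro uses its <=256K tier.
PRICES = {"flash": (0.09, 0.29), "pro": (1.00, 3.00), "omni": (0.40, 2.00)}

def request_cost(model, input_tokens, output_tokens):
    """USD cost of a single request on one model."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000

def blended_cost(mix, input_tokens, output_tokens):
    """Average per-request cost when traffic is split across models by `mix`."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * request_cost(m, input_tokens, output_tokens) for m, share in mix.items())

mix = {"flash": 0.80, "pro": 0.15, "omni": 0.05}  # hypothetical traffic split
hybrid = blended_cost(mix, 100_000, 10_000)
all_pro = request_cost("pro", 100_000, 10_000)
print(f"hybrid: ${hybrid:.4f}/request, all-Pro: ${all_pro:.4f}/request")
```

Under these assumptions the hybrid mix averages about $0.032 per request, roughly a quarter of the $0.13 an all-Pro deployment would pay.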

Final Verdict: Your Personalized Recommendation

MiMo V2 is Xiaomi’s way of saying it wants a full AI stack, not just a single hero model. Pro is the flagship reasoning engine, Omni is the multimodal operator, and Flash is the efficient open-source workhorse. The best choice depends less on raw benchmark bragging and more on your workload shape: text-heavy agents point to Flash or Pro, multimodal systems point to Omni, and giant-context production workflows point to Pro.

The MiMo V2 family shows that high-performance AI no longer requires premium Western pricing. Most users can start with Flash (or Omni for multimodal work), scale to Pro as their needs grow, and keep an eye on Xiaomi’s roadmap for further breakthroughs.

Ready to test? Access all three through platforms like CometAPI with a single key. Experiment today: the right choice could transform your AI productivity overnight.