MiMo-V2-Omni Overview

MiMo-V2-Omni is Xiaomi MiMo’s omni foundation model for the API platform, built to see, hear, read, and act in the same workflow. Xiaomi positions it as a multimodal agent model that combines image, video, audio, and text understanding with structured tool calling, function execution, and UI grounding.

Technical specifications

| Item | MiMo-V2-Omni |
|---|---|
| Provider | Xiaomi MiMo |
| Model family | MiMo-V2 |
| Modality | Image, video, audio, text |
| Output type | Text |
| Native audio support | Yes |
| Native audio-video joint input | Yes |
| Structured tool calling | Yes |
| Function execution | Yes |
| UI grounding | Yes |
| Long audio handling | Over 10 hours continuous audio understanding |
| Release date | 2026-03-18 |
| Public numeric context length | Not stated on the official Omni page |

What is MiMo-V2-Omni?

MiMo-V2-Omni is designed for agentic systems that need perception and action in one model. Xiaomi says the model fuses dedicated image, video, and audio encoders into one shared backbone, then trains it to anticipate what should happen next rather than only describe what is already visible.

Main features of MiMo-V2-Omni

- Unified multimodal perception: image, video, audio, and text are handled as one perceptual stream rather than separate add-ons.

- Agent-ready outputs: the model natively supports structured tool calling, function execution, and UI grounding for real agent frameworks.

- Long-form audio understanding: Xiaomi claims it can handle continuous audio longer than 10 hours, which is unusually strong for a general omni model.

- Native audio-video reasoning: the official page highlights joint audio-video input for video comprehension instead of a text-only transcript pipeline.

- Browser and workflow execution: Xiaomi demonstrates end-to-end browser shopping and TikTok upload flows using MiMo-V2-Omni plus OpenClaw.

- Perception-to-action framing: the model is trained to connect what it sees with what it should do next, which is the core difference between a demo model and an agentic model.
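
To make the "structured tool calling" and "function execution" items above more concrete, here is a minimal sketch of how an agent framework might route one tool call to a local function. The JSON shape (`name` plus `arguments`), the `open_url` and `click` tools, and the `dispatch` helper are all hypothetical and chosen for illustration; none of them come from Xiaomi's documentation, and the real response format may differ.

```python
import json
from typing import Callable, Dict

# Hypothetical tools: names and signatures are illustrative only.
def open_url(url: str) -> str:
    return f"opened {url}"

def click(x: int, y: int) -> str:
    # A UI-grounding step would supply these screen coordinates.
    return f"clicked at ({x}, {y})"

TOOLS: Dict[str, Callable[..., str]] = {"open_url": open_url, "click": click}

def dispatch(tool_call_json: str) -> str:
    """Parse one structured tool call and run the matching local function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**args)

# Assumed (not documented) model output: a single JSON tool call.
model_output = '{"name": "click", "arguments": {"x": 120, "y": 48}}'
result = dispatch(model_output)
```

Rejecting unknown tool names before calling anything keeps the loop from executing an action the framework never registered, which matters once the model is driving a real browser or UI.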

Benchmark performance

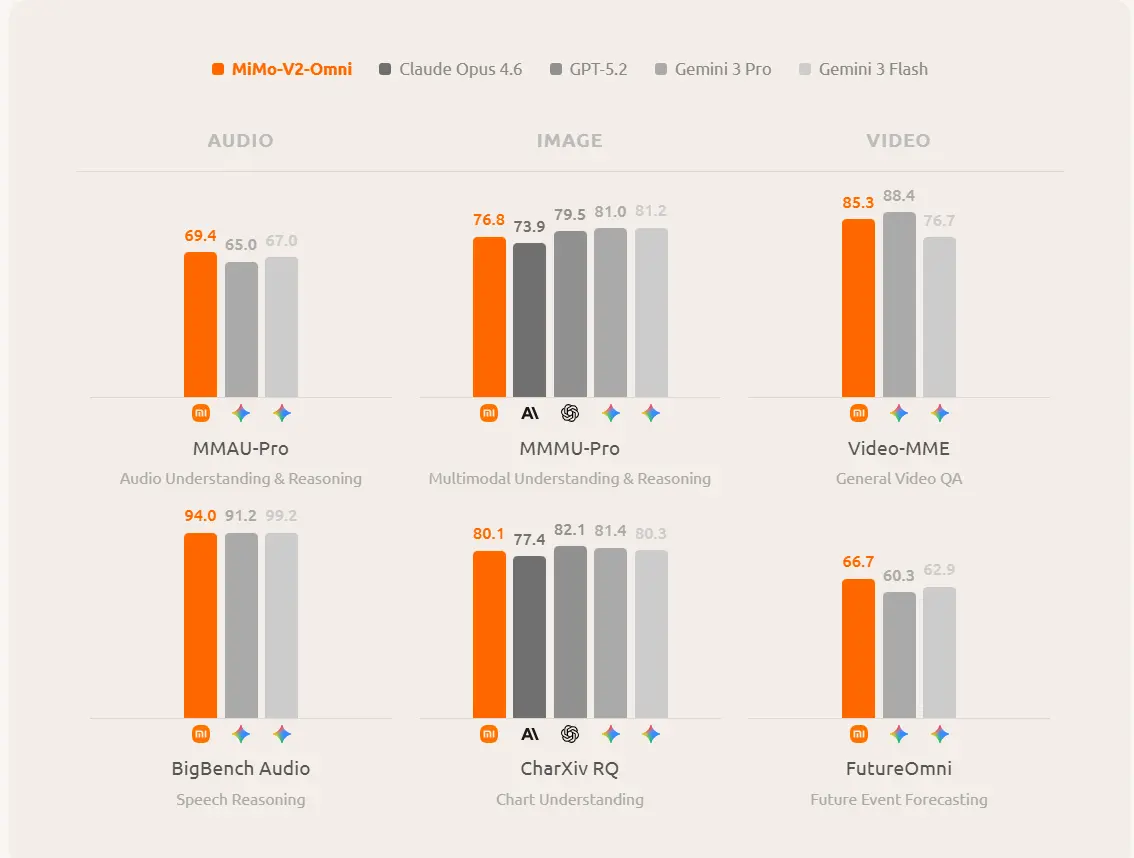

The official page states that Omni exceeds Gemini 3 Pro on audio understanding, exceeds Claude Opus 4.6 on image understanding, and performs on par with the strongest reasoning models on agentic productivity benchmarks.

MiMo-V2-Omni vs MiMo-V2-Pro vs MiMo-V2-Flash

| Model | Core strength | Context / scale | Best fit |
|---|---|---|---|
| MiMo-V2-Omni | Multimodal perception + agent action | Public context length not stated on the Omni page | Audio, image, video, UI, and browser agents |
| MiMo-V2-Pro | Largest flagship agent model | Up to 1M-token context; 1T+ params, 42B active | Heavy agent orchestration and long-horizon work |
| MiMo-V2-Flash | Fast reasoning and coding | 256K context; 309B total, 15B active | Efficient reasoning, coding, and high-throughput agent tasks |

Best use cases

MiMo-V2-Omni is the right pick when your workflow depends on non-text inputs or on acting on what the model perceives: screen understanding, voice and audio analysis, video review, browser automation, multimodal assistants, and robotics-style agent loops. If your workload is mostly text-only, the sibling models are the more obvious alternatives: Flash when raw speed matters most, Pro when you need maximum context.
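
The selection guidance above can be written down as a small routing heuristic. This is only a sketch: the `Workload` fields and the `pick_model` function are hypothetical, the returned strings are display names rather than confirmed API model identifiers, and falling back to Flash for ordinary text work is an assumption, not a recommendation from Xiaomi.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    # Hypothetical flags describing a task; not part of any MiMo API.
    has_non_text_input: bool = False   # image, video, audio, or screen input
    needs_long_context: bool = False   # e.g. long-horizon, very large prompts

def pick_model(w: Workload) -> str:
    """Return a model display name following the comparison table."""
    if w.has_non_text_input:
        return "MiMo-V2-Omni"   # the sibling best fit for audio, image, video, UI
    if w.needs_long_context:
        return "MiMo-V2-Pro"    # up to 1M-token context per the table
    return "MiMo-V2-Flash"      # assumed default for fast text-only work

choice = pick_model(Workload(has_non_text_input=True))
```

Checking for non-text input first reflects the table: Omni is listed as the best fit for audio, image, video, and UI work, so that requirement takes priority over context length or speed.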