Technical specifications (quick reference table)

| Item | Qwen3.5-122B-A10B | Qwen3.5-27B | Qwen3.5-35B-A3B | Qwen3.5-Flash (hosted) |
|---|---|---|---|---|
| Parameter scale | ~122B total, ~10B active (MoE) | ~27B (dense) | ~35B total, ~3B active (MoE) | Same weights as 35B-A3B (hosted) |
| Architecture notes | Hybrid: gated delta networks + sparse MoE | Dense transformer | Sparse Mixture-of-Experts variant (A3B) | Same architecture as 35B-A3B, plus production features |
| Input / output modalities | Text + vision (early-fusion multimodal tokens); chat-style I/O | Text + vision | Text + vision; agentic tool calls supported | Text + vision; official tool integrations and API outputs |
| Default maximum context (local / standard) | Configurable; the family supports very long contexts | Configurable | 262,144 tokens (example standard local config) | 1,000,000 tokens (hosted Flash default) |
| Serving / API | OpenAI-style chat completions; vLLM, SGLang, or Transformers recommended | Same | Same (example CLI / vLLM commands in the model card) | Hosted API (Alibaba Cloud Model Studio / Qwen Chat) with added production observability and scaling |
| Typical use cases | Agents, reasoning, coding assistance, long-document tasks, multimodal assistants | Lightweight single-GPU inference; agentic tasks with a smaller footprint | Production agent deployments; long-context multimodal tasks | Production agent SaaS: long context, tool use, managed inference |
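To make the naming in the table concrete, here is a small Python sketch that parses a model name into its nominal total and active parameter counts. It assumes the usual Qwen naming convention, in which a suffix such as `-A3B` means roughly 3B parameters are activated per token, and it treats names without that suffix as dense. `parse_param_scale` and `active_fraction` are hypothetical helpers, and the numbers are the nominal sizes in the names, not exact parameter counts.

```python
import re

# Matches names such as "Qwen3.5-35B-A3B" (35B total, ~3B active) or "Qwen3.5-27B" (dense).
# Assumption: "-A<k>B" means roughly k billion parameters are activated per token.
_NAME_RE = re.compile(r"-(\d+)B(?:-A(\d+)B)?$")


def parse_param_scale(model_name: str) -> tuple[int, int]:
    """Return (total_billions, active_billions) as encoded in the model name."""
    match = _NAME_RE.search(model_name)
    if match is None:
        raise ValueError(f"no parameter scale found in {model_name!r}")
    total = int(match.group(1))
    # Dense models carry no "-A<k>B" suffix: every parameter is active.
    active = int(match.group(2)) if match.group(2) else total
    return total, active


def active_fraction(model_name: str) -> float:
    """Share of nominal parameters used per token, rounded to 3 decimals."""
    total, active = parse_param_scale(model_name)
    return round(active / total, 3)


if __name__ == "__main__":
    for name in ("Qwen3.5-122B-A10B", "Qwen3.5-27B", "Qwen3.5-35B-A3B"):
        total, active = parse_param_scale(name)
        print(f"{name}: ~{total}B total, ~{active}B active ({active_fraction(name):.1%})")
```

Under that convention the 35B-A3B uses about 8.6% of its nominal parameters per token, which is where its capability-per-compute tradeoff comes from.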

What is Qwen3.5-Flash

Qwen3.5-Flash is the hosted, production offering of the Qwen3.5 family. It maps to the 35B-A3B open-weight model and adds production capabilities: a longer default context (advertised at up to 1M tokens for the hosted product), official tool integrations, and managed inference endpoints that simplify agentic workflows and scaling. In short, Flash is the cloud-hosted, production-ready 35B-A3B variant, with extra engineering for long context, tool use, and throughput.
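A quick way to reason about the two context limits quoted in this article is a token-budget check: the prompt plus the tokens reserved for the reply must fit inside the window. The sketch below assumes the limits stated here (262,144 tokens for the example local 35B-A3B config, 1,000,000 tokens for hosted Flash); the dictionary keys and `fits_in_context` are hypothetical names, and real token counts have to come from the model's tokenizer.

```python
# Context windows quoted in this article; verify against current docs before relying on them.
CONTEXT_LIMITS = {
    "local-35b-a3b-example": 262_144,  # example local config from the model card
    "hosted-qwen3.5-flash": 1_000_000,  # advertised hosted default
}


def remaining_budget(prompt_tokens: int, max_output_tokens: int, limit: int) -> int:
    """Tokens left after the prompt and the reserved output (negative means overflow)."""
    return limit - (prompt_tokens + max_output_tokens)


def fits_in_context(prompt_tokens: int, max_output_tokens: int, deployment: str) -> bool:
    """True if prompt + reserved output fit within the deployment's context window."""
    limit = CONTEXT_LIMITS[deployment]
    return remaining_budget(prompt_tokens, max_output_tokens, limit) >= 0


if __name__ == "__main__":
    # A ~300k-token document set overflows the local example config but fits hosted Flash.
    for name in CONTEXT_LIMITS:
        print(name, fits_in_context(300_000, 8_192, name))
```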

Qwen3.5-Flash is part of the broader Qwen3.5 “Medium” model series, which includes:

- Qwen3.5-Flash

- Qwen3.5-35B-A3B

- Qwen3.5-122B-A10B

- Qwen3.5-27B

Within this lineup, Qwen3.5-Flash is the production API version: the fast, deployable form of the 35B-A3B model, optimized for developers and enterprises. 👉 Think of Flash as the “enterprise runtime layer” built on top of 35B-A3B.

Main features of Qwen3.5-Flash

- Unified vision-language foundation: trained with early-fusion multimodal tokens, so text and images are processed as one coherent stream, which improves reasoning and visual agentic tasks.

- Hybrid, efficient architecture: gated delta networks plus sparse Mixture-of-Experts (MoE) layers in some sizes. "A3B" marks a sparse variant with roughly 3B parameters activated per token, which yields high capability per unit of compute.

- Long-context support: the family supports very long local contexts (example configs go up to 262,144 tokens), and the hosted Flash product defaults to a 1,000,000-token context for production workflows. This targets agentic chains, document QA, and multi-document synthesis.

- Agentic tool use: native tool-call support and parsers, plus a “thinking” (reasoning) mode that lets the model plan and call external APIs or tools in a structured fashion. Speculative sampling can additionally be used at serving time to speed up generation.
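Because the serving stacks expose OpenAI-style chat completions, tool use typically means sending a `tools` array of JSON-Schema function definitions and reading `tool_calls` back from the assistant message. The sketch below assumes that OpenAI-compatible shape; the `get_weather` tool and the sample response are hypothetical, and nothing is sent over the network.

```python
import json

# A hypothetical tool, described with the OpenAI-style function schema.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def build_tool_request(model: str, question: str) -> dict:
    """Chat-completions request body that offers the model one tool."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "tools": [WEATHER_TOOL],
    }


def extract_tool_calls(assistant_message: dict) -> list[tuple[str, dict]]:
    """Return (function_name, parsed_arguments) for each tool call in the message."""
    calls = []
    for call in assistant_message.get("tool_calls") or []:
        fn = call["function"]
        # Arguments arrive as a JSON-encoded string, not a dict.
        calls.append((fn["name"], json.loads(fn["arguments"])))
    return calls


if __name__ == "__main__":
    body = build_tool_request("qwen3.5-flash", "What's the weather in Hangzhou?")
    print(json.dumps(body, indent=2))
    # Hypothetical assistant message shaped like an OpenAI-style tool-call response.
    sample = {
        "role": "assistant",
        "content": None,
        "tool_calls": [{
            "id": "call_0",
            "type": "function",
            "function": {"name": "get_weather", "arguments": "{\"city\": \"Hangzhou\"}"},
        }],
    }
    print(extract_tool_calls(sample))
```

In the OpenAI-style flow, you would then run the tool yourself, append a `tool` role message carrying the result and the matching `tool_call_id`, and call the API again so the model can compose its final answer.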

Benchmark performance of Qwen3.5-Flash

| Benchmark / Category | Qwen3.5-122B-A10B | Qwen3.5-27B | Qwen3.5-35B-A3B | Notes (Flash aligns with 35B-A3B) |
|---|---|---|---|---|
| MMLU-Pro (knowledge) | 86.7 | 86.1 | 85.3 | Flash ≈ the published 35B-A3B profile |
| C-Eval (Chinese exams) | 91.9 | 90.5 | 90.2 | |
| IFEval (instruction following) | 93.4 | 95.0 | 91.9 | |
| AA-LCR (long-context reasoning) | 66.9 | 66.1 | 58.5 | Local configs show long-context setups up to 262k tokens; Flash advertises a 1M default |

Summary: the larger 122B-A10B and the dense 27B post the highest scores in this table and narrow the gap to frontier models on knowledge and instruction-following benchmarks. The 35B-A3B (and therefore Flash) aims for production tradeoffs (throughput plus long context): it stays within about 2 points of the 122B-A10B on MMLU-Pro, C-Eval, and IFEval, but trails it by 8.4 points on AA-LCR.
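The "competitive" claim is easiest to check as simple differences. This Python sketch only restates the numbers from the benchmark table and subtracts them; `SCORES` and `gap_vs` are illustrative names, not part of any published tooling.

```python
# Scores restated from the benchmark table in this section.
SCORES = {
    "MMLU-Pro": {"122B-A10B": 86.7, "27B": 86.1, "35B-A3B": 85.3},
    "C-Eval": {"122B-A10B": 91.9, "27B": 90.5, "35B-A3B": 90.2},
    "IFEval": {"122B-A10B": 93.4, "27B": 95.0, "35B-A3B": 91.9},
    "AA-LCR": {"122B-A10B": 66.9, "27B": 66.1, "35B-A3B": 58.5},
}


def gap_vs(reference: str, model: str) -> dict[str, float]:
    """Points by which `model` trails `reference` on each benchmark (negative = ahead)."""
    return {
        bench: round(scores[reference] - scores[model], 1)
        for bench, scores in SCORES.items()
    }


if __name__ == "__main__":
    for bench, gap in gap_vs("122B-A10B", "35B-A3B").items():
        print(f"{bench}: 35B-A3B trails 122B-A10B by {gap} points")
```

Negative values mean the compared model scores higher, as the 27B does against the 122B-A10B on IFEval.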

🆚 How Qwen3.5-Flash Fits in the Qwen3.5 Family

Think of the series like this:

| Model | Role |
|---|---|
| Qwen3.5-Flash | ⚡ Fast production API |
| Qwen3.5-35B-A3B | 🧠 Core balanced model |
| Qwen3.5-122B-A10B | 🏆 Higher reasoning power |
| Qwen3.5-27B | 💻 Smaller, efficient local model |

👉 Flash = the same model tier as 35B-A3B, optimized for deployment.

When to Use Qwen3.5-Flash

Use it if you need:

- Real-time AI (chatbots, assistants)

- AI agents with tools (search, APIs, automation)

- Large document or code analysis

- High-scale production APIs

How to access the Qwen3.5-Flash API

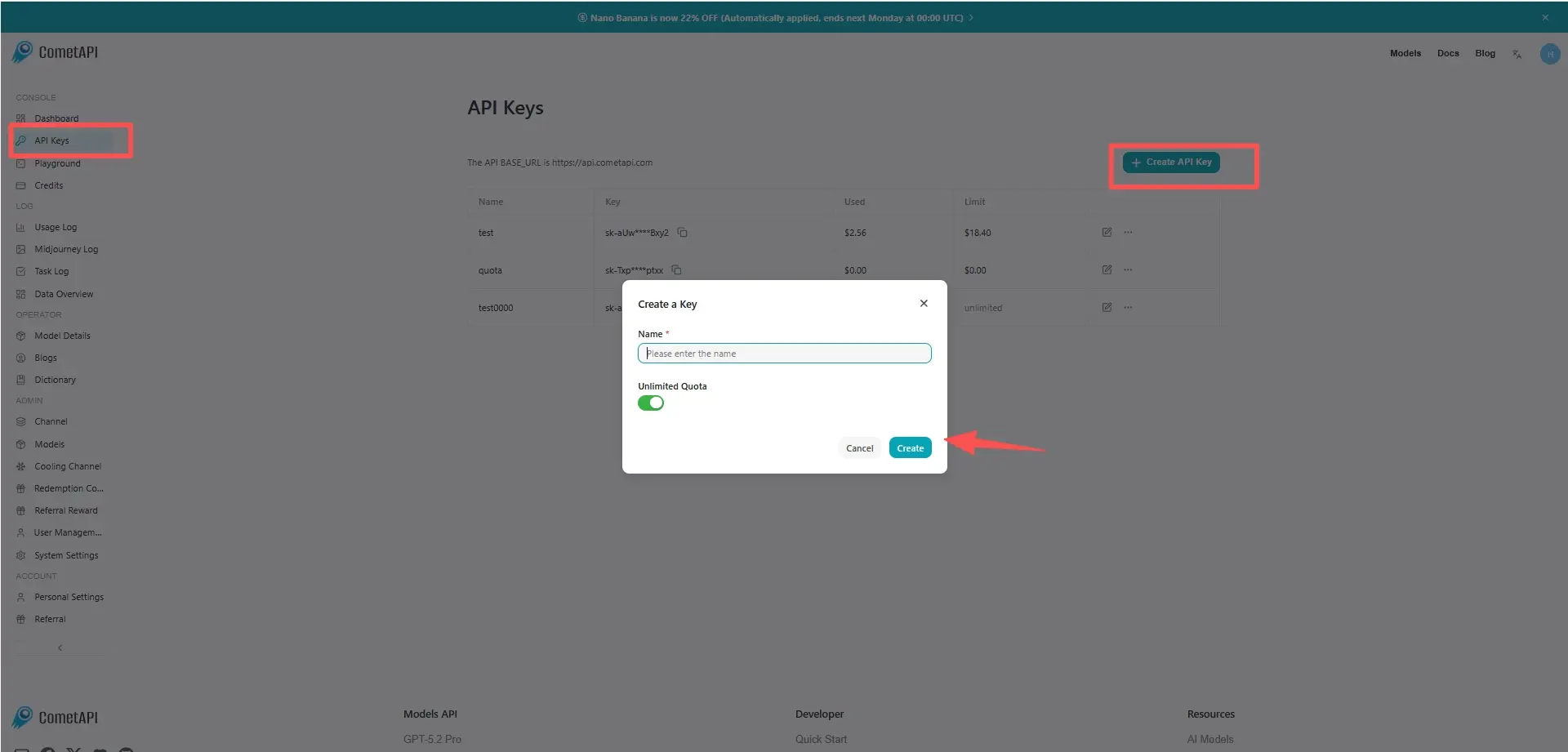

Step 1: Sign Up for an API Key

Log in to cometapi.com (register first if you don't have an account yet) and open your CometAPI console. In the personal center, go to the API token section, click “Add Token”, and submit; then copy the generated token key, which looks like sk-xxxxx.
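Rather than pasting the sk-xxxxx token into source code, a common practice is to keep it in an environment variable. The sketch below assumes a variable named `COMETAPI_KEY` (a hypothetical name chosen here, not one CometAPI requires) and only checks the `sk-` prefix mentioned above.

```python
import os


def load_api_key(env_var: str = "COMETAPI_KEY") -> str:
    """Read the API token from the environment and sanity-check its format."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"set {env_var} to the token from your CometAPI console")
    # Tokens from the console look like "sk-xxxxx"; this is only a shape check.
    if not key.startswith("sk-"):
        raise ValueError(f"{env_var} does not look like an sk- token")
    return key
```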

Step 2: Send Requests to the Qwen3.5-Flash API

Select the “qwen3.5-flash” endpoint and set the request body. The request method and body format are described in our website's API doc, and the website also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. Requests use the Chat Completions format; the base URL is listed in the API doc.

Put your question or request in the content field; this is what the model will respond to.
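The request described above can be sketched with Python's standard library as follows. This assumes an OpenAI-compatible Chat Completions endpoint with Bearer-token authentication; `BASE_URL` is an assumption to confirm against the API doc, `COMETAPI_KEY` is a hypothetical environment variable, and `build_chat_request` and `send` are illustrative helpers.

```python
import json
import os
import urllib.request

# Assumption: CometAPI's OpenAI-compatible base URL; confirm it in the API doc.
BASE_URL = "https://api.cometapi.com/v1"


def build_chat_request(api_key: str, question: str,
                       model: str = "qwen3.5-flash") -> urllib.request.Request:
    """Create a POST request in the Chat Completions format."""
    body = {
        "model": model,
        # Your question goes in the "content" field of the user message.
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    req = build_chat_request(os.environ["COMETAPI_KEY"],
                             "Summarize Qwen3.5-Flash in one sentence.")
    print(send(req))
```

Keeping request construction separate from sending makes it easy to inspect or log the exact body before any network call is made.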

Step 3: Retrieve and Verify Results

Parse the API response to get the generated answer. Once the request is processed, the API returns the task status along with the output data.
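Assuming the response follows the usual Chat Completions shape (a `choices` list whose first entry holds `message.content` and a `finish_reason`), the answer and its status can be pulled out as below. `extract_answer` is a hypothetical helper, and the field names should be checked against the API doc.

```python
def extract_answer(response: dict) -> tuple[str, str]:
    """Return (answer_text, finish_reason) from a Chat Completions response."""
    if "error" in response:
        # OpenAI-compatible APIs usually report failures in an "error" object.
        raise RuntimeError(f"API error: {response['error']}")
    choices = response.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    first = choices[0]
    answer = first.get("message", {}).get("content") or ""
    # "stop" means the model finished normally; "length" means it hit the token limit.
    finish_reason = first.get("finish_reason", "unknown")
    return answer, finish_reason


if __name__ == "__main__":
    sample = {
        "choices": [{
            "index": 0,
            "message": {"role": "assistant", "content": "Hello!"},
            "finish_reason": "stop",
        }]
    }
    text, status = extract_answer(sample)
    print(status, text)
```

Checking that the status is "stop" before using the text is a simple way to verify the answer was not cut off.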