Technical specifications of GPT-5.3 Codex

| Item | GPT-5.3 Codex (public specs) |
|---|---|
| Model family | GPT-5.3 (Codex variant, optimized for agentic coding) |
| Input types | Text, code, tool/terminal context, (limited) vision via Codex app interfaces |
| Output types | Text (natural language, code, patches, shell commands), structured logs, test results |
| Long‑context handling | Compaction triggers every 100,000 tokens during long sessions (reported in system card) |
| Release / publication date | February 5, 2026 (OpenAI announcement & system card) |

What is GPT-5.3 Codex

GPT‑5.3 Codex is OpenAI’s flagship agentic coding model tuned for long-horizon software engineering, tool-driven workflows, and high-fidelity security research/defensive workflows. It combines GPT‑5.2 Codex’s coding strengths with improved reasoning, longer-running task reliability, and additional safety controls tailored for cyber and dual‑use domains.

Main Features of GPT-5.3 Codex

🧪 Frontier Coding Capabilities

- State-of-the-art results on industry coding benchmarks such as SWE-Bench Pro and Terminal-Bench 2.0, with gains in efficiency and language diversity.

- Designed for complex development workflows like multi-day builds, tests, refactoring, deployment, and debugging.

🛠️ Professional Workflow Integration

- Executes tasks end-to-end that involve research, tool invocation, and complex execution, such as building web games, desktop apps, and analyses.

- Web development improvements: Better “default sensible outputs” for common coding prompts, and automated UX enhancements in generated code.

📊 Broad Domain Work

- Matches GPT-5.2 on the GDPval knowledge-work benchmark, which covers professional productivity tasks across 44 occupations.

- Shows strong desktop computer-use capability on OSWorld-Verified, a benchmark of visual desktop tasks, with scores approaching human baselines.

🔐 Cybersecurity Readiness

- First Codex model to be classified as High capability for cybersecurity tasks under OpenAI’s Preparedness Framework.

Benchmark Performance (Selected Metrics)

| Benchmark | GPT-5.3 Codex | GPT-5.2 Codex | GPT-5.2 |
|---|---|---|---|
| SWE-Bench Pro | 56.8% | 56.4% | 55.6% |
| Terminal-Bench 2.0 | 77.3% | 64.0% | 62.2% |
| OSWorld-Verified | 64.7% | 38.2% | 37.9% |
| GDPval (wins/ties) | 70.9% | – | 70.9% |
| Cybersecurity CTF | 77.6% | 67.4% | 67.7% |
| SWE-Lancer IC Diamond | 81.4% | 76.0% | 74.6% |

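The benchmarks show GPT-5.3 Codex outperforming previous models on coding, agentic, and cybersecurity tasks, with the largest gains on Terminal-Bench 2.0 and OSWorld-Verified. On GDPval it matches GPT-5.2 (70.9%) rather than exceeding it.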
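To make the size of each gain concrete, the short sketch below computes the percentage-point difference between GPT-5.3 Codex and each earlier model, using only the figures from the table above. The names `SCORES` and `delta_points` are illustrative and not part of any API; a missing table entry ("–") is represented as `None`.

```python
from typing import Optional

# Scores copied from the benchmark table: (GPT-5.3 Codex, GPT-5.2 Codex, GPT-5.2).
# None marks the entry shown as "–" in the table.
SCORES = {
    "SWE-Bench Pro": (56.8, 56.4, 55.6),
    "Terminal-Bench 2.0": (77.3, 64.0, 62.2),
    "OSWorld-Verified": (64.7, 38.2, 37.9),
    "GDPval (wins/ties)": (70.9, None, 70.9),
    "Cybersecurity CTF": (77.6, 67.4, 67.7),
    "SWE-Lancer IC Diamond": (81.4, 76.0, 74.6),
}


def delta_points(new: float, old: Optional[float]) -> Optional[float]:
    """Return the gain in percentage points, or None if the baseline is missing."""
    if old is None:
        return None
    return round(new - old, 1)


if __name__ == "__main__":
    for name, (codex_53, codex_52, gpt_52) in SCORES.items():
        vs_codex = delta_points(codex_53, codex_52)
        vs_gpt = delta_points(codex_53, gpt_52)
        print(f"{name}: vs GPT-5.2 Codex = {vs_codex}, vs GPT-5.2 = {vs_gpt}")
```

These are differences in percentage points, not relative improvements; a relative figure would divide each difference by the baseline score.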

GPT-5.3 Codex vs GPT-5.2-Codex vs Competitors

| Feature | GPT-5.3-Codex | GPT-5.2-Codex | Claude Opus 4.6 |
|---|---|---|---|
| Coding Performance | ⚡ Industry-leading | High | Moderate-High |
| Contextual Reasoning | Strong | Moderate | Strong |
| Long Tasks | Excellent | Good | Very strong |
| Agentic Computer Use | Excellent | Moderate | Not central |
| Cybersecurity Tasks | High | Moderate | Not prominently reported |
| Real-time steering | Yes | Limited | Not specified |

Note on Claude Opus 4.6: it launched on the same day as GPT-5.3 Codex and targets general workflows and coding enhancement with expanded context support, but it is not explicitly optimized for agentic computer use in the way GPT-5.3 Codex is.

Representative enterprise use cases

- Repository-scale refactorings and automated PR generation with test and validation loops.

- Assisted vulnerability triage, reverse engineering, and defensive research within a Trusted Access program.

- CI/CD orchestration and automated regression testing with human-in-the-loop verification.

- Design → prototype workflows translating requirements into multi-file scaffolds and test harnesses.

How to access GPT-5.3 Codex API

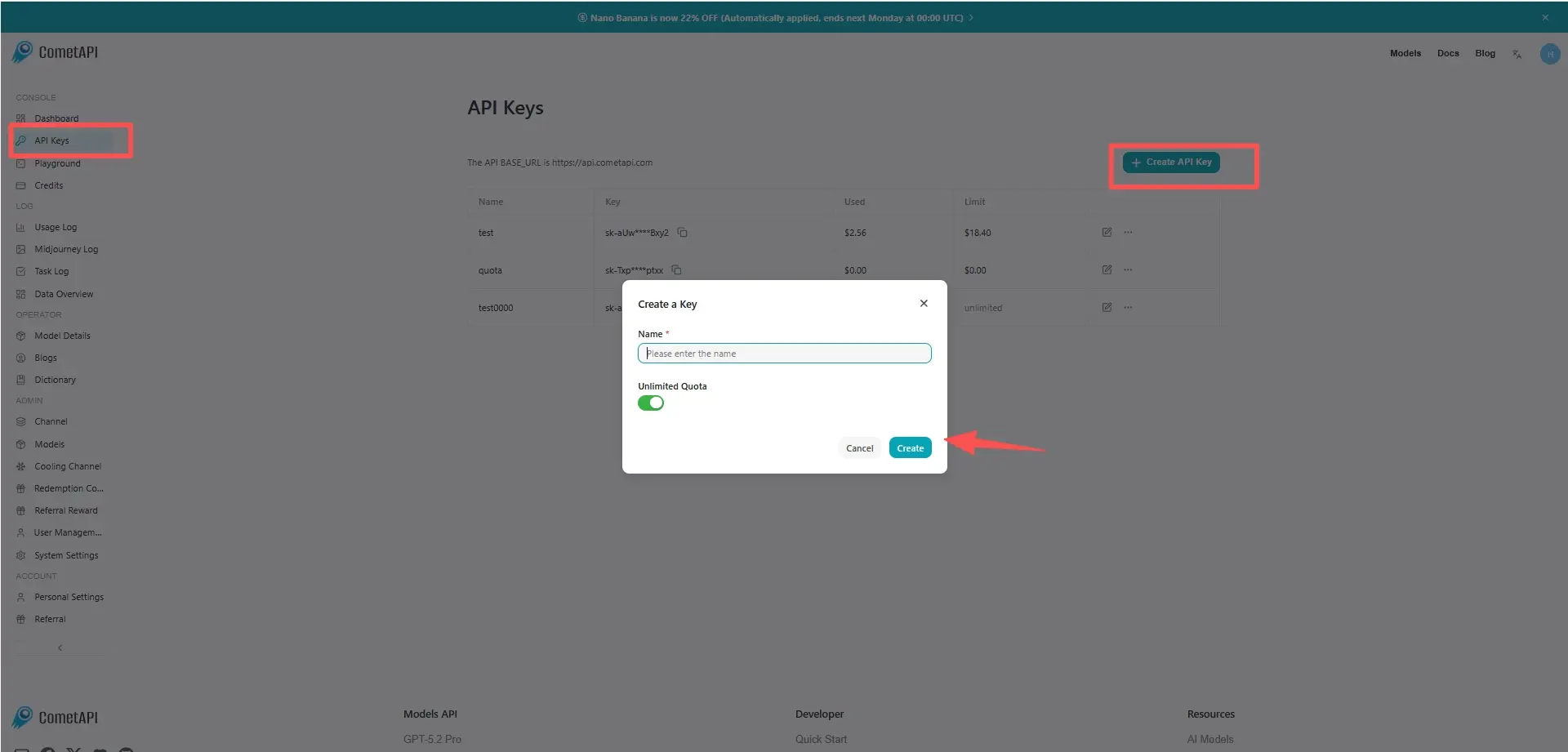

Step 1: Sign Up for API Key

Log in to cometapi.com; if you do not have an account yet, register first. In your CometAPI console, open the personal center, click “Add Token” under API token, and submit. The generated token key (format: sk-xxxxx) is the access credential for the API.

Step 2: Send Requests to GPT-5.3 Codex API

Select the “gpt-5.3-codex” endpoint to send the API request and set the request body. The request method and request body are described in our website API doc, which also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. The base URL is the Responses endpoint.

Insert your question or request into the content field; this is what the model will respond to.
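The request described in this step can be sketched as follows, using only the Python standard library. This is a minimal sketch under stated assumptions: the URL `https://api.cometapi.com/v1/responses`, the Responses-style `input` message list, and the `COMETAPI_KEY` environment variable name are not given in this article and should be checked against the CometAPI API doc. Only the model name `gpt-5.3-codex`, the `sk-xxxxx` key format, and the `content` field come from the text above.

```python
import json
import os
import urllib.request

# Assumption: CometAPI exposes an OpenAI-compatible Responses route at this URL.
# Confirm the exact base URL and path in the CometAPI API doc.
RESPONSES_URL = "https://api.cometapi.com/v1/responses"


def build_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the gpt-5.3-codex model."""
    body = {
        "model": "gpt-5.3-codex",
        # Assumed Responses-style input: the question goes in the content field.
        "input": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        RESPONSES_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    # COMETAPI_KEY is a hypothetical variable name chosen for this sketch.
    key = os.environ.get("COMETAPI_KEY", "<YOUR_API_KEY>")
    req = build_request(key, "Write a function that reverses a string.")
    # Inspect the request before sending; call send(req) once the key is real.
    print(req.full_url)
    print(req.data.decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect the body before spending tokens, and reading the key from the environment avoids committing it to source control.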

Step 3: Retrieve and Verify Results

Process the API response to get the generated answer. The response contains the task status and the output data.
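As an illustration of this step, the sketch below pulls the task status and generated text out of a decoded JSON response. It assumes the response follows the OpenAI Responses layout (a top-level `status` plus an `output` list of `message` items containing `output_text` chunks); this article does not specify the response schema, so treat the field names as assumptions to verify against the CometAPI API doc. The helper name `extract_text` is hypothetical.

```python
def extract_text(response: dict) -> tuple[str, str]:
    """Return (status, text) from an assumed Responses-style JSON payload."""
    status = response.get("status", "unknown")
    parts = []
    for item in response.get("output", []):
        # Skip non-message items such as reasoning or tool-call records.
        if item.get("type") != "message":
            continue
        for chunk in item.get("content", []):
            if chunk.get("type") == "output_text":
                parts.append(chunk.get("text", ""))
    return status, "".join(parts)


if __name__ == "__main__":
    # Hand-written sample in the assumed layout, not a real API response.
    sample = {
        "status": "completed",
        "output": [
            {"type": "reasoning", "summary": []},
            {
                "type": "message",
                "content": [{"type": "output_text", "text": "def rev(s): return s[::-1]"}],
            },
        ],
    }
    status, text = extract_text(sample)
    print(status)
    print(text)
```

Checking the status before using the text helps distinguish a completed task from one that failed or is still in progress.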