Technical Specifications of GPT-5.4 Nano

| Item | GPT-5.4 Nano (estimated from official sources and cross-validation) |
|---|---|
| Model family | GPT-5.4 series (ultra-lightweight “nano” variant) |
| Provider | OpenAI |
| Input types | Text |
| Output types | Text |
| Context window | 128,000 – 200,000 tokens (range based on nano tier patterns) |
| Max output tokens | 32,000 – 64,000 tokens (estimated) |
| Knowledge cutoff | ~May 31, 2024 (inherited mini/nano lineage) |
| Reasoning support | Limited (optimized for efficiency over depth) |
| Tool support | Basic function calling (limited agent capabilities) |
| Positioning | Ultra-low-cost, high-throughput inference model |

What is GPT-5.4 Nano?

GPT-5.4 Nano is the smallest and most cost-efficient model in the GPT-5.4 family, designed for massive-scale, low-compute workloads. It prioritizes speed, throughput, and cost efficiency over deep reasoning, making it ideal for simple, repeatable tasks.

Unlike GPT-5.4 or GPT-5.4 Mini, Nano is optimized for high-frequency API usage, where millions of requests must be processed quickly and cheaply.

Key Features of GPT-5.4 Nano

- Ultra-low latency inference: Designed for real-time pipelines and high-QPS systems

- Extreme cost efficiency: Ideal for large-scale deployments (classification, tagging, routing)

- Lightweight reasoning: Handles simple instructions reliably but not deep chains

- High throughput optimization: Built for batch processing and parallel workloads

- Stable structured output: Works well for JSON formatting, extraction, and labeling tasks

- Pipeline-friendly design: Commonly used as a “worker model” in multi-model architectures
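
The "worker model" pattern from the list above can be sketched as a small parallel batch runner. This is a minimal illustration, not CometAPI's or OpenAI's implementation: `run_batch` and `fake_nano_label` are hypothetical names, and `fake_nano_label` is a local stand-in for a real model call so the sketch runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def run_batch(items: List[str], worker: Callable[[str], str],
              max_workers: int = 8) -> List[str]:
    """Apply `worker` to every item in parallel, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as `items`.
        return list(pool.map(worker, items))


def fake_nano_label(text: str) -> str:
    """Hypothetical stand-in for a model call: a trivial keyword rule."""
    return "complaint" if "refund" in text.lower() else "other"


if __name__ == "__main__":
    tickets = ["I want a refund", "Hello there"]
    print(run_batch(tickets, fake_nano_label))
```

In a real pipeline, `worker` would wrap the API call, and `max_workers` would be tuned to the account's rate limits.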

Benchmark Performance of GPT-5.4 Nano

- Not positioned for frontier benchmarks (e.g., SWE-Bench, GPQA)

- Optimized for:

  - Classification accuracy consistency

  - Structured output reliability

  - Latency benchmarks (substantially faster than Mini/Pro tiers)

- Typically achieves high precision on narrow tasks but significantly lower performance on reasoning-heavy benchmarks

👉 If you're deciding between GPT-5.4 Nano and GPT-5.4 Mini, the key difference is that Nano excels in efficiency benchmarks, not on reasoning leaderboards.

GPT-5.4 Nano vs Other Models

| Model | Strength | Context Window | Best Use Case |
|---|---|---|---|
| GPT-5.4 | Maximum intelligence | ~1M tokens | Complex reasoning, research |
| GPT-5.4 Mini | Balanced performance + speed | ~400K tokens | Coding, agents |
| GPT-5.4 Nano | Fastest + cheapest | ~400K tokens | Classification, extraction |
| GPT-5 Nano | Older nano baseline | ~400K tokens | Basic NLP tasks |

👉 Key takeaway:

- Use Nano for scale

- Use Mini for balanced intelligence

- Use Full/Pro for complex reasoning
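
The takeaway above can be expressed as a simple lookup that picks a model tier per task type. This is only a sketch: the task names are illustrative, and the model IDs other than `gpt-5.4-nano` (which appears on this page) are assumptions that should be checked against the provider's model list.

```python
# Mapping from task type to model tier, following the comparison table.
# Assumption: "gpt-5.4-mini" and "gpt-5.4" are illustrative IDs, not confirmed here.
MODEL_BY_TASK = {
    "classification": "gpt-5.4-nano",
    "extraction": "gpt-5.4-nano",
    "coding": "gpt-5.4-mini",
    "agents": "gpt-5.4-mini",
    "complex_reasoning": "gpt-5.4",
    "research": "gpt-5.4",
}


def pick_model(task_type: str, default: str = "gpt-5.4-mini") -> str:
    """Return the model tier for a task type, falling back to the balanced tier."""
    return MODEL_BY_TASK.get(task_type, default)


if __name__ == "__main__":
    for task in ("classification", "coding", "research", "unknown"):
        print(task, "->", pick_model(task))
```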

Limitations of GPT-5.4 Nano

- Poor performance on multi-step reasoning or complex logic tasks

- Limited effectiveness in code generation or advanced analysis

- Reduced multimodal capability (primarily text-focused)

- Not suitable for decision-critical or high-accuracy reasoning tasks

Representative Use Cases

- Text classification & tagging — sentiment, categories, moderation

- Data extraction pipelines — structured JSON output at scale

- Routing & orchestration — decide which model/tool to call next

- Search indexing & preprocessing — chunk labeling, metadata generation

- High-volume automation tasks — millions of lightweight API calls
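
For the classification and extraction use cases above, a common approach is to ask for JSON-only replies and validate them before use. The sketch below assumes a sentiment task with three labels; the prompt wording, the label set, and the function names are all hypothetical choices made for illustration.

```python
import json
from typing import Dict, List, Optional

# Assumed label set for this example only.
ALLOWED_LABELS = {"positive", "negative", "neutral"}


def build_sentiment_messages(text: str) -> List[Dict[str, str]]:
    """Build chat messages that request a JSON-only sentiment label."""
    system = (
        "Classify the sentiment of the user's text. "
        'Reply with JSON only, for example {"label": "positive"}. '
        "Allowed labels: " + ", ".join(sorted(ALLOWED_LABELS)) + "."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": text},
    ]


def parse_label(raw_reply: str) -> Optional[str]:
    """Return the label if the reply is valid JSON with an allowed label, else None."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    label = data.get("label")
    # Reject non-string or out-of-set labels instead of trusting the model.
    if isinstance(label, str) and label in ALLOWED_LABELS:
        return label
    return None
```

Returning `None` for malformed replies lets the caller retry or escalate to a larger model rather than storing a bad label.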

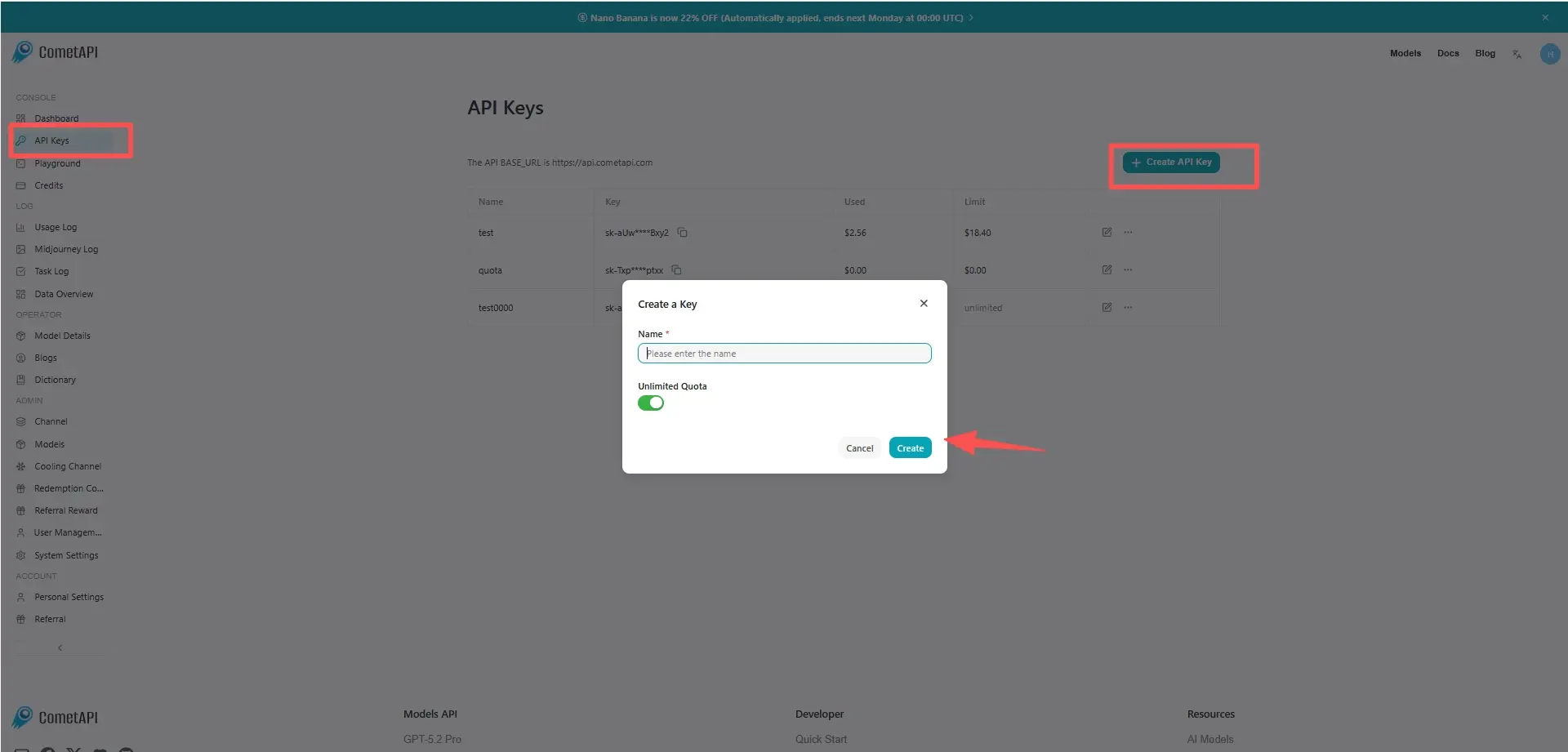

How to access GPT-5.4 Nano API

Step 1: Sign Up for API Key

Log in to cometapi.com; if you don't have an account yet, register first. Then open your CometAPI console, go to the API token section of the personal center, click “Add Token”, and submit. The generated token key (in the form sk-xxxxx) is your access credential for the API.

Step 2: Send Requests to GPT-5.4 Nano API

Select the “gpt-5.4-nano” endpoint and set the request body. The request method and request body format are described in the API doc on our website, which also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. The model can be called in both the Chat Completions and Responses formats.

Insert your question or request into the content field; this is what the model will respond to.
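
A request along these lines might look like the following sketch, which uses only the Python standard library. The URL is an assumption (an OpenAI-compatible Chat Completions route on CometAPI) and should be confirmed against the API doc; `build_request` and `send` are hypothetical helper names.

```python
import json
import urllib.request

# Assumption: OpenAI-compatible Chat Completions route; verify in the API doc.
API_URL = "https://api.cometapi.com/v1/chat/completions"


def build_request(api_key: str, question: str) -> urllib.request.Request:
    """Build a POST request whose `content` field carries the user's question."""
    body = {
        "model": "gpt-5.4-nano",
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Calling `send(build_request("<YOUR_API_KEY>", "Classify: 'great product'"))` would perform the actual network call once a valid key is supplied.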

Step 3: Retrieve and Verify Results

The API responds with the task status and output data. Process the response to extract the generated answer.
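
Extracting the answer could be done as in the sketch below. It assumes the response follows the Chat Completions shape (`choices[0].message.content` with a `finish_reason`), which should be checked against the API doc; `extract_answer` is a hypothetical helper name.

```python
from typing import Optional, Tuple


def extract_answer(response: dict) -> Tuple[Optional[str], Optional[str]]:
    """Return (finish_reason, content) from a Chat Completions-style response.

    Both values are None when the response has no choices.
    """
    choices = response.get("choices") or []
    if not choices:
        return None, None
    first = choices[0]
    message = first.get("message") or {}
    return first.get("finish_reason"), message.get("content")


if __name__ == "__main__":
    sample = {
        "choices": [
            {"finish_reason": "stop",
             "message": {"role": "assistant", "content": "positive"}}
        ]
    }
    print(extract_answer(sample))
```

A `finish_reason` other than `"stop"` (for example, `"length"`) usually means the output was cut off and should not be treated as a complete answer.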
