What is DeepSeek-Reasoner?

DeepSeek-Reasoner is the reasoning (or “thinking”) mode and API model name for DeepSeek’s reasoning-first models (currently aligned to the DeepSeek-V3.2 family). It is designed to produce an explicit chain of thought (CoT) before emitting a final answer: the model generates step-by-step reasoning that the API exposes so callers can inspect or distill it. DeepSeek positions the reasoner variant as the “thinking” counterpart to its non-thinking chat model and markets it for multi-step reasoning, math, coding and agent workflows.

Main features (user-facing)

- Explicit Chain-of-Thought (CoT) output. The API returns a separate reasoning_content field containing the model’s internal stepwise reasoning alongside the final content. This is designed for inspectability and downstream agent logic.

- “Thinking” vs “Chat” modes. deepseek-reasoner (thinking mode) is distinct from deepseek-chat (non-thinking mode); both were upgraded to the V3.2 generation.

- Large context windows. DeepSeek exposes very large context lengths. The Reasoner variants are marketed for long-form reasoning and agent memory.

- JSON output / structured responses. Support for structured JSON outputs useful for programmatic consumption.

- Agent/agent-builder focus. V3.2 and the Speciale variant are explicitly described as “reasoning-first models built for agents.”
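To make the reasoning_content / content split concrete, here is a minimal sketch of separating the two fields on the client side. It assumes the response follows the usual chat-completion layout (choices, then message); the `ReasonerReply` type, the `split_reply` helper, and the sample payload are hypothetical names invented for illustration, not part of any SDK.

```python
from typing import NamedTuple, Optional


class ReasonerReply(NamedTuple):
    reasoning: Optional[str]  # chain-of-thought text, None if the field is absent
    answer: str               # final answer intended for the end user


def split_reply(response: dict) -> ReasonerReply:
    """Separate the CoT from the final answer in a chat-completion-style response."""
    # Assumption: the first choice carries the message we care about.
    message = response["choices"][0]["message"]
    return ReasonerReply(
        reasoning=message.get("reasoning_content"),
        answer=message.get("content") or "",
    )


# Hypothetical payload, trimmed to the fields used here.
sample = {
    "choices": [{
        "message": {
            "role": "assistant",
            "reasoning_content": "17 is not divisible by 2 or 3, so it has no small factors.",
            "content": "17 is prime.",
        }
    }]
}

reply = split_reply(sample)
print(reply.answer)  # 17 is prime.
```

Keeping the reasoning in its own attribute makes it easy to log or inspect the CoT without ever showing it to end users.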

Technical capabilities

- Inputs: plain text prompts, structured JSON for tool/agent calls, files or long documents (via long context); tokens are standard NLP tokens.

- Outputs: the API returns both reasoning_content (CoT text) and content (final answer). Clients can use either field on its own by reading only the one they need; max_tokens caps the total generated output, CoT included. (Practical note: CoT tokens may still be billable as model output.)

- DeepSeek has iterated via a reasoning-specialized roadmap: base large models (R1 family) followed by focused post-training / reinforcement learning (RLHF-style) and policy-style fine-tuning to improve reasoning depth. The team also uses distillation to compress reasoning capability into smaller, deployable models.

- The V3.2 series adds agentic post-training for tool-use, hybrid inference (Think / Non-Think), and optimizations for faster “thinking” iterations.

- Inference efficiency is aided by a sparse attention method (reports call it DeepSeek Sparse Attention, or DSA) that focuses compute on relevant segments rather than running full dense attention across very long sequences; this reduces cost for very long contexts.
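The general idea behind sparse attention can be sketched with a toy top-k variant: score the keys, keep only the k most relevant positions, and run the softmax over those alone. This is an illustration of the concept under simplifying assumptions, not DeepSeek's DSA implementation; the function name and the top-k selection rule are chosen for the example. Note that this toy still scores every key, so it shows the selection and restricted softmax rather than the actual compute savings.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys that score highest against the query.

    Toy illustration only: not DeepSeek's DSA.
    """
    scale = math.sqrt(len(query))
    scores = [dot(query, key) / scale for key in keys]
    # Indices of the k largest scores; every other position is ignored.
    kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the kept scores only (shifted by the max for stability).
    top = max(scores[i] for i in kept)
    weights = [math.exp(scores[i] - top) for i in kept]
    total = sum(weights)
    output = [0.0] * len(values[0])
    for w, i in zip(weights, kept):
        for d, v in enumerate(values[i]):
            output[d] += (w / total) * v
    return output, sorted(kept)


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.0], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [5.0, 5.0]]
output, kept = topk_sparse_attention(query, keys, values, k=2)
print(kept)  # [0, 2]
```

With k equal to the number of keys, the function reduces to ordinary dense softmax attention for a single query.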

How to access deepseek-reasoner API

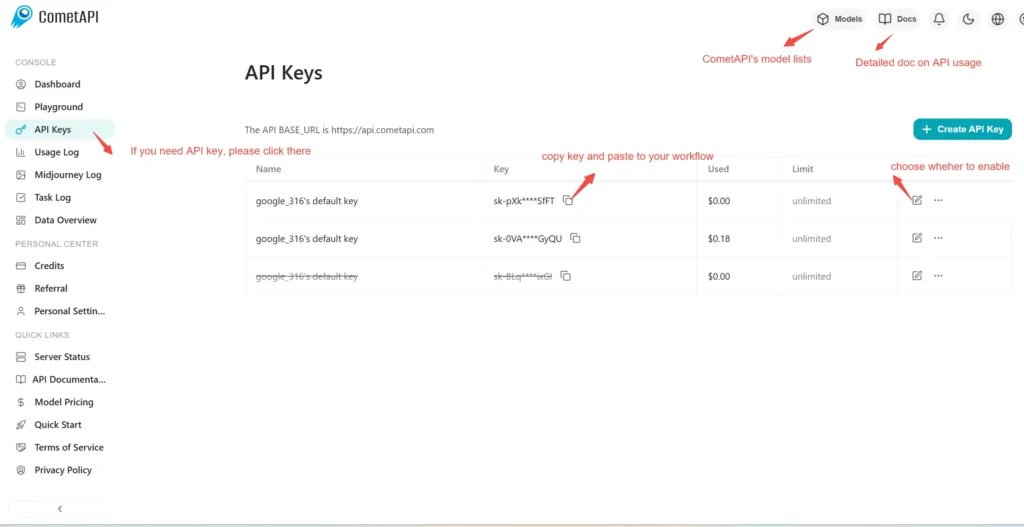

Step 1: Sign Up for API Key

Log in to cometapi.com. If you are not our user yet, please register first. Then sign in to your CometAPI console and open the API token section of the personal center. Click “Add Token” and submit to generate your access credential, an API key of the form sk-xxxxx.

Step 2: Send Requests to deepseek-reasoner API

Select the “deepseek-reasoner” endpoint to send the API request and set the request body. The request method and request body are documented in our website API doc, and our website also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. The base URL uses the Chat format.

Insert your question or request into the content field; this is what the model will respond to.
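As a rough sketch of Step 2, the request could be assembled with Python's standard library as follows. The endpoint URL is an assumption based on the common OpenAI-compatible chat layout and should be confirmed against the API doc; `build_request` is a hypothetical helper name. The network call itself is left commented out so the snippet runs offline.

```python
import json
import urllib.request

# Assumption: chat-format endpoint; confirm the exact URL in the API doc.
API_URL = "https://api.cometapi.com/v1/chat/completions"


def build_request(api_key: str, question: str) -> urllib.request.Request:
    """Build a POST request that asks deepseek-reasoner a single question."""
    payload = {
        "model": "deepseek-reasoner",
        # The question goes into the content field of a user message.
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("<YOUR_API_KEY>", "Is 17 a prime number?")

# Sending is left commented out so the sketch runs without a network call:
# with urllib.request.urlopen(request) as resp:
#     response = json.loads(resp.read())
```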

Step 3: Retrieve and Verify Results

Process the API response to get the generated answer. After processing, the API responds with the task status and output data.
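One way to verify a result before using it is sketched below, assuming the parsed response is a dict in the chat-completion layout. The top-level "error" key is an assumption about how failures are reported, and `extract_answer` is a hypothetical helper; check the API doc for the actual error shape.

```python
def extract_answer(response: dict) -> str:
    """Return the final answer, raising if the response failed or was truncated."""
    # Assumption: failures are reported under a top-level "error" key.
    if "error" in response:
        raise RuntimeError(f"API error: {response['error']}")
    choice = response["choices"][0]
    # finish_reason "length" means the output hit the token limit mid-answer.
    if choice.get("finish_reason") == "length":
        raise RuntimeError("Output was cut off; consider raising max_tokens.")
    return choice["message"]["content"]


# Hypothetical response, trimmed to the fields checked above.
ok_response = {
    "choices": [{
        "finish_reason": "stop",
        "message": {"role": "assistant", "content": "17 is prime."},
    }]
}
print(extract_answer(ok_response))  # 17 is prime.
```

Because the chain of thought counts toward the output limit, a truncated reply can leave content empty, which is why the truncation check comes before reading the answer.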