Quick Answer: Which AI model should developers prioritize in 2026?

For tasks requiring maximum autonomous reasoning and minimal hallucination, developers should choose GPT-5.5 (xhigh), which leads the market with an Intelligence Index of 60. Applications demanding real-time interactivity should utilize Mercury 2, the current speed leader with approximately 859 tokens per second. For large-scale production where budget is a primary constraint, DeepSeek V4 Pro and Kimi K2.6 offer near-frontier intelligence at roughly 10% of the cost of flagship proprietary models.

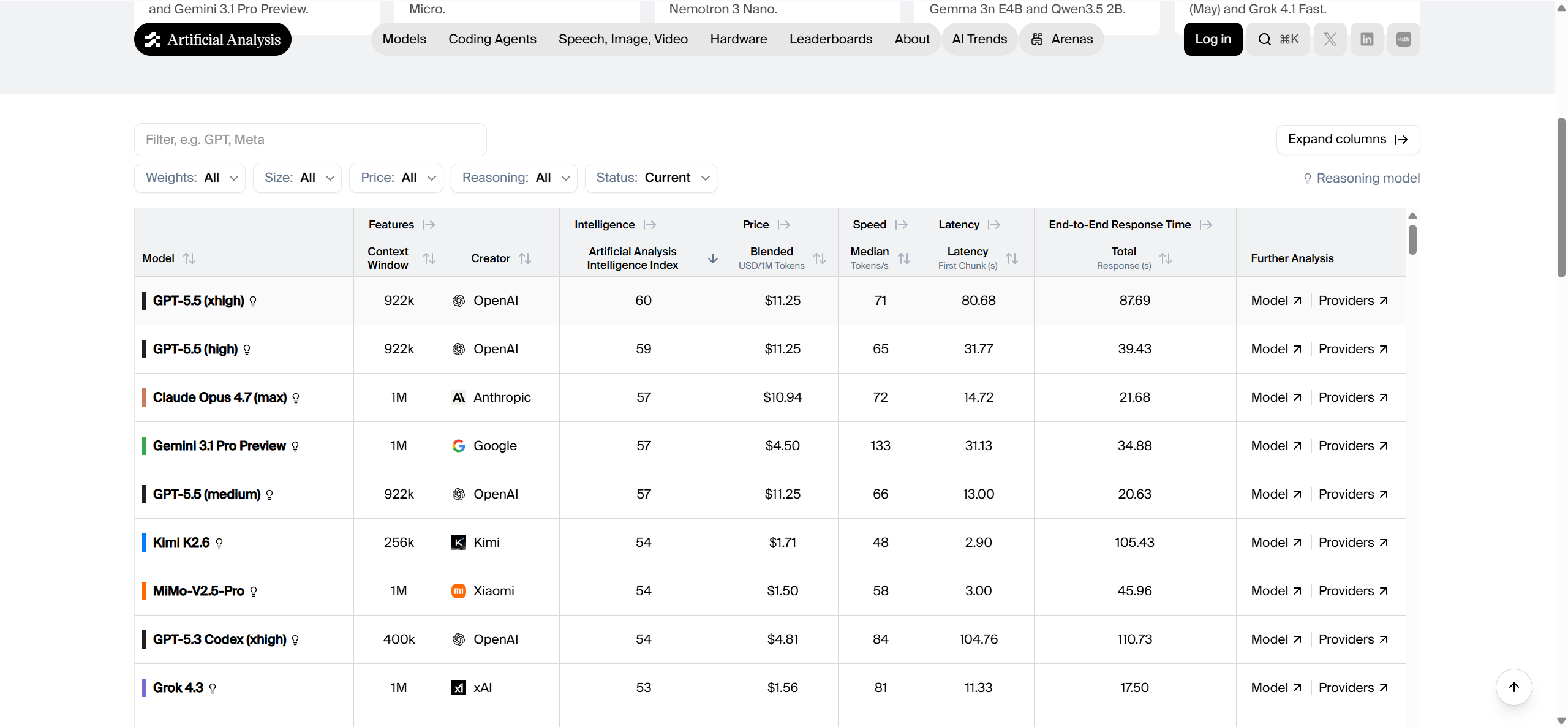

The Intelligence Index: Ranking the Frontier Models

The 2026 AI landscape has shifted from chasing parameter counts to optimizing "thinking" density. The Artificial Analysis Intelligence Index v4.0 serves as the industry standard for quantifying model capability across ten specialized dimensions, including professional-grade coding and extreme logical deduction.

| Model | Intelligence Index | Context Window | Best Use Case |
|---|---|---|---|
| GPT-5.5 (xhigh) | 60 | 922K | Scientific research and logic |
| GPT-5.5 (high) | 59 | 922K | Professional-grade coding |
| Claude Opus 4.7 (max) | 57 | 1M | Autonomous agents and planning |
| Gemini 3.1 Pro | 57 | 1M - 2M | Multimodal data synthesis |
| Kimi K2.6 | 54 | 256K | Terminal-based agentic work |
| MiMo-V2.5-Pro | 54 | 1M | Full-stack software engineering |
| DeepSeek V4 Pro (Max) | 52 | 1M | Scalable reasoning workflows |
| GLM-5.1 | 51 | 200K | Long-horizon autonomous tasks |

How to read this table

The top of the table is crowded: GPT-5.5 (in its xhigh and high configurations), Claude Opus 4.7, and Gemini 3.1 Pro sit within three points of one another. These Western flagships are neck and neck, while two Chinese models, Kimi K2.6 and MiMo-V2.5-Pro, trail by only a few points and offer performance comparable to the top Western models at extremely competitive prices.

The Artificial Analysis Intelligence Index is a normalized metric derived from independent evaluations such as Terminal-Bench Hard and IFBench. A single point of difference represents a statistically significant gap in a model's "autonomy threshold." For example, the 3-point gap between GPT-5.5 (xhigh) at 60 and Claude Opus 4.7 (max) at 57 often translates to the difference between a model that requires human intervention every few steps and one that can complete a complex logic chain independently. A higher index score is generally associated with higher success rates on "Humanity's Last Exam" and fewer tool-calling errors in agentic environments.

The Reflexes: Latency and Generation Speed

For interactive software—from live IDE assistants to customer-facing voice agents—raw intelligence is secondary to Time to First Token (TTFT) and Generation Throughput.

Top 5 fastest models (throughput)

Throughput measures the speed at which a model generates text after the initial processing phase. High throughput is essential for long-form content generation and rapid code refactoring.

- Mercury 2: Approximately 859 tokens/s

- Granite 4.0 H Small: Approximately 407 tokens/s

- Granite 3.3 8B: Approximately 365 tokens/s

- Gemini 3.1 Flash-Lite: Approximately 331 tokens/s

- Qwen3.5 0.8B: Approximately 287 tokens/s

Top 5 lowest latency models (TTFT)

Latency indicates the delay before the first token reaches the user. This is the critical metric for "vibe" and perceived responsiveness in UI/UX.

- NVIDIA Nemotron 3 Nano: Approximately 0.40s

- Ministral 3 3B: Approximately 0.47s

- Qwen3.5 0.8B: Approximately 0.52s

- LFM2 24B A2B: Approximately 0.55s

- Grok 3 mini Reasoning: Approximately 0.58s
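The two metrics above combine into a rough estimate of how long a user waits for a complete reply: the time to first token plus the output length divided by throughput. The sketch below is a simplification that assumes throughput stays constant for the whole response; the function name and the 500-token reply length are illustrative choices, not part of any benchmark. It uses Qwen3.5 0.8B because it is the only model that appears in both lists.

```python
def estimated_response_seconds(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Approximate wall-clock time to stream a full reply.

    Assumes constant throughput after the first token, which real
    deployments only approximate.
    """
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    return ttft_s + output_tokens / tokens_per_s


# Figures taken from the two lists above (TTFT 0.52s, 287 tokens/s).
# The 500-token reply length is an assumed example value.
qwen_seconds = estimated_response_seconds(ttft_s=0.52, tokens_per_s=287, output_tokens=500)
print(f"Qwen3.5 0.8B, 500 tokens: {qwen_seconds:.2f}s")  # prints 2.26s
```

For short replies the TTFT term dominates, while for long generations throughput matters more, which is why the two leaderboards are worth reading separately.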

How to Choose Your Model in 2026

Selecting a model requires balancing the "Intelligence-per-Dollar" ratio with the specific uptime requirements of your application. The market in 2026 has diverged into three distinct architectural paths.

Independent developers and budget-sensitive teams

For solo developers or small teams running thousands of experimental agent loops, DeepSeek V4 Pro is the optimal strategic choice. It utilizes a massive 1.6T parameter Mixture-of-Experts (MoE) architecture where only 49B parameters are activated per token, allowing it to deliver flagship performance at approximately $0.416 per million tokens. Another excellent option for coding-specific tasks is Kimi K2.6, which specializes in terminal-first workflows. These models provide nearly 90% of the reasoning power of premium models while being approximately 70-80% cheaper, effectively extending a startup's runway.
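The capability-versus-cost trade-off can be checked against this article's own tables. The sketch below divides each model's Intelligence Index by the GPT-5.5 (xhigh) score and compares blended prices the same way. It assumes the "DeepSeek V4 Pro (Max)" index row and the "DeepSeek V4 Pro" price row describe the same model, and it treats the index ratio as a loose proxy for relative capability rather than a precise measure.

```python
# Index scores and blended prices (USD per 1M tokens) copied from the
# tables in this article; nothing here is fetched from a live API.
MODELS = {
    "GPT-5.5 (xhigh)": {"index": 60, "price": 11.25},
    "Kimi K2.6": {"index": 54, "price": 1.71},
    "DeepSeek V4 Pro": {"index": 52, "price": 0.53},
}


def compare_to_baseline(name: str, baseline: str = "GPT-5.5 (xhigh)") -> tuple[float, float]:
    """Return (share of baseline index, fractional price saving)."""
    model, base = MODELS[name], MODELS[baseline]
    relative_index = model["index"] / base["index"]
    savings = 1 - model["price"] / base["price"]
    return relative_index, savings


for name in ("Kimi K2.6", "DeepSeek V4 Pro"):
    rel, savings = compare_to_baseline(name)
    print(f"{name}: {rel:.0%} of baseline index, {savings:.0%} cheaper")
```

With the table figures this works out to roughly 90% of the baseline index at about 85% lower blended price for Kimi K2.6, and roughly 87% at about 95% lower for DeepSeek V4 Pro.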

Enterprise production environments

For firm-wide deployments where stability and adherence to complex system prompts are non-negotiable, the industry standard remains GPT-5.5 Pro and Claude Opus 4.7. GPT-5.5 Pro is engineered for high-stakes precision, excelling in areas like investment banking modeling and scientific exploration where the cost of an error outweighs the cost of the API call. Claude Opus 4.7 is preferred by teams requiring sustained reliability in multi-day projects, as it shows a significantly lower hallucination rate in terminal environments compared to the broader GPT family. Enterprises typically use CometAPI to integrate these models through a single gateway, ensuring 99.9% uptime and immediate failover if a primary provider experiences regional latency spikes.
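The failover behaviour described above can be illustrated with a minimal sketch. It is not CometAPI's implementation or client API: the provider functions are hypothetical stand-ins for real SDK calls, and the logic simply tries each provider in order until one succeeds.

```python
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def call_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, reply) from the first success."""
    errors = []
    for name, send in providers:
        try:
            return name, send(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stubs standing in for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("regional latency spike")


def healthy_backup(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_failover(
    "ping", [("primary", flaky_primary), ("backup", healthy_backup)]
)
print(used, reply)  # backup echo: ping
```

A production version would likely add timeouts, retries with backoff, and health checks, all of which are left out here to keep the control flow visible.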

Real-time interactive applications

Applications such as real-time customer support bots or instant video captioning require "fluid" AI that feels instantaneous. In this category, Mercury 2 and Gemini 3.1 Flash-Lite are the superior choices. Mercury 2 offers throughput nearly ten times faster than standard reasoning models, making it ideal for real-time document drafting. Gemini 3.1 Flash-Lite provides a balanced multimodal capability, processing text, audio, and images within a unified context at approximately 2.5x the speed of earlier generations, all while supporting a 1-million-token context window.

Context Window: From Snippets to Entire Repositories

The context window acts as the "short-term memory" of the model. In 2026, the industry has split between standard windows (128K) and repository-scale capacities (1M-10M).

- Llama 4 Scout: 10,000,000 tokens

- Grok 4.20: 2,000,000 tokens

- GPT-5.5 Pro: 1,050,000 tokens

- Gemini 3.1 Pro: Approximately 1,048,576 tokens

- DeepSeek V4 Pro: 1,000,000 tokens

When does context size matter?

A 128K context window—standard for models like DeepSeek-V3.2—is now the baseline for basic conversational chat and summarizing individual articles. However, professional software engineering requires "whole-system" awareness.

A 1-million-token window allows an AI agent to ingest an entire software repository, including all source files, documentation, and historical logs, in a single forward pass. This prevents the "memory drift" associated with traditional RAG systems where relevant data might be missed during chunking. A concrete example is a codebase refactor: a model with 1M tokens can understand how a change in a core database schema affects fifty different API endpoints across separate files, whereas a smaller model might only "see" a few files at a time, leading to broken dependencies.
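Before relying on a large window, it helps to estimate whether a repository actually fits. The sketch below uses a rough heuristic of four characters per token, which is an assumption and not the tokenizer of any model listed here; real counts vary by tokenizer and language. The file extensions and the 8,000-token output reserve are likewise illustrative defaults.

```python
import os

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers differ


def estimate_repo_tokens(root: str, extensions: tuple[str, ...] = (".py", ".md", ".txt")) -> int:
    """Estimate the token count of all matching text files under root."""
    total_chars = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in filenames:
            if filename.endswith(extensions):
                path = os.path.join(dirpath, filename)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total_chars += len(handle.read())
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(token_count: int, context_window: int, reserve_for_output: int = 8_000) -> bool:
    """Check that the prompt plus a reserved output budget fits the window."""
    return token_count + reserve_for_output <= context_window


# A hypothetical 600K-token repository against two window sizes from the text.
print(fits_in_context(600_000, 1_000_000))  # True
print(fits_in_context(600_000, 128_000))    # False
```

In practice, `estimate_repo_tokens("path/to/repo")` would supply the first argument; a repository that does not fit still needs chunking or retrieval.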

Economic Comparison: Unit Price per 1 Million Tokens

The following table uses a Blended USD/1M Tokens metric, assuming a 3:1 ratio of input-to-output tokens to reflect real-world usage patterns.

| Model | Blended Price (USD per 1M tokens) | Relative Value | Discount via CometAPI |
|---|---|---|---|
| GPT-5.5 (xhigh) | Approximately $11.25 | Premium | 20% OFF |
| Claude Opus 4.7 (max) | Approximately $10.00 | High | 20% OFF |
| Gemini 3.1 Pro | Approximately $4.50 | Balanced | 20% OFF |
| Kimi K2.6 | Approximately $1.71 | High-Value | 20% OFF |
| DeepSeek V4 Pro | Approximately $0.53 | Extreme-Value | 20% OFF |
| Qwen3.5 0.8B | Approximately $0.02 | Utility | 20% OFF |

All rates verified as of May 2026. Discounted rates available through unified gateways are typically 20% below official vendor rates.
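The blended metric is a weighted average of input and output prices at a 3:1 token ratio. The sketch below shows the arithmetic; the $2.00 input and $8.00 output prices are made-up example values, not the rates of any model in the table, since the article does not list separate input and output prices.

```python
def blended_price(
    input_price: float,
    output_price: float,
    input_ratio: int = 3,
    output_ratio: int = 1,
) -> float:
    """Weighted average price per 1M tokens for a given input:output mix."""
    total_parts = input_ratio + output_ratio
    return (input_price * input_ratio + output_price * output_ratio) / total_parts


# Hypothetical prices (USD per 1M tokens), chosen only to show the arithmetic.
example = blended_price(input_price=2.00, output_price=8.00)
discounted = example * (1 - 0.20)  # applying the 20% discount from the table

print(f"Blended: ${example:.2f} per 1M tokens")        # $3.50
print(f"After 20% off: ${discounted:.2f} per 1M tokens")  # $2.80
```

Workloads that generate far more output than input, such as long-form drafting, will see a higher effective price than the 3:1 blend suggests.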

Cost Optimization Strategy

To assist architectural planning, we have estimated monthly expenditures for three common growth tiers.

- Small developer team (10M tokens/month): Teams primarily using Kimi K2.6 for feature builds and DeepSeek V4 Flash for simple logic will see a monthly expenditure in the range of $15 to $40. This allows for aggressive prototyping with a financial burden no larger than a standard SaaS subscription.

- Mid-sized SaaS (100M tokens/month): A startup scaling an AI-driven automation platform using Claude Sonnet 4.6 and Gemini 3.1 Flash can expect monthly costs between $250 and $550. By utilizing prompt caching available on these models, the effective cost often drops by an additional 15%.

- Large enterprise (1B tokens/month): Global firms running high-concurrency agentic workflows with GPT-5.5 and Claude Opus 4.7 will likely spend in the range of $3,000 to $6,500 monthly. At this scale, integrating via a unified API gateway becomes essential for centralized billing and avoiding the overhead of managing separate contracts with multiple vendors.
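Monthly spend for any tier follows from token volume and the blended price per million tokens. The sketch below is a simplified estimator under stated assumptions: it covers a single model at a flat blended rate, and the optional `caching_savings` parameter is a hypothetical knob for the prompt-caching reduction mentioned above. Only the Kimi K2.6 price comes from the table; the other models named in the tiers have no listed price in this article, so they are left out.

```python
def monthly_cost(
    tokens_per_month: int,
    blended_price_per_million: float,
    caching_savings: float = 0.0,
) -> float:
    """Estimate monthly spend in USD for one model at a flat blended rate."""
    if not 0.0 <= caching_savings < 1.0:
        raise ValueError("caching_savings must be in [0, 1)")
    millions = tokens_per_month / 1_000_000
    return millions * blended_price_per_million * (1 - caching_savings)


# Small-team tier: 10M tokens/month, all on Kimi K2.6 at $1.71 per 1M tokens.
small_team = monthly_cost(10_000_000, 1.71)
print(f"Kimi K2.6 only: ${small_team:.2f}/month")  # $17.10

# Same volume with an assumed 15% reduction from prompt caching.
with_caching = monthly_cost(10_000_000, 1.71, caching_savings=0.15)
print(f"With 15% caching savings: ${with_caching:.2f}/month")
```

Real bills depend on the model mix, the actual input-to-output ratio, and any gateway discount, so this is a starting point for budgeting rather than a forecast.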

Conclusion: Choosing Your Path in 2026

The era of the "all-purpose model" is over. Modern AI architecture requires orchestrating a fleet of specialized models: GPT-5.5 for high-compute reasoning, Mercury 2 for interactivity, and DeepSeek V4 for high-volume execution. By integrating once with CometAPI, developers gain the portability to swap models as benchmarks evolve while securing a permanent 20-40% discount on every request.
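One simple way to express this kind of orchestration is a static routing table keyed by task type. The sketch below is illustrative only: the task categories, the fallback choice, and the model name strings are assumptions drawn from the sentence above, not identifiers from any provider's API.

```python
# Task categories and model labels mirror the pairing described above.
ROUTES = {
    "reasoning": "GPT-5.5",
    "interactive": "Mercury 2",
    "bulk": "DeepSeek V4",
}


def pick_model(task_type: str, default: str = "DeepSeek V4") -> str:
    """Return the model label for a task type, falling back to the cheapest tier."""
    return ROUTES.get(task_type, default)


for task in ("reasoning", "interactive", "bulk", "unknown"):
    print(f"{task} -> {pick_model(task)}")
```

Keeping the mapping in one place means a model can be swapped when benchmarks change without touching the calling code.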

FAQ

Which AI model is currently the most intelligent?

According to the Artificial Analysis Intelligence Index v4.0, GPT-5.5 (xhigh) is the most intelligent model currently available, with a score of 60. It is followed closely by GPT-5.5 (high) at 59 and Claude Opus 4.7 (max) at 57.

What is the fastest AI model for real-time applications?

Mercury 2 is the speed champion of 2026, delivering approximately 859.1 tokens per second. For low latency (TTFT), NVIDIA Nemotron 3 Nano leads with a response time of approximately 0.40 seconds.

How high does an Intelligence Index score need to be for production agents?

For basic automation or classification, a score between 30 and 40 (like GPT-5.4 nano) is often sufficient. However, for "Agentic Engineering" where the AI manages codebases or entire browser sessions, a score of 54 or higher (such as Kimi K2.6 or GPT-5.5) is recommended to ensure consistency in long-horizon planning.
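A threshold like this can be turned into a selection rule: among models that meet the minimum index, pick the cheapest. The sketch below uses only the models that appear in both the index table and the price table of this article, and assumes the "DeepSeek V4 Pro (Max)" index applies to the "DeepSeek V4 Pro" price row.

```python
from typing import Optional

# (model, Intelligence Index, blended USD per 1M tokens), from this article's tables.
CATALOG = [
    ("GPT-5.5 (xhigh)", 60, 11.25),
    ("Claude Opus 4.7 (max)", 57, 10.00),
    ("Gemini 3.1 Pro", 57, 4.50),
    ("Kimi K2.6", 54, 1.71),
    ("DeepSeek V4 Pro", 52, 0.53),
]


def cheapest_at_or_above(min_index: int) -> Optional[str]:
    """Return the lowest-priced model whose index meets the threshold, if any."""
    eligible = [entry for entry in CATALOG if entry[1] >= min_index]
    if not eligible:
        return None
    return min(eligible, key=lambda entry: entry[2])[0]


print(cheapest_at_or_above(54))  # Kimi K2.6
print(cheapest_at_or_above(55))  # Gemini 3.1 Pro
print(cheapest_at_or_above(61))  # None
```

Price is only one tie-breaker; latency or context window could be added as further filters in the same way.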

With similar pricing, should I choose GPT-5.5 or Claude Opus 4.7?

If your workflow involves terminal execution and "Vibe Coding," GPT-5.5 generally excels in those specific benchmarks. However, if you require extreme consistency for professional writing, legal research, or multi-day agent cycles with low hallucination rates, Claude Opus 4.7 is the documented leader in those categories.

What is the actual performance gap between open-weights (DeepSeek) and proprietary models?

In 2026, the gap has narrowed to approximately 10-15% in raw reasoning benchmarks. While proprietary flagships like GPT-5.5 (xhigh) still lead in "peak" logic (Index 60), open-weight models like DeepSeek V4 Pro (Index 52) and Kimi K2.6 (Index 54) provide over 85% of the capability at roughly 1/10th the cost.

How can I reduce my overall API costs for these models?

Using a unified API layer like CometAPI allows you to access the entire catalog at rates 20% to 40% lower than official vendor pricing through bulk purchasing and intelligent path routing.

Which model has the largest context window for long documents?

Llama 4 Scout currently supports the largest context window in the market at 10 million tokens. Grok 4.20 follows with 2 million tokens, while GPT-5.5 Pro, Gemini 3.1 Pro, and DeepSeek V4 Pro all support approximately 1 million tokens.

Is there a way to test these benchmarks without a high initial cost?

Yes. You can sign up for a free account at CometAPI to receive test credits with no credit card required, allowing you to run comparative performance tests across over 500 models in the built-in Playground.