Key features (quick list)

- Two model variants: `grok-4-fast-reasoning` and `grok-4-fast-non-reasoning` (tunable for depth vs. speed).

- Very large context window: up to 2,000,000 tokens, enabling extremely long documents, multi-hour transcripts, and multi-document workflows.

- Token efficiency and cost: xAI reports that Grok-4-Fast uses ~40% fewer thinking tokens on average than Grok-4, and claims a ~98% reduction in the cost of reaching the same performance on the benchmarks it reports.

- Native tool / browsing integration: trained end-to-end with tool-use RL for web/X browsing, code execution and agentic search behaviors.

- Multimodal input and function calling: the model accepts image inputs, and the API supports function calling and structured response formats.
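As a minimal sketch of the multimodal and function-calling features listed above, the following builds a request body. It assumes the endpoint accepts the OpenAI-compatible Chat Completions schema (`messages` with `image_url` content parts, a `tools` array, and `tool_choice`); the tool `lookup_order` and the image URL are hypothetical placeholders. Check the API doc for the exact fields supported.

```python
import json
from typing import Any, Optional


def build_payload(question: str, image_url: Optional[str] = None) -> dict[str, Any]:
    """Build a chat request with optional image input and one function tool.

    Assumes an OpenAI-compatible schema; the tool below is hypothetical.
    """
    content: list[dict[str, Any]] = [{"type": "text", "text": question}]
    if image_url:
        # Multimodal input: an image passed as a separate content part.
        content.append({"type": "image_url", "image_url": {"url": image_url}})
    return {
        "model": "grok-4-fast-reasoning",
        "messages": [{"role": "user", "content": content}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "lookup_order",  # hypothetical tool for illustration
                    "description": "Look up an order by its ID.",
                    "parameters": {  # JSON Schema for the tool's arguments
                        "type": "object",
                        "properties": {"order_id": {"type": "string"}},
                        "required": ["order_id"],
                    },
                },
            }
        ],
        "tool_choice": "auto",  # let the model decide whether to call the tool
    }


payload = build_payload("What is in this image?", "https://example.com/photo.jpg")
print(json.dumps(payload, indent=2))
```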

Technical details

Unified reasoning architecture: Grok-4-Fast uses a single set of model weights that can be steered toward reasoning (long chain-of-thought) or non-reasoning (fast reply) behavior through system prompts or variant selection, rather than shipping two entirely separate backbone models. According to xAI, this reduces switching latency and token cost for mixed workloads.
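Because both behaviors are exposed as separate model IDs, switching between them can be as simple as choosing the `model` string per request. The sketch below does that and also guards against the 2,000,000-token context window. The 4-characters-per-token estimate is a rough heuristic assumption, not the model's real tokenizer, so the check is only approximate and ignores the output budget.

```python
REASONING_MODEL = "grok-4-fast-reasoning"
NON_REASONING_MODEL = "grok-4-fast-non-reasoning"
CONTEXT_LIMIT_TOKENS = 2_000_000  # published context window


def estimate_tokens(text: str) -> int:
    """Very rough token estimate (assumption: ~4 characters per token)."""
    return len(text) // 4 + 1


def choose_model(prompt: str, needs_deep_reasoning: bool) -> str:
    """Pick a model ID per request; reject prompts that clearly exceed the window."""
    if estimate_tokens(prompt) > CONTEXT_LIMIT_TOKENS:
        raise ValueError("Prompt likely exceeds the 2,000,000-token context window")
    return REASONING_MODEL if needs_deep_reasoning else NON_REASONING_MODEL


print(choose_model("Prove that the sum of two even numbers is even.", True))
print(choose_model("What is the capital of France?", False))
```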

Reinforcement learning for intelligence density: xAI reports using large-scale reinforcement learning focused on intelligence density (maximizing performance per token), which is the basis for the stated token-efficiency gains.
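To see how the two reported figures relate, a simplified cost model treats workload cost as tokens multiplied by per-token price, so relative cost is the product of the token ratio and the price ratio. This is a back-of-envelope illustration, not xAI's methodology: under that assumption, a 40% token reduction alone would cut cost by only 40%, and the rest of the claimed ~98% reduction would have to come from per-token pricing or other factors.

```python
def relative_cost(token_ratio: float, price_ratio: float) -> float:
    """Cost relative to a baseline, assuming cost = tokens x price per token."""
    return token_ratio * price_ratio


def implied_price_ratio(cost_ratio: float, token_ratio: float) -> float:
    """Per-token price ratio needed to reach cost_ratio, under the same assumption."""
    return cost_ratio / token_ratio


token_ratio = 1 - 0.40  # ~40% fewer thinking tokens (xAI's reported average)
cost_ratio = 1 - 0.98   # ~98% lower cost (xAI's claim)

# Token savings alone, at an unchanged per-token price:
print(relative_cost(token_ratio, 1.0))
# Per-token price ratio that would account for the rest in this toy model:
print(round(implied_price_ratio(cost_ratio, token_ratio), 4))
```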

Tool conditioning and agentic search: Grok-4-Fast was trained and evaluated on tasks that require invoking tools (web browsing, X search, code execution). The model is presented as adept at choosing when to call tools and how to stitch browsing evidence into answers.
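On the client side, tool use usually follows a loop: the model returns tool calls, the application executes them, and the results are sent back as `tool` messages. The sketch below shows the dispatch step, assuming OpenAI-compatible `tool_calls` in the assistant message; `search_docs` is a hypothetical stub standing in for a real search backend. Built-in browsing tools may run on the provider's side instead, in which case this loop is not needed.

```python
import json
from typing import Any, Callable


def search_docs(query: str) -> str:
    """Hypothetical local tool; a real one would query a search backend."""
    return f"stub results for: {query}"


# Registry mapping tool names (as declared to the model) to local functions.
TOOLS: dict[str, Callable[..., str]] = {"search_docs": search_docs}


def run_tool_calls(assistant_message: dict[str, Any]) -> list[dict[str, Any]]:
    """Execute each tool call in an assistant message and return the
    'tool' role messages to append to the conversation history."""
    results = []
    for call in assistant_message.get("tool_calls") or []:
        fn = call["function"]
        handler = TOOLS[fn["name"]]
        args = json.loads(fn["arguments"] or "{}")  # arguments arrive as a JSON string
        results.append(
            {"role": "tool", "tool_call_id": call["id"], "content": handler(**args)}
        )
    return results


msg = {
    "role": "assistant",
    "content": None,
    "tool_calls": [
        {
            "id": "call_1",
            "type": "function",
            "function": {"name": "search_docs", "arguments": '{"query": "grok context window"}'},
        }
    ],
}
tool_msgs = run_tool_calls(msg)
print(tool_msgs)
```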

Benchmark performance

xAI reports improvements on BrowseComp (44.9% pass@1 vs. 43.0% for Grok-4) and SimpleQA (95.0% vs. 94.0%), plus large gains on certain Chinese-language browsing/search evaluations. xAI also reports a top ranking in LMArena’s Search Arena for a grok-4-fast-search variant.

Typical & recommended use cases

- High-throughput search and retrieval — search agents that need fast multi-hop web reasoning.

- Agentic assistants & bots — agents that combine browsing, code execution, and asynchronous tool calls (where allowed).

- Cost-sensitive production deployments — services that require many calls and want improved token-to-utility economics versus a heavier base model.

- Developer experimentation — prototyping multimodal or web-augmented flows that rely on fast, repeated queries.

How to access the Grok 4 Fast API

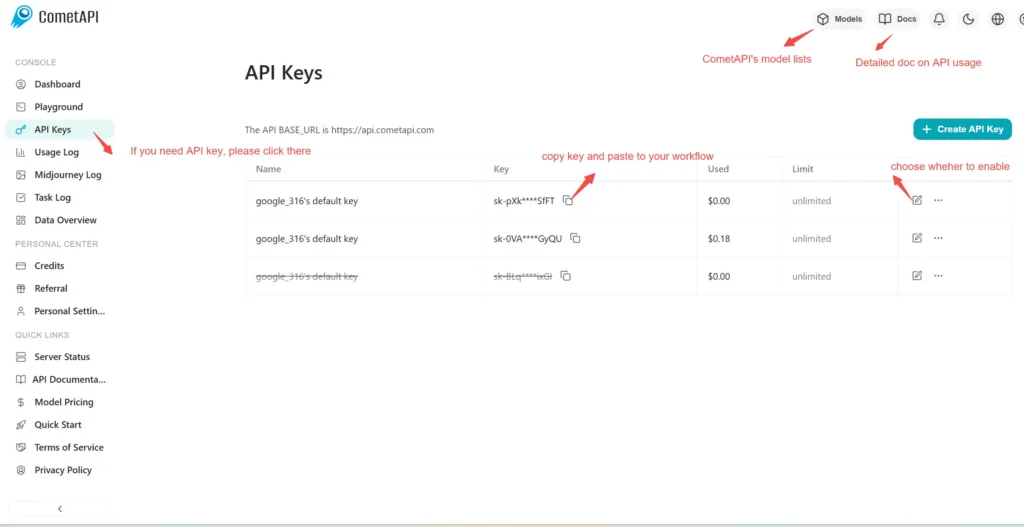

Step 1: Sign Up for an API Key

Log in to cometapi.com; if you are not yet a user, register first. In your CometAPI console, open the personal center, click “Add Token” under API tokens, and submit to generate your API key (a token of the form sk-xxxxx).
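Rather than hard-coding the sk-xxxxx token in source files, a common practice is to read it from an environment variable. A minimal sketch, assuming a variable named `COMETAPI_KEY` (a hypothetical name; use whatever your deployment defines):

```python
import os


def load_api_key(env_var: str = "COMETAPI_KEY") -> str:
    """Read the CometAPI token from an environment variable (name is hypothetical)."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set {env_var} to the token key from your CometAPI console")
    if not key.startswith("sk-"):
        # Tokens issued by the console take the form sk-xxxxx.
        raise ValueError("Expected a token of the form sk-xxxxx")
    return key
```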

Step 2: Send Requests to the Grok 4 Fast API

Select the grok-4-fast-reasoning or grok-4-fast-non-reasoning endpoint to send the API request, and set the request body. The request method and request body format are described in our website's API doc, which also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account. The base URL uses the Chat format: https://api.cometapi.com/v1/chat/completions.

Insert your question or request into the content field; this is what the model will respond to.
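The request described in Step 2 can be sketched with only the Python standard library. This assumes the endpoint follows the OpenAI-compatible Chat Completions convention: a JSON body with `model` and `messages`, and the key sent as a `Bearer` token in the `Authorization` header. Confirm both details against the CometAPI API doc before relying on them.

```python
import json
import urllib.request

BASE_URL = "https://api.cometapi.com/v1/chat/completions"


def build_request(
    api_key: str, question: str, model: str = "grok-4-fast-non-reasoning"
) -> urllib.request.Request:
    """Build a POST request; the question goes into the message content field."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        BASE_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Usage: `send(build_request("<YOUR_API_KEY>", "Summarize this transcript."))`. Building and sending are kept separate so the request can be inspected or tested without network access.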

Step 3: Retrieve and Verify Results

Process the API response to extract the generated answer. The API returns the task status along with the output data.
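Assuming the response follows the OpenAI-compatible Chat Completions shape (a `choices` list whose entries carry a `message` with `content`), extracting the answer can look like the sketch below. The sample response is illustrative, not real API output.

```python
from typing import Any


def extract_answer(response: dict[str, Any]) -> str:
    """Return the text of the first choice, failing loudly on unexpected shapes."""
    choices = response.get("choices") or []
    if not choices:
        raise ValueError(f"No choices in response: {response!r}")
    message = choices[0].get("message") or {}
    content = message.get("content")
    if content is None:
        # A choice can carry tool calls instead of text.
        raise ValueError("First choice has no text content")
    return content


sample = {
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "Hello!"},
            "finish_reason": "stop",
        }
    ],
    "usage": {"prompt_tokens": 5, "completion_tokens": 2, "total_tokens": 7},
}
print(extract_answer(sample))
```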