Claude Opus 4.7, Anthropic’s latest flagship hybrid reasoning model, is now available. Released in mid-April 2026, it delivers a step-change in agentic software engineering, long-horizon reasoning, and multimodal understanding while retaining the full 1-million-token context window introduced in Opus 4.6. Early benchmarks show a 13% lift on Anthropic’s internal 93-task coding evaluation, 3× more resolved production tasks on Rakuten-SWE-Bench, and 70% clearance on CursorBench—clearly outperforming its predecessor.

For developers, enterprises, and AI builders seeking frontier performance at scale, Claude Opus 4.7 is now live on CometAPI—the unified AI gateway that already powers access to 500+ models from Anthropic, OpenAI, Google, and others at up to 20% lower cost than direct Anthropic pricing. Whether you’re building autonomous coding agents, processing enterprise documents at scale, or orchestrating multi-tool workflows, Opus 4.7 sets a new standard. And CometAPI makes it instantly accessible, cost-effective, and future-proof.

What Is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic’s most capable generally available model as of April 2026. It is a hybrid reasoning large language model optimized for complex, long-running tasks that previous models could not reliably complete.

Key technical specifications include:

- 1 million token context window (equivalent to ~1,500 pages of text), enabling it to maintain coherence across massive codebases, long documents, or multi-session agent workflows.

- Hybrid/adaptive reasoning: The model automatically scales its “thinking” effort based on task complexity—quick responses for simple queries, deeper analysis for challenging ones—without requiring manual prompting for extended thinking (a change from prior versions).

- Multimodal vision: Supports images up to 2,576 pixels on the long edge (~3.75 megapixels), more than 3× the resolution of previous Claude models. This unlocks superior performance on screenshots, diagrams, charts, and visual data extraction.

- Output capabilities: Up to 128k tokens per response, with improved instruction-following, self-verification, and error recovery.
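As a rough illustration of the 2,576-pixel long-edge limit listed above, the sketch below computes the dimensions an oversized image would be scaled down to before upload. The helper name `fit_long_edge` and the decision to downscale on the client side are assumptions made for illustration; the source does not say how larger images are handled.

```python
MAX_LONG_EDGE = 2576  # long-edge limit quoted in the specifications above


def fit_long_edge(width: int, height: int, max_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled so the longer side is at most max_edge.

    Hypothetical helper: the aspect ratio is preserved, and images that
    already fit are returned unchanged.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_edge:
        return width, height
    scale = max_edge / long_edge
    # Round to the nearest pixel, never dropping below 1 on the short side.
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    print(fit_long_edge(5152, 2912))  # image twice the limit on its long edge
    print(fit_long_edge(1920, 1080))  # already within the limit
```

A 5152×2912 image comes back as 2576×1456, which matches the ~3.75 megapixel figure quoted above.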

Anthropic positions Opus 4.7 as the go-to model for “frontier intelligence” where reliability matters most—think senior-level software engineering, financial analysis, legal document reasoning, and autonomous AI agents that run for hours or days with minimal human oversight. It is not Anthropic’s absolute most powerful internal model (that distinction belongs to the restricted Claude Mythos Preview, used in Project Glasswing for cybersecurity), but it is the strongest model broadly available to developers and enterprises.

Key Features of Claude Opus 4.7

1. Adaptive Hybrid Reasoning and Self-Correction

The model dynamically adjusts reasoning effort. For complex tasks, it engages deeper chain-of-thought internally before responding. It also “catches its own mistakes during planning” and exhibits stronger deductive logic where Opus 4.6 previously struggled. This reduces hallucinations and improves calibration — the model is more honest about its limits and reports missing data instead of fabricating fallbacks.

2. High-Resolution Vision & Multimodal Understanding

Supports images up to 2,576 pixels on the long edge (~3.75 megapixels), more than 3× previous models. Excels at dense screenshots, technical diagrams, chemical structures, and slide decks.

Agentic Autonomy and Memory Across Sessions:

- Stronger role fidelity and instruction-following in multi-agent coordination.

- Drives long-running workflows with minimal oversight.

- Uses memory to learn across multi-day or multi-session projects.

- Excels at async automations, CI/CD pipelines, and orchestrating multiple tools.

- Improved error recovery, loop resistance, and graceful degradation when tools fail.

3. Enhanced AI Agents & Long-Horizon Workflows

Improved loop resistance, graceful error recovery, and tool-use reliability. Supports task budgets (public beta) and better coordination in multi-agent setups. The new “xhigh” effort level gives developers precise control over speed vs. depth.

4. Advanced Software Engineering & Agentic Coding

Opus 4.7 is optimized for large codebases, multi-file refactoring, and sustained agent workflows. It catches logical faults early, fixes its own code, and maintains coherence over hours-long sessions. New file-system memory helps it remember notes across multi-session projects.

Higher-quality outputs for interfaces, slides, docs, and spreadsheets. More “tasteful and creative” while staying strictly on-instruction. 21% fewer document reasoning errors on enterprise benchmarks.

5. Safety & Enterprise-Ready Controls

Cyber-risk safeguards, US-only inference option (1.1× pricing), and strong resistance to prompt injection. Ideal for regulated industries.

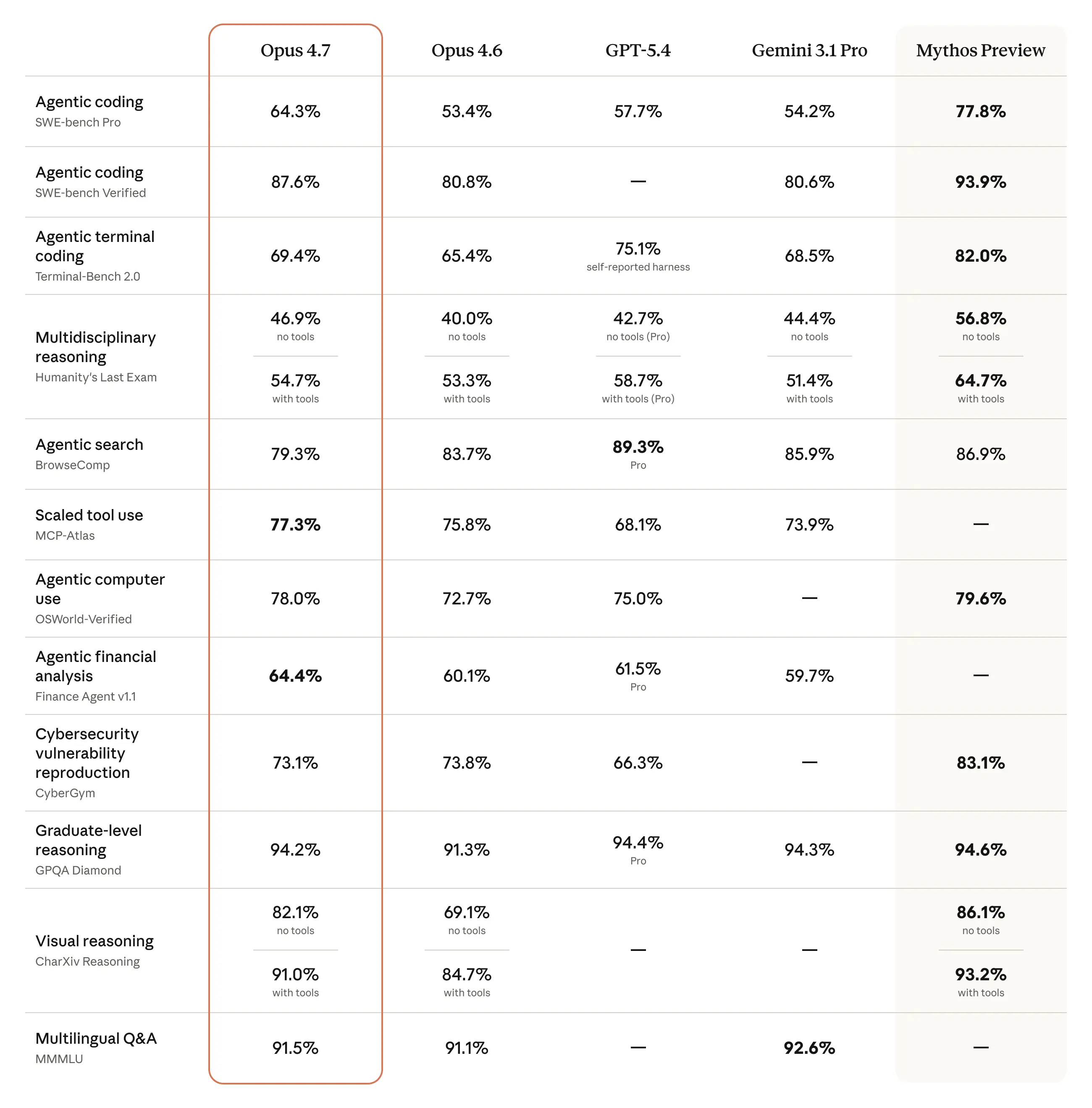

Performance Benchmarks: Data-Backed Proof of Superiority

Anthropic and third-party evaluations confirm Opus 4.7 sets new records in coding, agentic tasks, and knowledge work. Here are the key benchmarks (sourced directly from Anthropic’s April 16, 2026 announcement):

Additional highlights:

- TBench: Passed three tasks previous models failed, including race-condition fixes.

- BigLaw Bench (Harvey): 90.9% accuracy at high effort, with better calibration on ambiguous legal edits.

- CyberGym & SWE-bench Multimodal: Strong gains while maintaining safety guardrails.

Key takeaways from the data:

- Coding & agentic performance: The 13% lift on the 93-task benchmark is particularly meaningful because it includes tasks that neither Opus 4.6 nor Sonnet 4.6 could solve. On Rakuten-SWE-Bench, the 3× production-task resolution rate directly translates to fewer human interventions in real engineering workflows.

- Visual & multimodal leap: The jump from 54.5% to 98.5% on visual-acuity benchmarks enables reliable interpretation of complex diagrams, UI screenshots, and scientific figures—critical for design-to-code pipelines and technical documentation.

- Efficiency gains: Opus 4.7 achieves higher success rates with fewer tokens and lower latency on medium-complexity tasks thanks to adaptive thinking. Low-effort mode on 4.7 roughly matches medium-effort 4.6 while consuming less compute.

Independent SWE-Bench Verified leaderboards (as of April 2026) place Claude-family models at the top, with Opus-class performance routinely exceeding 75-80% resolution when paired with agent scaffolds like Claude Code. Opus 4.7’s improvements compound these results further for long-running, multi-file projects.

These gains stem from training refinements that emphasize rigor, self-verification, and long-horizon consistency—making Opus 4.7 particularly valuable for production environments where hallucinations or incomplete work are costly.

Claude Opus 4.7 Pricing

Official Anthropic Pricing (April 2026):

- Input: $5 per million tokens

- Output: $25 per million tokens

- Prompt caching: up to 90% savings on repeated context

- Batch API: 50% off

- US-only inference: 1.1× multiplier

- Long-context (>200K) may incur surcharges on some legacy paths, but 1M is standard for 4.7.

CometAPI Pricing: Save Up to 20% with Unified Access

- Input: $4 per million tokens

- Output: $20 per million tokens

That’s a 20% savings across the board — plus CometAPI’s smart routing, volume discounts, and pay-as-you-go model with no minimums. Prompt caching and batch efficiencies carry over seamlessly.

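For high-volume users, the difference compounds: at these rates the saving is $1 per million input tokens and $5 per million output tokens. A project consuming 10 million input and 10 million output tokens monthly saves about $60 per month ($720 per year) on CometAPI versus direct Anthropic billing; reaching $10,000+ in annual savings takes roughly 280 million tokens per month at an even input/output split.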
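The savings arithmetic can be sketched directly from the per-million-token prices listed above. The split between input and output tokens is an assumption, and the sketch ignores prompt caching, batch discounts, and volume tiers, so treat the result as a rough estimate rather than a quote.

```python
# Per-million-token prices (USD) taken from the two pricing lists above.
ANTHROPIC = {"input": 5.00, "output": 25.00}
COMETAPI = {"input": 4.00, "output": 20.00}


def monthly_cost(prices: dict, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one month of usage at the given per-million prices."""
    return (
        (input_tokens / 1_000_000) * prices["input"]
        + (output_tokens / 1_000_000) * prices["output"]
    )


def annual_savings(input_tokens: int, output_tokens: int) -> float:
    """Yearly price difference between the two lists for a fixed monthly workload."""
    direct = monthly_cost(ANTHROPIC, input_tokens, output_tokens)
    gateway = monthly_cost(COMETAPI, input_tokens, output_tokens)
    return 12 * (direct - gateway)


if __name__ == "__main__":
    # Assumed workload: 10M input and 10M output tokens per month.
    print(annual_savings(10_000_000, 10_000_000))  # 720.0
```

Because output tokens carry the larger absolute discount, output-heavy workloads save more per token than input-heavy ones.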

Comparison Table: Claude Opus 4.7 Pricing Options

| Provider | Input ($/M tokens) | Output ($/M tokens) | Prompt Caching | Unified 500+ Models | Best For |
|---|---|---|---|---|---|
| Anthropic Direct | $5 | $25 | Up to 90% | No | Native Claude ecosystem |
| CometAPI | $4 | $20 | Full support | Yes | Cost savings + simplicity |
| AWS Bedrock | $5 | $25 | Supported | Limited | Enterprise compliance |
| Google Vertex | $5 | $25 | Supported | Limited | Google Cloud users |

CometAPI also offers pay-as-you-go, usage analytics, privacy (no data retention), and an interactive Playground for side-by-side testing—perfect for prototyping before scaling.

How to Access Claude Opus 4.7 via CometAPI (Step-by-Step)

While Opus 4.7 is available directly through claude.ai (Pro/Max/Team/Enterprise plans) and the official Claude API / Bedrock / Vertex AI / Foundry, many developers prefer CometAPI for immediate, affordable, and unified access.

CometAPI delivers the fastest and most economical way to integrate Opus 4.7 into your applications. Here’s exactly how:

- One-line migration: Swap from `claude-opus-4-6` to `claude-opus-4-7` in your code. No endpoint changes are required.

- Sign up for free at CometAPI and generate your API key in under 60 seconds.

- Use the unified endpoint, with no need to change providers or manage separate Anthropic credentials. Simply set `model: "claude-opus-4-7"` (or the alias if available).

- Pricing advantage: While official Anthropic pricing is $5 input / $25 output per million tokens, CometAPI historically offers Claude Opus-class models at ~20% lower rates (e.g., Opus 4.6 at $4/$20).

- SDK & tools: Official SDKs for Python, Node.js, plus Postman collections, interactive Playground, and built-in A/B testing between models.

CometAPI exposes Opus 4.7 through the same style of Messages API that many Anthropic users already know. Its model page gives the model id as claude-opus-4-7, lists the endpoint as /v1/messages, and provides sample code for Python, JavaScript, and curl. CometAPI also notes a claude-opus-4-7-thinking snapshot in its versions section.

A minimal integration looks like this:

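```python
import os

import anthropic

# Read the key from the environment; the placeholder is only a fallback.
COMETAPI_KEY = os.environ.get("COMETAPI_KEY") or "<YOUR_COMETAPI_KEY>"
BASE_URL = "https://api.cometapi.com"

# Point the Anthropic SDK at CometAPI instead of the default endpoint.
client = anthropic.Anthropic(
    base_url=BASE_URL,
    api_key=COMETAPI_KEY,
)

# Send a single-turn request to Opus 4.7.
message = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Hello, Claude"}],
)

# The first content block holds the text reply.
print(message.content[0].text)
```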

The same pattern works in JavaScript, and CometAPI’s curl example also uses model: "claude-opus-4-7" against /v1/messages. For teams already using the Anthropic SDK, this makes the migration path very simple: keep the SDK, switch the base URL, and select the model id you want.
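For teams not using the SDK, the request can be sketched with the Python standard library. This only builds the request and does not send it. The header names below mirror what the Anthropic SDK sends and are an assumption here; the source confirms only the /v1/messages path and the model id, so check CometAPI's own curl sample for the exact headers.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.cometapi.com"  # same base URL as the SDK example above


def build_messages_request(
    prompt: str,
    model: str = "claude-opus-4-7",
    max_tokens: int = 1024,
) -> urllib.request.Request:
    """Build (but do not send) a POST request for the /v1/messages endpoint."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {
        # Assumption: these mirror the headers the Anthropic SDK sends.
        "x-api-key": os.environ.get("COMETAPI_KEY", "<YOUR_COMETAPI_KEY>"),
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    req = build_messages_request("Hello, Claude")
    print(req.get_method(), req.full_url)
    # To send it, pass req to urllib.request.urlopen and JSON-decode the response.
```

Keeping request construction separate from sending makes the payload easy to inspect or log before any tokens are billed.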

How to access Opus 4.6 via CometAPI, if you still need the older version

If your production environment is already tuned to Opus 4.6, CometAPI also lists claude-opus-4-6 as an available model. Its page describes Opus 4.6 as Anthropic’s Opus-class model for knowledge work and research workflows, with the same CometAPI price structure shown for Opus 4.7. That makes version pinning easy when you need a stable baseline for A/B testing or gradual rollout.

My practical recommendation is this: use Opus 4.7 for new builds, use Opus 4.6 only for controlled comparisons or temporary compatibility, and run a prompt regression test before switching production traffic. That advice follows from Anthropic’s own warning that 4.7 is more literal with instructions and may change how older prompts behave.
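A prompt regression test of the kind recommended above could be sketched as follows. The function `compare_models`, the case format, and the stub `fake_call` are all hypothetical names introduced for illustration; in practice `call_model` would wrap a real API call such as the SDK example earlier in this article.

```python
from typing import Callable, Dict, List, Tuple

# Each case pairs a prompt with a check that returns True for an acceptable reply.
Case = Tuple[str, Callable[[str], bool]]


def compare_models(
    call_model: Callable[[str, str], str],
    cases: List[Case],
    models: Tuple[str, ...] = ("claude-opus-4-6", "claude-opus-4-7"),
) -> Dict[str, float]:
    """Run every case against every model and return the pass rate per model."""
    if not cases:
        raise ValueError("at least one test case is required")
    rates: Dict[str, float] = {}
    for model in models:
        passed = sum(1 for prompt, check in cases if check(call_model(model, prompt)))
        rates[model] = passed / len(cases)
    return rates


if __name__ == "__main__":
    # Stub standing in for a real API call so the sketch runs offline.
    def fake_call(model: str, prompt: str) -> str:
        return "4" if "2 + 2" in prompt else f"{model} reply"

    cases: List[Case] = [
        ("What is 2 + 2? Answer with a number only.", lambda r: r.strip() == "4"),
        ("Reply with the single word OK.", lambda r: r.strip() == "OK"),
    ]
    print(compare_models(fake_call, cases))
```

Comparing pass rates on the same fixed cases gives a simple signal for whether older prompts behave differently under the newer model before production traffic is switched.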

Real-World Use Cases & Recommendations

- Software engineering teams: Hand off your hardest GitHub issues—Opus 4.7 resolves 3× more production tasks on Rakuten-SWE-Bench.

- AI agent builders: Build reliable, long-running automations with built-in memory and error recovery.

- Enterprise knowledge workers: Process dense documents, spreadsheets, and slide decks with 21% fewer errors.

- Creative & design teams: Generate high-quality interfaces and presentations from natural language + high-res images.

CometAPI recommendation: Start with low-effort mode for prototyping, then switch to adaptive or high-effort for final validation. Combine with CometAPI’s model router to automatically fall back to Sonnet 4.6 on simpler subtasks, maximizing both quality and cost efficiency. Most users see 15–30% cost reduction and 2–3× productivity gains in agentic coding within the first week.

Comparison Table: Opus 4.7 vs. Previous Flagships

| Model | SWE-Bench Verified (approx.) | Vision Resolution | Context Window | Pricing (In/Out) | Best Strength |
|---|---|---|---|---|---|
| Opus 4.7 | ~87–88% (projected from lifts) | 2,576 px | 1M | $4/$20 (CometAPI) | Agentic coding + vision |
| Opus 4.6 | 80.8% | ~800 px | 1M | $5/$25 | Strong baseline |
| GPT-5.4 | ~80% | High | 1M+ | Higher | Structured reasoning |
| Gemini 3.1 Pro | 80.6% | Excellent | 2M | Competitive | Multimodal scale |

While GPT-5.4 may edge ahead on synthetic puzzles, Opus 4.7 dominates real-world SWE-bench, agentic reliability, and multimodal tasks. Gemini offers speed but trails in reasoning depth. CometAPI lets you access all of them side-by-side for the best hybrid workflows.

Conclusion:

Claude Opus 4.7 isn’t just another incremental release—it’s a practical leap in what frontier AI can reliably deliver for coding, agents, and professional workflows. With concrete benchmark lifts, higher-resolution vision, and enterprise-grade safety, it’s ready for production today.

By accessing it through CometAPI, you get the same model at 20% lower cost, unified infrastructure, and zero friction. Whether you’re a solo developer prototyping agents or an enterprise team automating complex pipelines, CometAPI makes Opus 4.7 the most cost-effective and developer-friendly choice.

Ready to try it?

Head to CometAPI.com, grab your free API key, and switch your model parameter to claude-opus-4-7 today. Your next breakthrough project is just one API call away.